Every SEO Guide About Claude Gets the Same Thing Wrong

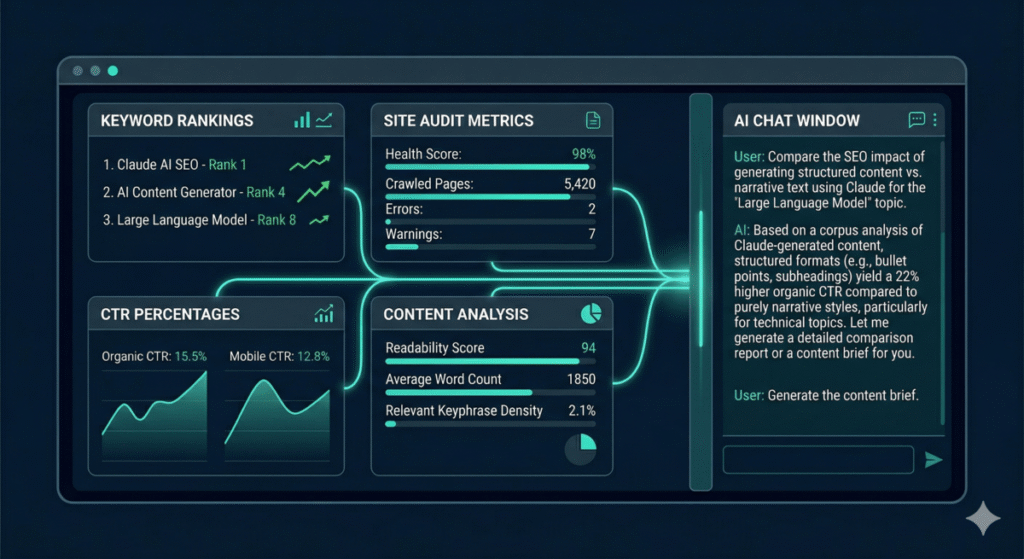

Search for “Claude AI SEO” right now and you’ll find a stack of listicles offering the same advice: paste your content into Claude and ask it to “optimize for SEO.” That’s not a strategy. That’s a party trick – and it produces the same generic, homogenized output that Google is getting better at ignoring every month.

The actual value of Claude for SEO has almost nothing to do with content generation. It’s in the analysis layer – the ability to process an entire site’s GSC data in seconds, cross-reference keyword cannibalization across hundreds of pages, reverse-engineer competitor content strategies, and build technical audit workflows that run on command. The stuff that used to take a senior SEO three to four hours now takes 90 seconds, and the output is often more thorough because Claude doesn’t get bored halfway through a 400-row spreadsheet.

This guide covers everything I actually use Claude for in my SEO practice – not theoretical use cases, but workflows I run on real client accounts every week. You’ll get:

- The technical setup that makes Claude genuinely useful for SEO (not just another chat window)

- Seven specific workflows with exact prompts, covering audits through execution

- How to connect Claude to live SEO data from Google Search Console, Ahrefs, and Semrush

- The critical difference between Claude Code and Claude.ai for SEO work – and why it matters

- Where Claude fails at SEO tasks and how to work around those limitations

- Reusable skill packages that turn multi-step SEO workflows into single commands

- How to use Claude to optimize content for AI citation (the GEO angle most guides ignore)

If you’ve already set up MCP connections or built custom skills, you’ll find workflows here that build directly on that foundation. If you’re starting from scratch, I’ll tell you exactly where to begin.

Why Claude – Not Just “AI” – for SEO

I’m not writing a generic “AI for SEO” post. This is specifically about Claude, and the distinction matters because Claude has structural advantages for SEO work that ChatGPT and Gemini don’t share.

200K token context window. Claude can process an entire site’s worth of content, GSC data, and competitor analysis in a single conversation without losing context. When you’re analyzing 300 pages of crawl data alongside keyword performance, context window size isn’t a nice-to-have – it’s the difference between getting a complete analysis and getting a truncated one that misses the patterns buried in row 247.

Claude Code gives you a full development environment. This is the biggest differentiator most people miss. Claude Code isn’t just a chat interface: it’s a CLI tool that can read and write files, execute scripts, connect to live APIs via MCP, and chain operations together. An SEO workflow in Claude Code can pull GSC data, analyze it, generate a prioritized action plan, write the schema markup, and save everything to files your team can act on, all in one session. The ChatGPT and Gemini chat apps can’t touch your file system or execute arbitrary code in your terminal.

MCP (Model Context Protocol) connects Claude to your actual tools. MCP is an open protocol that lets Claude connect directly to Google Search Console, Ahrefs, Semrush, Notion, Google Sheets, and dozens of other services. No CSV exports, no copy-pasting data between tabs. Claude reads your live data, analyzes it, and writes results back to your documentation tools. The MCP setup post covers the full installation – it takes about 15 minutes.

Extended thinking for complex analysis. Claude’s extended thinking mode lets it reason through multi-step SEO problems – like diagnosing why a page dropped 30 positions after a core update — with a structured reasoning chain you can actually follow and verify. This isn’t a black box; you can see Claude’s reasoning process and catch where it might be making wrong assumptions about your site.

The Setup That Makes Claude Actually Useful for SEO

Before diving into workflows, let’s get the infrastructure right. The difference between “Claude as a slightly better ChatGPT” and “Claude as a genuine SEO operating system” comes down to three things: the client you use, the data connections you establish, and the context you provide.

Step 1: Choose Your Claude Client

| Client | Best For | SEO Limitations |
|---|---|---|
| Claude.ai (browser) | Quick analysis, content review, one-off questions | No file system access, no local MCP servers (built-in integrations only), no automation |
| Claude Desktop (app) | MCP connections, conversational analysis | No code execution, no file writing, no scripting |
| Claude Code (CLI) | Full SEO workflows: audits, automation, reporting, skill packages | Requires terminal comfort (but less than you think) |

My recommendation: If you’re serious about using Claude for SEO, start with Claude Code. Everything in this guide works in Claude Code. Some of it works in Claude Desktop. Very little of it works in claude.ai alone. The introductory post covers installation and first steps – it takes about 10 minutes.

Step 2: Connect Your SEO Data Sources via MCP

This is where Claude goes from “slightly better chatbot” to “SEO command center.” With MCP connections in place, Claude reads live data from the tools you already use, analyzes it on the spot, and writes results wherever you need them.

The MCP setup post covers the full installation process. What follows is the SEO-specific breakdown of each connection, what it unlocks, and which workflows in this guide depend on it.

Google Search Console (via Composio)

Priority: Install first. This is your most valuable MCP connection: free, first-party data that five of the seven workflows in this guide depend on.

What Claude can do with the GSC MCP:

- Search Analytics Query – Pull clicks, impressions, CTR, and position data by query, page, country, device, or date. This powers the CTR audit, cannibalization detection, and content optimization workflows below. You can filter by date ranges, regex patterns, and specific dimensions – everything the GSC API supports, accessible through natural language.

- URL Inspection – Check whether a specific URL is indexed, when it was last crawled, whether it has mobile usability issues, and what canonical Google selected. This is a technical SEO workflow most guides miss entirely: instead of checking URLs one-at-a-time in the GSC interface, you can ask Claude to inspect a batch of URLs and flag any with indexation problems.

- Sitemap Management – List all sitemaps, check submission status, error counts, and indexed vs. submitted page counts. Useful for diagnosing why pages aren’t being discovered.

Example you can run immediately after setup:

List all my GSC properties. For my primary domain, pull the last 90 days

of search analytics grouped by page. Show me the top 30 pages by impressions

with clicks, CTR, and average position. Then inspect the 3 lowest-CTR pages

and tell me if Google is seeing any indexation or mobile usability issues.

That single prompt combines Search Analytics and URL Inspection – two separate workflows in the GSC interface – into one 30-second operation.

Ahrefs (Official MCP Server)

Priority: Install second. The Ahrefs MCP is massive – 80+ tools across six product areas. Here’s what matters most for SEO workflows:

Site Explorer – The backbone of competitive analysis:

- Domain Rating and URL Rating history — track authority trends over time

- Organic keywords — every keyword a domain ranks for, with position, volume, traffic, and difficulty

- Organic competitors — find domains competing for the same keywords

- Top pages by traffic — see which pages drive the most organic visits

- All backlinks, referring domains, anchor text distribution — full link profile analysis

- Broken backlinks — find link reclamation opportunities

- Metrics by country — geographic performance breakdown

Keywords Explorer — Keyword research without leaving Claude:

- Keyword overview — volume, difficulty, CPC, clicks data, SERP features

- Matching terms and related terms — expand a seed keyword into a full keyword map

- Search suggestions — autocomplete-style keyword ideas

- Volume by country — see where demand exists geographically

- Volume history — spot seasonal patterns and trending topics

Site Audit — Technical SEO directly from Claude:

- Issue detection — all technical issues categorized and prioritized

- Page explorer — filter pages by any crawl metric (status code, word count, load time, etc.)

- Page content — pull the actual HTML and rendered text of any crawled page, useful for on-page analysis without leaving your session

Rank Tracker — Keyword position monitoring:

- Keyword ranking overview — current positions for all tracked keywords

- Competitor rankings — see how competitors rank for the same keywords

- SERP overview — what SERP features appear for each keyword

Brand Radar — This is the one most people don’t know about, and it’s critical for the GEO strategy covered later in this post:

- AI responses — see how AI models (Claude, ChatGPT, Perplexity) mention your brand

- Cited domains and cited pages — which of your pages are being cited in AI responses

- Impressions and mentions history — track your AI visibility over time

- Share of voice — how your AI mentions compare to competitors

Ahrefs GSC integration — Ahrefs also has its own GSC connection that provides additional analysis layers: keyword history, page history, CTR by position curves, performance trends, and anonymous query estimation. This gives you a second lens on your GSC data with Ahrefs’ analytical overlays.

Batch Analysis — Analyze multiple URLs or domains in a single request. Feed Claude a list of 50 competitor pages and get Domain Rating, organic traffic, backlinks, and keyword counts for all of them at once.

Semrush (MCP Server)

Priority: Install if you use Semrush. The Semrush MCP covers the core platform through a discover-then-execute pattern — you call a research category, get available reports, then run the specific report you need.

- Organic Research — keywords, competitors, ranking positions for any domain

- Keyword Research — difficulty, volume, SERP analysis, related keywords

- Backlink Research — link profiles, referring domains, anchor text distribution

- Site Audit — technical SEO issues, crawl data, recommendations

- Overview Research — authority scores, traffic estimates, domain snapshots

- Tracking Research — position tracking data for monitored keywords

- Trends Research — market and competitive intelligence data

- Subdomain and Subfolder Analysis — drill down into specific parts of a domain

If you use both Ahrefs and Semrush, Claude can cross-reference data from both in a single analysis — something you can’t do natively in either tool’s interface. Ask Claude to compare Ahrefs’ keyword data against Semrush’s for the same domain and you’ll often spot opportunities that neither tool surfaces alone.

Google Docs (via Composio)

Priority: Nice to have. The Google Docs MCP lets Claude write audit reports, content briefs, and strategy documents directly to Google Docs — formatted, structured, and shareable without any copy-paste step.

- Create new documents and write content in Markdown that converts to formatted Docs

- Update existing documents — add sections, replace text, insert tables

- Search your document library to find and reference existing strategy docs

The workflow: Claude runs an audit, generates findings, and writes the final report directly to a Google Doc that you share with the client. No intermediate file, no formatting cleanup.

Google Sheets (via Composio)

Priority: Install if your workflows involve spreadsheets. Read and write spreadsheet data — essential if your keyword tracking, content calendars, or client reporting lives in Sheets.

- Read keyword lists and tracking sheets as input for Claude’s analysis

- Write audit results, keyword opportunities, and action items back to Sheets

- Update existing tracking spreadsheets with new data from GSC or Ahrefs

Notion

Priority: Install if your team uses Notion. Write audit findings, content briefs, and action items directly to Notion databases and pages. Search existing documentation for context.

Hostinger

Priority: Situational. If your site is hosted on Hostinger, this MCP lets Claude access your website content directly — useful for on-page analysis and content auditing without needing a separate crawl tool. Claude can pull page content and analyze it for SEO issues in real time.

The MCP Stack: What to Install in What Order

| Phase | MCP | What It Unlocks | Setup Time |
|---|---|---|---|
| Day 1 | Google Search Console | CTR audits, cannibalization, performance tracking, URL inspection | 10 min |
| Day 1 | Ahrefs | Keyword research, competitor analysis, backlinks, site audit, Brand Radar | 5 min |
| Week 1 | Google Sheets | Read/write keyword tracking, content calendars, client data | 5 min |
| Week 1 | Google Docs or Notion | Automated report delivery, content brief generation | 5 min |
| Week 2 | Semrush | Cross-platform data validation, trends, additional keyword data | 5 min |
| As needed | Hostinger | Direct site content access for on-page auditing | 5 min |

Total setup time for the full stack: about 35 minutes. The MCP setup guide walks through the process step-by-step. Start with GSC + Ahrefs and you’ll have enough to run every workflow in this guide.

How to Connect the Ahrefs MCP

Ahrefs offers an official MCP server that works with both Claude Desktop and Claude Code. You’ll need an Ahrefs account with API access (available on Standard plans and above).

For Claude Code, add it to your MCP settings:

claude mcp add ahrefs -- npx -y @anthropic-ai/claude-code-mcp-ahrefs

Or if you’re using Claude Desktop, add this to your claude_desktop_config.json:

    {
      "mcpServers": {
        "ahrefs": {
          "command": "npx",
          "args": ["-y", "@anthropic-ai/claude-code-mcp-ahrefs"]
        }
      }
    }

Ahrefs also surfaces natively in claude.ai for Pro and Max subscribers — check your integration settings at claude.ai to enable it. This is the fastest path if you don’t use Claude Code yet: enable the Ahrefs integration in your Claude account, and you’ll have access to the full Ahrefs API from any Claude conversation.

Once connected, verify with a quick test:

Pull the Domain Rating and top 5 organic keywords for michaelpatrickcortez.com using Ahrefs.

If you get real data back, you’re connected. The doc tool within the Ahrefs MCP provides full documentation for every endpoint. If you’re ever unsure what’s available, ask Claude to check the docs for a specific tool before running it.

How to Connect the Semrush MCP

Semrush also offers a native MCP integration available in claude.ai for Pro and Max subscribers. Enable it through your Claude account’s integration settings — no command-line setup required.

The Semrush MCP uses a three-step pattern: discovery → schema → execution. In practice, you don’t need to think about this — just ask Claude what you want and it handles the API calls. But understanding the pattern helps when you’re building skills:

# Step 1: Claude calls organic_research to discover available reports

# Step 2: Claude calls get_report_schema to understand the parameters

# Step 3: Claude calls execute_report with the right parameters

# What you actually type:

Pull the top 20 organic keywords for competitor.com from Semrush,

sorted by traffic. Include position, volume, and traffic cost.

Claude abstracts the three-step process into a single natural-language request. If you use both Ahrefs and Semrush, you can cross-validate data between them in the same conversation — ask Claude to compare keyword rankings from both sources and flag discrepancies.

How to Connect Google Search Console via Composio

GSC connects through Composio, which handles the OAuth authentication. The MCP setup guide has the full walkthrough, but the short version:

- Install the Composio MCP server for Claude Code

- Authenticate with your Google account (Composio handles the OAuth flow)

- Verify by asking Claude to list your GSC properties

The GSC MCP gives you access to the Search Analytics API, URL Inspection API, and Sitemap management — everything you need for the performance analysis and technical audit workflows below.

Step 3: Set Up Your CLAUDE.md

This is the step most people skip, and it’s the one that separates generic AI output from output calibrated to your specific site, industry, and goals. A CLAUDE.md file gives Claude persistent context about your client or project — business model, target audience, competitors, content strategy, brand voice guidelines, and any site-specific constraints.

For SEO work, your CLAUDE.md should include:

# Client: [Business Name]

## SEO Context

- Primary domain: example.com

- GSC property: sc-domain:example.com

- Target market: [geography + audience]

- Primary competitors: [3-5 competitor domains]

- Current content focus: [topic clusters / pillar pages]

- CMS: [WordPress / Shopify / custom]

- Known technical issues: [any ongoing problems]

## Content Guidelines

- Brand voice: [tone, style notes]

- Topics to avoid: [if any]

- Internal linking hub pages: [key pages to link to]

## SEO Goals

- Primary KPIs: [organic traffic / rankings / conversions]

- Current baseline: [traffic numbers, ranking positions]

- Target keywords: [priority keyword list or reference to sheet]

With this in place, every SEO workflow you run automatically accounts for your specific context. Claude won’t suggest targeting keywords your competitor already dominates unless there’s a realistic angle. It won’t recommend content structures that conflict with your CMS limitations. It won’t forget your brand voice halfway through a content brief.

7 SEO Workflows That Replace Hours of Manual Work

These are the workflows I run on client accounts regularly. Each one includes the exact prompt I use, what Claude does with it, and what the output looks like. They’re ordered from simplest (works with just GSC) to most complex (requires multiple data sources + Claude Code).

Workflow 1: The CTR Quick-Win Audit

What it does: Identifies pages that are ranking well but underperforming on click-through rate — the fastest path to more organic traffic without creating new content or building links.

Time saved: 2–3 hours → 90 seconds

What you need: GSC connection

Pull all pages from Google Search Console for [domain] over the last 90 days.

For each page: URL, impressions, clicks, CTR, average position.

Now identify pages where:

- Impressions > 500

- CTR < 2%

- Average position between 1 and 15

For each underperformer:

1. Calculate the expected CTR at that position (use standard CTR curves)

2. Calculate the click gap — how many additional clicks I'd get if CTR matched the benchmark

3. Suggest a revised title tag and meta description designed to improve CTR

Rank by click gap descending. Output as a table I can hand to a content team.

The click gap calculation is what makes this actionable rather than just informational. A page at position 4 with 0.8% CTR versus an expected 6.2% CTR represents a quantifiable opportunity: a gap of 5.4 percentage points, which at 10,000 monthly impressions is roughly 540 additional clicks. Multiply the gap by monthly impressions and you have a click value attached to each fix, and a dollar value if you know what a click is worth to the business.
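
If you want to sanity-check Claude’s numbers, the click-gap math is easy to reproduce. The sketch below is a minimal illustration, not the author’s implementation: `PageStats`, `click_gap`, and `quick_wins` are hypothetical names, and the benchmark curve is a placeholder. Only the 6.2% value at position 4 comes from the example in this post; swap in whatever CTR curve you trust.

```python
from dataclasses import dataclass

# Hypothetical benchmark CTR by rounded position. Only the position-4 value
# (6.2%) comes from the example in this post; the rest are placeholders.
BENCHMARK_CTR = {1: 0.28, 2: 0.15, 3: 0.10, 4: 0.062, 5: 0.045}
DEFAULT_CTR = 0.02  # assumed fallback for positions 6-15


@dataclass
class PageStats:
    url: str
    impressions: int
    clicks: int
    position: float


def click_gap(page: PageStats) -> float:
    """Extra clicks expected if CTR matched the benchmark for this position."""
    expected_ctr = BENCHMARK_CTR.get(round(page.position), DEFAULT_CTR)
    expected_clicks = page.impressions * expected_ctr
    return max(0.0, expected_clicks - page.clicks)


def quick_wins(pages):
    """Apply the prompt's three filters, then rank by click gap descending."""
    hits = [
        p for p in pages
        if p.impressions > 500
        and p.clicks / p.impressions < 0.02
        and 1 <= p.position <= 15
    ]
    return sorted(hits, key=click_gap, reverse=True)


pages = [
    PageStats("/pricing", impressions=10_000, clicks=80, position=4.2),
    PageStats("/blog/guide", impressions=3_000, clicks=45, position=8.0),
    PageStats("/about", impressions=200, clicks=1, position=3.0),
]

for page in quick_wins(pages):
    print(page.url, round(click_gap(page)))
```

The `/about` page drops out because it fails the impressions filter, which is the point of the threshold: a large CTR gap on a page nobody sees is not a quick win.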

I covered this workflow in detail in the content audit post — this is the expanded version with the click-gap math built in.

Workflow 2: Keyword Cannibalization Detection

What it does: Finds cases where multiple pages on your site compete for the same queries, splitting ranking authority and confusing Google about which page to surface.

Time saved: 3–4 hours → 2 minutes

What you need: GSC connection

Pull all queries from Google Search Console for [domain] over the last 90 days.

For each query, pull ALL pages that received impressions for that query.

Identify cannibalization: queries where 2 or more pages received impressions.

For each cannibalized query:

- List all competing pages with their respective clicks, impressions, CTR, and position

- Identify which page SHOULD be the primary target (highest impressions + best position)

- Flag the severity: HIGH (pages within 3 positions of each other, both getting clicks),

MEDIUM (pages within 5 positions, one getting most clicks),

LOW (secondary page getting <10% of impressions)

Group by severity. For HIGH severity cases, recommend a specific resolution:

consolidate (301 redirect + content merge), differentiate (re-angle one page toward

a distinct intent), or de-optimize (remove keyword targeting from the weaker page).

Cannibalization analysis is one of the most tedious manual SEO tasks — it requires cross-referencing hundreds of query-page combinations. Claude does it exhaustively because it doesn’t lose patience at row 150. The severity scoring is the key differentiator from basic cannibalization reports: it prioritizes the cases that are actually costing you traffic.
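
For readers who want to see the severity logic spelled out, here is a rough Python sketch of the grouping and scoring described in the prompt. It assumes GSC rows have already been exported as dictionaries with `query`, `page`, `clicks`, `impressions`, and `position` keys (that shape is my assumption), and it only compares the top two pages per query. The prompt’s three severity rules are not exhaustive, so the order of the checks and the final fallback are also assumptions.

```python
from collections import defaultdict


def severity(primary, secondary):
    """Score one query's top two competing pages using the prompt's thresholds."""
    gap = abs(primary["position"] - secondary["position"])
    total = primary["impressions"] + secondary["impressions"]
    # LOW: the secondary page gets under 10% of the combined impressions.
    if total == 0 or secondary["impressions"] / total < 0.10:
        return "LOW"
    # HIGH: within 3 positions of each other and both pages earn clicks.
    if gap <= 3 and primary["clicks"] > 0 and secondary["clicks"] > 0:
        return "HIGH"
    # MEDIUM: within 5 positions (one page is taking most of the clicks).
    if gap <= 5:
        return "MEDIUM"
    return "LOW"  # assumed fallback for cases the prompt does not cover


def find_cannibalization(rows):
    by_query = defaultdict(list)
    for row in rows:
        by_query[row["query"]].append(row)

    report = []
    for query, pages in by_query.items():
        if len(pages) < 2:
            continue  # only one page has impressions: no cannibalization
        # Primary target: highest impressions, ties broken by best position.
        ranked = sorted(pages, key=lambda r: (-r["impressions"], r["position"]))
        report.append({
            "query": query,
            "primary": ranked[0]["page"],
            "competing": [r["page"] for r in ranked[1:]],
            "severity": severity(ranked[0], ranked[1]),
        })
    order = {"HIGH": 0, "MEDIUM": 1, "LOW": 2}
    return sorted(report, key=lambda r: order[r["severity"]])


rows = [
    {"query": "claude seo", "page": "/guide", "clicks": 40, "impressions": 1000, "position": 4.0},
    {"query": "claude seo", "page": "/blog/claude-seo-tips", "clicks": 12, "impressions": 600, "position": 6.0},
    {"query": "mcp setup", "page": "/mcp", "clicks": 30, "impressions": 900, "position": 3.0},
    {"query": "mcp setup", "page": "/tools", "clicks": 0, "impressions": 50, "position": 18.0},
    {"query": "seo audit", "page": "/audit", "clicks": 10, "impressions": 400, "position": 7.0},
]

report = find_cannibalization(rows)
for item in report:
    print(item["severity"], item["query"], item["primary"], item["competing"])
```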

Workflow 3: Competitor Content Gap Analysis

What it does: Identifies keywords your competitors rank for that you don’t — filtered by relevance, difficulty, and traffic potential — and generates content recommendations for closing the gaps.

Time saved: 4–5 hours → 5 minutes

What you need: Ahrefs or Semrush MCP connection + GSC

Run a content gap analysis between [my domain] and these competitors:

- [competitor1.com]

- [competitor2.com]

- [competitor3.com]

Using Ahrefs, find keywords where at least 2 of these competitors rank in the top 20

but my domain does NOT rank in the top 50.

Filter to:

- Search volume > 200/month

- Keyword difficulty < 40

- Commercial or informational intent (exclude navigational)

For each keyword opportunity:

1. The keyword and its monthly search volume

2. Which competitors rank and at what position

3. The dominant content format in the SERP (guide, listicle, tool page, comparison)

4. A recommended content approach for my site — including suggested title,

content type, and which existing page (if any) could be expanded to target it

Group the results into topic clusters. Prioritize clusters where I already have

topical authority (existing content ranking for related terms).

The topic clustering is what elevates this above a standard Ahrefs content gap export. Raw keyword lists are overwhelming — clustered recommendations with format guidance are actionable. Claude cross-references the gaps against your existing content (via GSC data) to identify where you already have topical authority to leverage.
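
The filter at the core of this prompt can be expressed in a few lines, which is useful when you want to verify that Claude applied it correctly. This is a sketch under assumptions: the record shape (`keyword`, `volume`, `kd`, and a `positions` map of domain to rank or `None`) is hypothetical and does not mirror the Ahrefs response format, and the intent filter is left out because it needs a judgment call rather than a threshold.

```python
def content_gaps(keywords, my_domain, competitors, *, min_volume=200, max_kd=40):
    """Keywords where 2+ competitors rank in the top 20 and my domain is outside the top 50."""
    gaps = []
    for kw in keywords:
        positions = kw["positions"]
        ranking = {
            domain: positions[domain]
            for domain in competitors
            if positions.get(domain) is not None and positions[domain] <= 20
        }
        if len(ranking) < 2:
            continue
        my_position = positions.get(my_domain)
        if my_position is not None and my_position <= 50:
            continue  # already within reach; not a gap
        if kw["volume"] <= min_volume or kw["kd"] >= max_kd:
            continue
        gaps.append({
            "keyword": kw["keyword"],
            "volume": kw["volume"],
            "kd": kw["kd"],
            "competitors": ranking,
        })
    return sorted(gaps, key=lambda g: g["volume"], reverse=True)


competitors = ["competitor1.com", "competitor2.com", "competitor3.com"]
keywords = [
    {"keyword": "seo audit checklist", "volume": 1900, "kd": 32,
     "positions": {"competitor1.com": 4, "competitor2.com": 11, "example.com": None}},
    {"keyword": "technical seo tools", "volume": 2400, "kd": 55,
     "positions": {"competitor1.com": 3, "competitor2.com": 7, "competitor3.com": 9}},
    {"keyword": "schema markup generator", "volume": 800, "kd": 20,
     "positions": {"competitor1.com": 5, "competitor2.com": 40}},
    {"keyword": "internal linking guide", "volume": 600, "kd": 25,
     "positions": {"competitor1.com": 8, "competitor3.com": 2, "example.com": 18}},
    {"keyword": "gsc regex filters", "volume": 350, "kd": 12,
     "positions": {"competitor1.com": 6, "competitor2.com": 19, "example.com": 74}},
]

gaps = content_gaps(keywords, "example.com", competitors)
for gap in gaps:
    print(gap["keyword"], gap["volume"], gap["competitors"])
```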

Workflow 4: Technical SEO Audit From Crawl Data

What it does: Processes site audit / crawl data and produces a prioritized technical fix list with specific implementation guidance.

Time saved: 3–6 hours → 10 minutes

What you need: Ahrefs Site Audit or Screaming Frog export + Claude Code

I've connected the Ahrefs Site Audit MCP. Pull the current audit data for [project].

Analyze all issues and categorize them by:

1. CRITICAL — Issues affecting crawlability or indexation

(broken canonical tags, noindex on important pages, redirect chains/loops,

orphaned pages with traffic, 5xx errors)

2. HIGH — Issues affecting ranking potential

(duplicate titles/descriptions, missing H1s, slow page speed,

missing structured data on key page types, thin content pages)

3. MEDIUM — Issues worth fixing but lower impact

(image alt text, broken internal links to low-traffic pages,

non-HTTPS resources, oversized images)

4. LOW — Cleanup items

(minor HTML validation issues, empty meta descriptions on non-ranking pages)

For CRITICAL and HIGH issues:

- Provide the specific fix (not just "fix the canonical tag" but the actual

correct canonical URL or the specific code change needed)

- Estimate implementation time

- Note any dependencies (e.g., "fix redirect chain before updating internal links")

Output as a project plan I can paste into a task management system.

What makes this different from the Ahrefs Site Audit dashboard itself: Claude contextualizes the issues. A “duplicate title” on two blog category pages is different from a “duplicate title” on your two highest-traffic product pages. Claude reads the page context and adjusts severity accordingly — something the automated crawl report can’t do.
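
One way to picture that contextual adjustment: start from a base severity per issue type, then move it up or down depending on how much traffic the affected page earns. The sketch below is purely illustrative. The issue names, the base mapping, and both traffic thresholds are assumptions of mine, not Ahrefs categories or anything Claude does internally.

```python
LEVELS = ["LOW", "MEDIUM", "HIGH", "CRITICAL"]

# Hypothetical base severities, loosely following the tiers in the prompt.
BASE_SEVERITY = {
    "redirect_chain": "CRITICAL",
    "duplicate_title": "HIGH",
    "missing_h1": "HIGH",
    "missing_alt_text": "MEDIUM",
    "html_validation": "LOW",
}


def contextual_severity(issue_type, monthly_clicks, *, high_traffic=1000, low_traffic=10):
    """Raise severity one level on high-traffic pages, lower it on near-zero-traffic pages."""
    level = LEVELS.index(BASE_SEVERITY.get(issue_type, "LOW"))
    if monthly_clicks >= high_traffic:
        level = min(level + 1, len(LEVELS) - 1)
    elif monthly_clicks < low_traffic:
        level = max(level - 1, 0)
    return LEVELS[level]


# The same issue lands in different tiers depending on the page it affects.
print(contextual_severity("duplicate_title", monthly_clicks=5000))
print(contextual_severity("duplicate_title", monthly_clicks=2))
```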

Workflow 5: Content Optimization With Live SERP Data

What it does: Analyzes a specific page against its SERP competitors and generates a targeted optimization plan — not generic “add more keywords” advice, but structural and semantic improvements based on what’s actually ranking.

Time saved: 2–3 hours → 5 minutes

What you need: Ahrefs MCP + GSC + the page content

I want to optimize this page: [URL]

1. Pull the top 10 organic keywords this page ranks for from GSC (by impressions).

2. For the primary keyword, use Ahrefs to pull the SERP overview — the top 10 ranking URLs.

3. Analyze what the top 3 ranking pages cover that my page doesn't:

- Content sections/topics they include

- Content depth (estimated word count, number of sections)

- Content format differences (do they use tables, lists, videos, tools?)

- Semantic gaps — subtopics or related entities they address

4. Generate an optimization plan:

- Specific sections to add (with suggested H2/H3 headings)

- Content to expand or deepen

- Internal linking opportunities to/from this page

- Schema markup recommendations based on the content type

- Title tag and meta description rewrites if needed

Do NOT suggest stuffing keywords or making the content longer for length's sake.

Only recommend additions that genuinely improve the page's value for the searcher.

The explicit instruction to avoid keyword stuffing and length padding matters. Without it, AI tools default to “make it longer and add more keywords” — which is the opposite of what Google’s helpful content updates reward. This prompt forces Claude to focus on genuine content gaps, not volumetric optimization.

Workflow 6: Internal Linking Audit and Optimization

What it does: Maps your site’s internal link structure, identifies orphaned pages, surfaces strategic linking opportunities, and generates the actual link placements.

Time saved: 4–6 hours → 8 minutes

What you need: Ahrefs Site Audit MCP or crawl data + GSC

Analyze the internal linking structure for [domain].

1. Pull internal link data from the site audit — pages by number of internal links

pointing to them.

2. Cross-reference with GSC performance data. Identify:

a. HIGH-TRAFFIC pages with FEW internal links (under-linked important pages)

b. MANY-LINKS pages with LOW traffic (over-linked unimportant pages)

c. Pages with ZERO internal links (orphaned content)

d. Your top 10 pages by organic traffic — how many internal links point to each?

3. For each under-linked important page:

- Identify 5-10 existing pages that could naturally link to it

- Suggest the specific anchor text and the paragraph where the link should be inserted

- Prioritize contextually relevant links over forced placements

4. Identify topic clusters where internal linking could create stronger

topical authority signals. Map hub pages to supporting content.

Output as two deliverables:

- A prioritized linking action list (specific from-page → to-page → anchor text)

- A topic cluster map showing hub-and-spoke relationships

Internal linking is the most underutilized lever in SEO — partly because the manual analysis is so tedious that most SEOs do it once, implement a fraction of the recommendations, and never revisit. Claude makes it repeatable. Run this monthly and you’ll continuously surface new linking opportunities as you publish new content.
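
Step 2 of the prompt boils down to joining two datasets and bucketing pages. Here is a minimal sketch of that join, assuming you already have inbound internal link counts and GSC clicks per URL in one dictionary. The function name and every threshold are hypothetical; tune them to the size of your site.

```python
def classify_pages(pages, *, high_traffic=500, low_traffic=20, few_links=3, many_links=20):
    """Bucket pages by inbound internal links versus organic clicks.

    `pages` maps URL -> {"inlinks": int, "clicks": int}.
    """
    buckets = {"orphaned": [], "under_linked": [], "over_linked": []}
    for url, stats in pages.items():
        inlinks, clicks = stats["inlinks"], stats["clicks"]
        if inlinks == 0:
            buckets["orphaned"].append(url)
        elif clicks >= high_traffic and inlinks < few_links:
            buckets["under_linked"].append(url)
        elif clicks <= low_traffic and inlinks >= many_links:
            buckets["over_linked"].append(url)
    # Highest-traffic pages first, so the most valuable fixes come first.
    for urls in buckets.values():
        urls.sort(key=lambda u: pages[u]["clicks"], reverse=True)
    return buckets


pages = {
    "/pricing": {"inlinks": 2, "clicks": 1800},
    "/blog/old-announcement": {"inlinks": 45, "clicks": 5},
    "/guides/schema": {"inlinks": 0, "clicks": 120},
    "/blog/claude-seo": {"inlinks": 12, "clicks": 900},
}

report = classify_pages(pages)
for bucket, urls in report.items():
    print(bucket, urls)
```

Note that an orphaned page is reported only as orphaned, even if it also has high traffic. That is a design choice in this sketch: zero links is the more urgent finding.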

Workflow 7: Schema Markup Generation at Scale

What it does: Analyzes page content and generates accurate, validated JSON-LD schema markup for every applicable page type — articles, FAQs, products, local business, how-to, and more.

Time saved: 1–2 hours per page → 30 seconds per page

What you need: Claude Code (for file read/write)

Read the content of [URL or file path] and generate the appropriate JSON-LD

structured data markup.

Rules:

- Only include schema types that genuinely match the page content

- For Article pages: include headline, author, datePublished, dateModified, image, publisher

- For FAQ content: generate FAQPage schema with all Q&A pairs found on the page

- For How-To content: generate HowTo schema with proper step structure

- For product pages: include Product schema with offers, ratings if present

- For local business pages: generate LocalBusiness with address, hours, phone

Validate the output against schema.org specifications.

Flag any fields where you're making assumptions vs. extracting from actual page content.

Output the complete JSON-LD script tag ready to paste into the page head.

The validation step is critical. AI-generated schema that includes fabricated data (like a star rating that doesn’t exist on the page) violates Google’s structured data guidelines and can trigger a manual action that strips the page’s rich results. The prompt explicitly asks Claude to distinguish between extracted facts and assumptions. If you want to go deeper on schema implementation, the skills post includes a reusable schema generation skill you can build once and run on any page.
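
The “extract, don’t invent” rule is simple to enforce in code as well. The sketch below builds Article JSON-LD from a dictionary of fields pulled from the page and reports anything missing instead of filling it in. `article_jsonld` and the input shape are hypothetical; the field list is the one from the prompt, and treating the author as a `Person` and the publisher as an `Organization` is an assumption that will not fit every site.

```python
import json

# Fields the prompt asks for on Article pages.
ARTICLE_FIELDS = ["headline", "author", "datePublished", "dateModified", "image", "publisher"]


def article_jsonld(extracted):
    """Return (script_tag, missing_fields). Missing fields are omitted, never guessed."""
    data = {"@context": "https://schema.org", "@type": "Article"}
    missing = []
    for field in ARTICLE_FIELDS:
        value = extracted.get(field)
        if not value:
            missing.append(field)
        elif field == "author":
            data[field] = {"@type": "Person", "name": value}
        elif field == "publisher":
            data[field] = {"@type": "Organization", "name": value}
        else:
            data[field] = value
    # Escape "</" so page text can never close the script tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    script = '<script type="application/ld+json">\n' + payload + "\n</script>"
    return script, missing


extracted = {
    "headline": "Claude for SEO",
    "author": "Jane Doe",
    "datePublished": "2026-01-15",
    "publisher": "Example Media",
}
script, missing = article_jsonld(extracted)
print(script)
print("Needs manual input:", missing)
```

Whatever lands in `missing` goes back to a human, which is the same split the prompt asks Claude to make between extracted facts and assumptions.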

Building Reusable SEO Skills: Run Complex Workflows With One Command

Running these prompts manually works, but it’s not scalable. If you’re running the same audit across 10 client accounts every month, you need automation. Claude Code skills let you package any workflow into a single slash command.

Here’s how an SEO audit skill works in practice:

# Save as: ~/.claude/commands/full-seo-audit.md (personal slash command; a packaged skill lives at ~/.claude/skills/full-seo-audit/SKILL.md)

Run a comprehensive SEO audit for the current client using the CLAUDE.md context.

## Phase 1: Performance Baseline

Pull 90 days of GSC data. Identify top 50 pages by impressions.

Compare to prior 90-day period. Flag pages with >25% traffic decline.

## Phase 2: Quick Wins

Find CTR underperformers (position 1-15, CTR below position benchmarks).

Calculate click gap for each. Generate revised title tags.

## Phase 3: Cannibalization

Identify queries with multiple ranking pages. Score severity.

Recommend resolution for HIGH severity cases.

## Phase 4: Technical Issues

Pull Ahrefs site audit data. Categorize by CRITICAL/HIGH/MEDIUM/LOW.

Provide specific fixes for CRITICAL and HIGH.

## Phase 5: Content Opportunities

Run content gap analysis against competitors listed in CLAUDE.md.

Filter to KD<40, volume>200. Cluster by topic.

## Output

Save full audit report to ./seo-audit-[date].md

Save action items to ./seo-actions-[date].md

Notify when complete.

Now type /full-seo-audit in any client project directory and Claude runs all five phases automatically. The entire audit — which would take a senior SEO 6–8 hours — runs in about 10–15 minutes with live data and produces two deliverable documents.
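
Phase 1 is the piece most worth spot-checking by hand, because every later phase builds on it. The period-over-period comparison amounts to the following sketch, assuming two dictionaries of clicks per URL for the current and prior 90-day windows. The function name and data shape are hypothetical.

```python
def flag_declines(current, previous, threshold=0.25):
    """Return (url, percent_change) for pages whose clicks fell by more than `threshold`."""
    flagged = []
    for url, prev_clicks in previous.items():
        if prev_clicks == 0:
            continue  # no baseline, so a percentage change is undefined
        change = (current.get(url, 0) - prev_clicks) / prev_clicks
        if change < -threshold:
            flagged.append((url, round(change * 100, 1)))
    # Steepest declines first.
    return sorted(flagged, key=lambda item: item[1])


previous = {"/pricing": 1000, "/blog/guide": 400, "/new-page": 0, "/retired": 200}
current = {"/pricing": 700, "/blog/guide": 390, "/new-page": 10}

declines = flag_declines(current, previous)
for url, pct in declines:
    print(url, pct)
```

Pages missing from the current window count as zero clicks, so a URL that disappeared entirely shows up as a 100% decline rather than being silently skipped.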

You can build skills for any repeatable SEO workflow: content briefs, monthly reporting, competitor monitoring, backlink outreach research. The skills guide walks through the full build process.

Where Claude Gets SEO Wrong (And How to Guard Against It)

Claude is not infallible, and if you treat it as an oracle you will make expensive mistakes. Here’s where I’ve seen it fail — and the guardrails I’ve built into my workflows.

1. Keyword Difficulty Misestimation

Claude can analyze keyword data from Ahrefs or Semrush, but it sometimes underestimates the difficulty of ranking for a keyword when the SERP is dominated by high-authority domains. It sees KD 25 and says “go for it” without weighing that all top 10 results are from DR 80+ sites. Guardrail: Always have Claude pull the top 10 URLs for a keyword and report their Domain Rating alongside the KD score. If every result is DR 70+ and your site is DR 30, that KD 25 is effectively a KD 65 for you.
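
That guardrail can be reduced to a small check you run on every shortlisted keyword. This is a sketch, not a ranking model: the function names, the use of the median, and both thresholds (`max_kd`, `max_dr_gap`) are assumptions chosen for illustration.

```python
from statistics import median


def authority_gap(serp_domain_ratings, site_dr):
    """How far the typical top-10 result's Domain Rating sits above your site's."""
    return median(serp_domain_ratings) - site_dr


def worth_targeting(kd, serp_domain_ratings, site_dr, *, max_kd=40, max_dr_gap=20):
    """Require both a low KD score and a closable authority gap."""
    return kd < max_kd and authority_gap(serp_domain_ratings, site_dr) <= max_dr_gap


# KD 25 looks easy, but every result on this SERP is a DR 70+ site.
serp = [91, 88, 85, 84, 82, 80, 79, 77, 74, 72]
print(authority_gap(serp, site_dr=30))
print(worth_targeting(25, serp, site_dr=30))
```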

2. Outdated SEO Advice

Claude’s training data has a cutoff, and SEO evolves constantly. It may recommend practices that were standard 18 months ago but have since been devalued or penalized. Examples: exact-match anchor text ratios, meta keyword tags (still occasionally suggested), and certain link-building tactics that Google’s spam updates have since targeted. Guardrail: For any strategic recommendation, ask Claude to cite when that practice was last validated by a Google update or official guidance. If it can’t, verify independently.

3. Hallucinated Metrics

When Claude doesn’t have access to live data (no MCP connection), it will sometimes fabricate plausible-looking search volumes, difficulty scores, or traffic estimates. It’s confidently wrong — the numbers look real but are invented. Guardrail: Never trust metrics that don’t come from a live data source. If Claude reports a search volume, it should be pulling from Ahrefs or GSC via MCP, not generating from memory. This is why the MCP setup step isn’t optional — it’s the difference between data-driven analysis and plausible fiction.

4. Content That Sounds Good but Adds Nothing

Claude can generate perfectly fluent, well-structured content that reads well and says absolutely nothing new. Google’s helpful content system explicitly devalues content that “doesn’t provide substantial value compared to other pages in search results.” If you let Claude write your content on autopilot, you’ll produce exactly the kind of content that system penalizes. Guardrail: Use Claude for research, analysis, outlines, and optimization — not for writing the final draft verbatim. The best workflow is Claude generates the structure and data, you supply the expertise and originality.

5. Over-Optimization

Ask Claude to “optimize this page for [keyword]” without constraints and it will increase keyword density, add the keyword to every heading, and stuff it into the meta description twice. This was fine in 2015. It triggers spam signals in 2026. Guardrail: Frame optimization prompts around the searcher’s intent, not the keyword itself. “Make this page the best possible answer for someone searching [keyword]” produces dramatically better results than “optimize this page for [keyword].”
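If you want a quick sanity check on a page after Claude has edited it, counting where the keyword landed is enough to catch the obvious stuffing patterns described above. The sketch below is a rough illustration, assuming you pass in the headings, meta description, and body as plain strings; the function name and the returned fields are hypothetical, and it sets no pass/fail thresholds because none are given here.

```python
import re


def stuffing_report(keyword, headings, meta_description, body):
    """Count exact-phrase keyword occurrences in the places stuffing shows up."""
    pattern = re.compile(r"\b" + re.escape(keyword) + r"\b", re.IGNORECASE)
    words = re.findall(r"\w+", body)
    body_hits = len(pattern.findall(body))
    return {
        "headings_with_keyword": sum(1 for h in headings if pattern.search(h)),
        "total_headings": len(headings),
        "meta_description_hits": len(pattern.findall(meta_description)),
        # Rough density figure: exact-phrase hits per 100 body words.
        "body_hits_per_100_words": round(100 * body_hits / len(words), 2) if words else 0.0,
    }


report = stuffing_report(
    keyword="running shoes",
    headings=["Best Running Shoes", "How to Choose", "Running Shoes FAQ"],
    meta_description="Running shoes guide: find running shoes that fit.",
    body="These running shoes are light. Pick shoes by fit.",
)
print(report)
```

A keyword in every heading or repeated in the meta description is a cue to reread the page as a searcher would, not a verdict in itself.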

6. Newer Models Can Be Worse at SEO Tasks

This one surprises people. Search Engine Land’s benchmarking found that newer flagship models — optimized for deep reasoning and agentic workflows — actually showed accuracy drops on standard SEO tasks compared to previous versions. The cause: they overthink simple tasks and hallucinate complexity where straightforward analysis is what’s needed. Guardrail: Use Sonnet for routine SEO work (audits, data analysis, content optimization). Reserve Opus for genuinely complex strategic analysis. And use a constrained CLAUDE.md with explicit instructions to keep analysis grounded in the data rather than speculating beyond it.

The Co-Pilot Framing

The single most common mistake I see from SEOs adopting Claude: treating it as autopilot instead of co-pilot. Claude is an analyst that works alongside your expertise — it processes the data, surfaces the patterns, generates the deliverables. You provide the strategic judgment, the client context, the “this metric looks wrong even though the data says it’s fine” intuition that comes from years of practice. The SEOs getting the most value from Claude are doing more strategic work, not less. They’ve just automated the commodity analysis that used to eat 60–70% of their week.

Using Claude to Get Cited BY AI: The GEO Angle

Here’s an angle almost nobody is covering: you can use Claude as your SEO optimization tool AND use it to optimize your content so it gets cited by Claude itself, along with Perplexity, Google AI Overviews, and other AI search interfaces.

AI-referred search sessions are up over 500% year-over-year. Claude is now integrated into Safari. When someone asks Claude or Perplexity “what’s the best tool for X,” the AI doesn’t show ten blue links — it names names. If you’re not in that answer, you don’t exist in that conversation. This is Generative Engine Optimization (GEO), and it’s running in parallel with traditional SEO.

What makes this a dual-purpose opportunity: you can use Claude to optimize your content for AI citation while simultaneously using Claude for your traditional SEO workflows. Here’s a workflow I’ve started running for clients:

Analyze [URL] for AI citation readiness. Evaluate:

1. Are key claims stated as clear, declarative sentences that an AI could extract as a direct answer? (AI models prefer scannable, factual statements over buried conclusions)

2. Does the page clearly associate [brand] with [category] in multiple places? (LLMs learn category associations from repeated, consistent positioning)

3. Are there FAQ sections with explicit question-answer pairs? (These are the easiest content format for AI to extract and cite)

4. Is the content structured with clear headings that match common AI query patterns? (e.g., "What is X," "How does X compare to Y," "Best X for [use case]")

5. Does the page include unique data, original research, or practitioner insights that would make it a preferred citation source? (50% of content cited in AI responses is less than 13 weeks old; recency and originality matter)

Generate a GEO optimization checklist with specific changes to improve this page's likelihood of being cited in AI-generated responses.

Measuring AI Visibility: Ahrefs Brand Radar via MCP

Here’s where the MCP stack pays off for GEO specifically. Ahrefs Brand Radar — accessible directly through Claude via MCP — tracks how AI models mention your brand. This is the measurement layer most GEO guides are missing.

Using Ahrefs Brand Radar, pull the current AI visibility data for [brand/domain]:

1. Share of voice overview: how do my AI mentions compare to [competitor 1], [competitor 2], and [competitor 3]?

2. Which of my pages are being cited in AI responses? Pull cited pages data.

3. What domains are being cited most in my category? Pull cited domains.

4. Show me my mentions history over the last 3 months. Are we trending up or down?

5. Pull the actual AI responses where my brand appears. What prompts are triggering mentions?

Compare my share of voice against competitors. Identify the prompts and topics where competitors are being cited but I'm not. Those are my GEO content gaps.

This creates a closed loop: use Claude to optimize your content for AI citation, then use Claude + Brand Radar to measure whether it worked. Run this monthly alongside your traditional SEO reporting and you’ll have a complete picture of visibility across both search and AI channels.

The key insight: content optimized for AI citation also tends to perform well in traditional search. Clear structure, declarative statements, FAQ formatting, and genuine expertise — these are the same signals that Google’s helpful content system rewards. GEO and SEO are converging, not competing.

Claude vs. Dedicated SEO Tools: What Replaces What

Claude doesn’t replace Ahrefs. It doesn’t replace Semrush. It doesn’t replace Screaming Frog. Here’s the honest breakdown of where Claude adds value and where dedicated tools remain essential.

| Task | Dedicated SEO Tool | Claude | Winner |
|---|---|---|---|
| Keyword research (discovery) | Ahrefs / Semrush: massive databases, related terms, SERP features | Can query Ahrefs via MCP, but doesn’t add discovery value | Dedicated tool |
| Keyword research (analysis + prioritization) | Manual: export, filter, score in spreadsheet | Instant analysis, competitive context, opportunity scoring | Claude |
| Site crawling | Screaming Frog / Ahrefs Site Audit: purpose-built crawlers | Can’t crawl websites | Dedicated tool |
| Crawl data analysis | Manual review of crawl reports | Contextual prioritization, specific fix recommendations | Claude |
| Backlink analysis | Ahrefs / Semrush: link databases, DR scores, anchor profiles | Can query via MCP, adds contextual analysis | Both (tool for data, Claude for analysis) |
| Content optimization | Surfer / Clearscope: NLP-based content scoring | SERP-aware optimization, structural gaps, semantic analysis | Claude (more nuanced) |
| Rank tracking | Ahrefs / Semrush / dedicated trackers: daily automated tracking with alerts | Can pull Rank Tracker data via Ahrefs MCP + competitor comparisons, but no automated daily alerts | Both (tool for daily monitoring, Claude for analysis + competitor context) |
| Schema markup generation | Generators produce basic markup | Context-aware, handles complex nested schema, validates | Claude |
| Monthly reporting | Data Studio / Looker: dashboards with auto-refresh | Narrative reports with insights, not just numbers | Both (dashboards + Claude for narrative) |
| Cannibalization detection | Manual spreadsheet work or limited tool features | Exhaustive analysis with severity scoring and fix recommendations | Claude |
| AI visibility / GEO monitoring | Ahrefs Brand Radar: tracks AI citations, SOV, mentions | Can pull Brand Radar data via MCP + generate optimization recommendations | Both (Brand Radar for tracking, Claude for analysis + content optimization) |

The pattern: dedicated tools are better at data collection (crawling, indexing, tracking). Claude is better at data interpretation (analysis, prioritization, recommendations, implementation). The highest-leverage setup uses both — tools to generate the data, Claude to extract the insights and produce actionable deliverables.

Advanced: Automated SEO Monitoring With Claude

Once your workflows are proven, you can automate ongoing monitoring. The loop command lets you run Claude on a recurring interval — useful for catching traffic drops, indexation issues, or competitor movements before they become problems.

Example: a daily GSC health check that flags any page with a >20% traffic drop in the last 7 days:

/loop 24h Check GSC data for [domain] for the last 7 days vs prior 7 days. Flag any page where clicks dropped more than 20%. For each flagged page, check if the position changed (ranking drop vs CTR drop) and note the likely cause. Save a brief summary to ./monitoring/daily-check-[date].md
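The comparison that prompt asks for is simple enough to spell out, which helps when you want to verify what Claude flagged. Below is a minimal Python sketch, assuming the two 7-day periods have already been exported as dictionaries keyed by page URL; the function name, the data shape, and the one-position tolerance used to separate a ranking drop from a CTR drop are all illustrative assumptions, not part of GSC or Claude Code.

```python
def flag_traffic_drops(current, previous, drop_threshold=0.20, position_tolerance=1.0):
    """Compare two 7-day snapshots and flag pages whose clicks fell.

    current / previous -- dicts keyed by page URL; each value is a dict with
    'clicks' (int) and 'position' (average position, lower is better).
    """
    flagged = []
    for page, before in previous.items():
        if before["clicks"] == 0:
            continue  # no baseline to measure a drop against
        after = current.get(page, {"clicks": 0, "position": None})
        drop = (before["clicks"] - after["clicks"]) / before["clicks"]
        if drop <= drop_threshold:
            continue  # only flag drops strictly greater than the threshold
        if after["position"] is None:
            cause = "page missing from current period"
        elif after["position"] - before["position"] > position_tolerance:
            cause = "ranking drop"
        else:
            cause = "CTR drop (position roughly stable)"
        flagged.append({"page": page, "drop_pct": round(drop * 100, 1), "likely_cause": cause})
    # Largest drops first so the summary leads with the worst pages.
    return sorted(flagged, key=lambda row: row["drop_pct"], reverse=True)


previous = {
    "/a": {"clicks": 100, "position": 3.0},
    "/b": {"clicks": 200, "position": 5.0},
    "/c": {"clicks": 50, "position": 8.0},
}
current = {
    "/a": {"clicks": 60, "position": 7.5},
    "/b": {"clicks": 150, "position": 5.2},
    "/c": {"clicks": 48, "position": 8.1},
}
for row in flag_traffic_drops(current, previous):
    print(row)
```

In this made-up data, `/a` is flagged as a ranking drop and `/b` as a CTR drop, while `/c` stays under the 20% threshold.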

For agency teams managing multiple accounts, you can build automated reporting workflows that pull data across all clients, generate narrative summaries, and push them to Notion or Google Docs on a schedule. The reporting post covers the full setup.

The Workflow Stack: Putting It All Together

Here’s how all these pieces fit into a monthly SEO workflow for a client account:

Week 1: Audit

- Run /full-seo-audit to generate the comprehensive audit

- Review Claude’s findings: verify the critical issues, adjust priorities

- Push the action plan to Notion or your project management tool

Week 2: Content

- Run the content gap analysis against updated competitor data

- Generate content briefs for the top 3–5 opportunities

- Run content optimization on 2–3 existing underperforming pages

Week 3: Technical

- Address critical and high-priority technical issues from the audit

- Generate and implement schema markup for key pages

- Run the internal linking workflow to support new and updated content

Week 4: Report

- Run the automated reporting workflow

- Claude generates the narrative report comparing this period to last

- Review, add your strategic commentary, and deliver to the client

Ongoing: Daily monitoring via /loop catches issues between audit cycles.

This entire monthly workflow — which used to consume 20–30 billable hours — now takes 6–8 hours, most of which is strategic review and implementation rather than data extraction and formatting.

Frequently Asked Questions

Can Claude replace an SEO specialist entirely?

No, and that framing misses the point. Claude replaces the manual labor of SEO — the data pulling, spreadsheet formatting, pattern-finding, and report generation that consumes 60–70% of most SEO professionals’ time. What it cannot replace: strategic judgment about which opportunities to prioritize, understanding of business context that affects SEO decisions, relationships with clients and stakeholders, and the experience to know when a metric looks wrong even if the data says otherwise. The SEOs who are thriving with Claude are doing more strategic work, not less — they’ve just automated the commodity analysis that used to eat their week.

Is Claude better than ChatGPT for SEO?

For analysis-heavy SEO work, yes — and the reason is structural, not just model quality. Claude’s 200K token context window means it can process an entire site audit in a single conversation. Claude Code provides file system access and code execution that ChatGPT cannot match. MCP gives Claude live connections to SEO tools. ChatGPT has strengths in content ideation and has its own ecosystem of plugins, but for the technical, data-intensive side of SEO — audits, cannibalization detection, technical analysis, reporting — Claude’s infrastructure advantages are significant. For content writing specifically, both models produce similar quality and both require human editorial oversight.

Does Claude have access to real-time search data?

Not inherently. Claude’s base model works from training data, not live search results. But with MCP connections to Google Search Console, Ahrefs, and Semrush, Claude accesses real, current data from those platforms. The distinction matters: Claude connected to GSC via MCP is working with your actual performance data from the last 24 hours. Claude without MCP is working from memory and will hallucinate metrics. For any data-dependent SEO work, the MCP setup isn’t optional — it’s a prerequisite for reliable output.

How much does using Claude for SEO actually cost?

Claude Pro costs $20/month and includes generous usage of Claude Sonnet for daily work. Claude Code is included with your Pro or Max subscription. The MCP connections to GSC (via Composio) have free tiers that cover most individual and small agency usage. Ahrefs and Semrush require their own paid subscriptions — Claude connects to these tools but doesn’t replace the underlying data access. Total incremental cost for an SEO who already has Ahrefs or Semrush: $20/month for Claude Pro. The time savings — conservatively 15–20 hours per month per client — make the ROI calculation straightforward.

Will Google penalize AI-assisted SEO work?

Google has explicitly stated that AI-generated content is not inherently against their guidelines — what matters is quality and helpfulness, not how the content was produced. Using Claude for analysis, audits, technical recommendations, and content optimization is no different from using any other tool. The risk comes from using Claude to mass-produce low-value content at scale, which triggers Google’s helpful content and spam systems regardless of whether it was written by AI or humans. Use Claude to make your existing SEO work faster and more thorough, not to flood the index with more pages.

What’s the best Claude model for SEO tasks?

Claude Sonnet for 90% of SEO work — it’s fast, capable, and handles data analysis, content optimization, and technical audits with ease. Use Claude Opus for complex strategic analysis: multi-competitor gap analysis, full-site content strategy development, or diagnosing ranking drops that involve multiple interacting factors. Haiku is useful for high-volume, simple tasks like generating meta descriptions or title tag variations for 100+ pages. The Google Ads guide covers model selection in more detail — the same principles apply to SEO workflows.

Can I use Claude for local SEO?

Yes, and it’s particularly effective for local SEO tasks that involve repetitive, structured work: generating LocalBusiness schema markup, optimizing Google Business Profile descriptions, building location page content that avoids thin-content penalties, analyzing local pack competitors, and auditing NAP consistency across citations. The schema markup workflow above handles local business schema natively. For multi-location businesses, Claude Code can generate location-specific content and schema at scale while maintaining unique, locally relevant content for each page — something that’s notoriously tedious to do manually.
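For a sense of what the per-location schema output looks like, here is a minimal Python sketch that builds LocalBusiness JSON-LD from schema.org’s `LocalBusiness` and `PostalAddress` types. The function name and the sample business details are placeholders for illustration, not the output of the schema workflow above, and a real page would usually carry more properties (opening hours, geo coordinates) than this sketch includes.

```python
import json


def local_business_jsonld(name, street, city, region, postal_code, country, phone, url):
    """Build a schema.org LocalBusiness JSON-LD string for one location."""
    data = {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "url": url,
        "telephone": phone,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressRegion": region,
            "postalCode": postal_code,
            "addressCountry": country,
        },
    }
    return json.dumps(data, indent=2)


# Placeholder values; swap in one row per location from your own data.
markup = local_business_jsonld(
    name="Example Dental Austin",
    street="123 Example St",
    city="Austin",
    region="TX",
    postal_code="78701",
    country="US",
    phone="+1-512-555-0100",
    url="https://example.com/locations/austin",
)
print(markup)
```

The printed JSON goes inside a `<script type="application/ld+json">` tag on the location page. Keeping name, address, and phone in one data source and generating the markup from it also helps with NAP consistency.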

Start Here — Your First 30 Minutes

If this guide feels like a lot, here’s the minimum viable setup:

- Install Claude Code — follow this guide, takes 10 minutes

- Connect Google Search Console — follow this guide, takes 15 minutes

- Run the CTR Quick-Win Audit — Workflow 1 above, takes 90 seconds

- Act on the top result — rewrite the title tag and meta description for your highest click-gap page

That’s 30 minutes to a tangible SEO improvement on your site. Everything else in this guide builds from there.

If you want the full system — reusable skills, automated monitoring, multi-client reporting — work through the workflows one at a time and package each one as a skill once you’ve validated it on a real account. Don’t automate something you haven’t run manually first.

For the complete Claude Code workflow toolkit — including pre-built skills, CLAUDE.md templates for SEO clients, and the full MCP configuration — The AI Marketing Stack packages everything covered in this series into a ready-to-use system.