The Problem with Manual Checking

Marketing campaigns don’t fail all at once. They degrade. A keyword position slips from 3 to 9 over a week. A Meta ad’s frequency crosses 4.5 and CTR starts falling. A competitor quietly updates their pricing page and starts outranking you on branded terms. An automated email sequence hits a deliverability issue on day three of a five-day drip.

By the time you catch any of this manually, you’ve already lost impressions, wasted budget, or fallen behind. The problem isn’t that you don’t know what to watch — it’s that no one has time to check everything, every day, across every client.

That’s what the /loop command in Claude Code is built for. It runs any monitoring command on a repeating interval, automatically, while you work on everything else. This post shows you how to build marketing monitoring workflows that run in the background and alert you to problems the moment they appear.

What the /loop Command Actually Does

The /loop command is a built-in Claude Code skill that re-runs a prompt or command on a set interval. The syntax is:

/loop [interval] [command or prompt]

Examples:

/loop 30m /rank-check

/loop 1h Check if any tracked keywords dropped more than 3 positions since last check

/loop 15m /ad-performance-monitor

If you don’t specify an interval, it defaults to 10 minutes. The loop runs until you stop it with Ctrl+C or until you close the session. Each iteration executes the full command: pulling live data, running analysis, and surfacing anything that needs your attention.

The key is what you pair it with. A /loop on its own just repeats a prompt. Paired with MCP tools connected to your data sources, it becomes a live monitoring system that checks real numbers every cycle.

Marketing Use Cases That Actually Work

Before building anything, understand what /loop is good at and what it isn’t. It’s ideal for:

- Threshold monitoring: “Alert me if X drops below Y or rises above Z”

- Change detection: “Flag anything that changed more than 10% since last cycle”

- Anomaly catching: “Is anything behaving unusually compared to the 7-day baseline?”

- Competitive watching: “Has the competitor’s ranking for this keyword changed?”

It’s less useful for tasks requiring deep judgment or multi-step data pulls that take longer than the interval. Match the interval to the monitoring task: checking rankings every 4 hours makes sense; checking them every 5 minutes is noise.

Use Case 1: Keyword Rank Monitoring

Create a skill at ~/.claude/skills/rank-watch.md:

# Rank Watch — Continuous Keyword Monitoring

When invoked, do the following:

1. Pull current rank tracker data from Ahrefs for the project defined in CLAUDE.md

2. Compare current positions to the positions from the last run (stored in ~/monitoring/rank-baseline.json)

3. Flag any keyword that has:

- Dropped 3 or more positions

- Dropped out of the top 10

- Moved into the top 3 (positive alert)

4. If no changes meet the threshold, output: "No significant rank changes — [timestamp]"

5. If changes are detected, output a brief table: Keyword | Old Rank | New Rank | Change

6. Update ~/monitoring/rank-baseline.json with the current positions

7. Do not repeat the full keyword list every cycle — only report changes

Then run:

/loop 4h /rank-watch

Every four hours, Claude pulls live Ahrefs data, compares it to the stored baseline, and tells you only what changed. No noise. No scrolling through 50 keywords to find the two that moved.
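To make the comparison in steps 2 and 3 of the skill concrete, here is a minimal Python sketch of the same logic. It is an illustration only, not how Claude runs the skill: the function name `rank_changes` and the dictionary shapes are assumptions, with keywords mapped to positions the way the baseline file stores them.

```python
DROP_THRESHOLD = 3  # positions; mirrors the skill's "dropped 3 or more" rule


def rank_changes(baseline: dict, current: dict) -> list:
    """Return only keywords whose movement meets one of the alert rules.

    Both arguments map keyword -> position (hypothetical shape).
    """
    flagged = []
    for keyword, new_rank in current.items():
        old_rank = baseline.get(keyword)
        if old_rank is None:
            continue  # keyword not in the baseline yet, nothing to compare
        reasons = []
        if new_rank - old_rank >= DROP_THRESHOLD:
            reasons.append("dropped 3+ positions")
        if old_rank <= 10 < new_rank:
            reasons.append("left top 10")
        if new_rank <= 3 < old_rank:
            reasons.append("entered top 3")
        if reasons:
            flagged.append({
                "keyword": keyword,
                "old": old_rank,
                "new": new_rank,
                "change": old_rank - new_rank,  # negative means the rank got worse
                "reasons": reasons,
            })
    return flagged
```

An empty list corresponds to the "No significant rank changes" output; anything else becomes the Keyword | Old Rank | New Rank | Change table.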

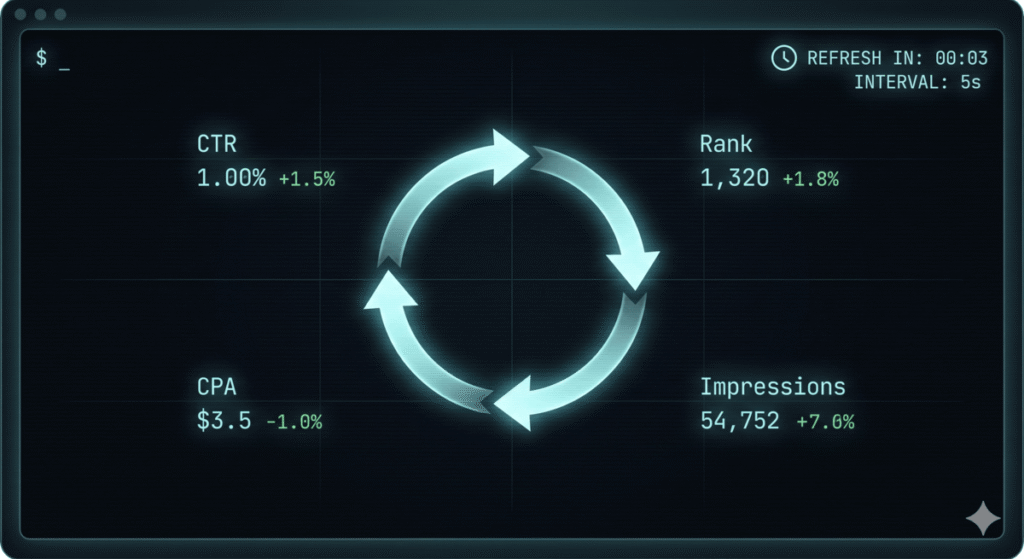

Use Case 2: Ad Performance Drift Detection

This one is especially valuable for paid media. Campaign performance drifts in ways that don’t trigger automated rules — a slow CTR decline, a CPC creep, frequency building up on evergreen creative. Create ~/.claude/skills/ad-monitor.md:

# Ad Performance Monitor

When invoked:

1. Ask the user to paste in the current performance export from Google Ads or Meta Ads

(or pull via MCP if connected)

2. Load the baseline from ~/monitoring/ad-baseline.json

3. Calculate changes for each active campaign:

- CTR change vs. 3-day average

- CPA change vs. 7-day average

- Frequency (Meta) vs. yesterday

- Impression share vs. last week (Google)

4. Flag any campaign where:

- CTR has dropped more than 15% from 3-day average

- CPA has risen more than 20% from 7-day average

- Meta frequency exceeds 4.0

- Impression share dropped more than 10 points

5. Output only flagged campaigns with a one-line diagnosis

6. Update baseline with today's numbers

The threshold values are suggestions — adjust them to match your clients’ typical variance. A campaign with high natural CTR fluctuation needs wider thresholds than a stable branded search campaign.
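As a rough illustration of how those four flag rules translate into arithmetic, the sketch below applies them to a single campaign. The field names (`ctr_3d_avg`, `cpa_7d_avg`, `impression_share_last_week`, and so on) are hypothetical and do not come from the Google Ads or Meta export formats; the thresholds are the suggested defaults from the skill.

```python
def pct_change(current: float, baseline: float) -> float:
    """Percentage change from baseline; returns 0.0 when there is no baseline."""
    if baseline == 0:
        return 0.0
    return (current - baseline) / baseline * 100.0


def flag_campaign(today: dict, baseline: dict) -> list:
    """Apply the skill's suggested thresholds to one campaign (field names are assumed)."""
    flags = []
    if pct_change(today["ctr"], baseline["ctr_3d_avg"]) < -15:
        flags.append("CTR down more than 15% vs 3-day average")
    if pct_change(today["cpa"], baseline["cpa_7d_avg"]) > 20:
        flags.append("CPA up more than 20% vs 7-day average")
    frequency = today.get("frequency")  # Meta only
    if frequency is not None and frequency > 4.0:
        flags.append("Meta frequency above 4.0")
    share = today.get("impression_share")  # Google only, in percentage points
    if share is not None and baseline["impression_share_last_week"] - share > 10:
        flags.append("Impression share down more than 10 points")
    return flags
```

Keeping the thresholds as literals in one function makes them easy to widen for a campaign with naturally noisy CTR.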

Use Case 3: GSC Click Anomaly Detection

One of the best uses of /loop for SEOs is catching organic traffic anomalies before they become a crisis. A page dropping 40% in clicks in a day is a fire. Catching it on day one is a quick fix. Catching it on day eight means you’ve already lost a week of traffic.

# GSC Anomaly Monitor

When invoked:

1. Pull the last 7 days of GSC data for the property defined in CLAUDE.md using the Google Search Console MCP

2. Compare yesterday's clicks per page against the 7-day rolling average

3. Flag any page where yesterday's clicks were more than 30% below its 7-day average

4. For each flagged page, also check: current average position vs. 7-day average position

5. Diagnose likely cause:

- If clicks dropped AND position dropped: ranking issue — check for manual action or algorithm update

- If clicks dropped but position held: CTR issue — check title/description or SERP feature changes

- If both are normal but clicks low: could be seasonality or day-of-week effect

6. Output: Page URL | 7-day avg clicks | Yesterday | Change % | Likely cause

This pairs directly with the content audit workflow — when /loop flags a page, you have an immediate path to diagnosis and action.
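The diagnosis rules in step 5 amount to a small decision tree. The Python sketch below is one possible reading of it, under stated assumptions: a position counts as "dropped" when it worsens by more than one place (`pos_tolerance`), and CTR counts as "dropped" when it falls more than 10% (`ctr_drop_pct`). Neither cutoff is defined in the skill, and the function name `diagnose_page` is hypothetical.

```python
from typing import Optional


def diagnose_page(avg_clicks: float, y_clicks: float,
                  avg_ctr: float, y_ctr: float,
                  avg_pos: float, y_pos: float,
                  click_drop_pct: float = 30.0,
                  ctr_drop_pct: float = 10.0,    # assumed cutoff, not from the skill
                  pos_tolerance: float = 1.0     # assumed cutoff, not from the skill
                  ) -> Optional[str]:
    """Return a likely cause for a flagged page, or None if the page is not flagged."""
    if avg_clicks <= 0:
        return None  # no meaningful average to compare against
    click_change = (y_clicks - avg_clicks) / avg_clicks * 100.0
    if click_change >= -click_drop_pct:
        return None  # not more than 30% below the 7-day average
    # A larger position number is a worse ranking.
    if y_pos - avg_pos > pos_tolerance:
        return "ranking issue"
    ctr_change = (y_ctr - avg_ctr) / avg_ctr * 100.0 if avg_ctr > 0 else 0.0
    if ctr_change < -ctr_drop_pct:
        return "CTR issue"
    # Position and CTR both held, so fewer people searched.
    return "possible seasonality or day-of-week effect"
```

The third branch relies on the fact that if clicks fell while position and CTR held, impressions must have fallen, which points to demand and not to the page.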

Use Case 4: Competitor SERP Monitoring

If you’ve set up Ahrefs MCP, you can monitor competitor position changes on your target keywords and surface opportunities in real time.

# Competitor SERP Watch

When invoked:

1. Pull SERP overview from Ahrefs for the top 5 target keywords defined in CLAUDE.md

2. Record positions for our domain and for each competitor listed in CLAUDE.md

3. Compare to the baseline in ~/monitoring/serp-baseline.json

4. Flag:

- Any competitor that moved into positions 1-3 on a keyword we're targeting

- Any competitor that dropped out of top 10 (opportunity to take their featured snippet or position)

- Any new domain appearing in top 10 that wasn't there last cycle

5. Output a summary table per keyword showing position changes

6. Update baseline

Run this once a day:

/loop 24h /competitor-serp-watch

Combined with the skills system, you can chain this so that when a competitor drops out of the top 10, Claude automatically queues up a content brief to target the opening.
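For a sense of what the three flag rules in the Competitor SERP Watch skill compute, here is a small Python sketch for a single keyword. The function name `serp_changes` and the input shape (domain mapped to position) are assumptions for illustration; the Ahrefs MCP may return SERP data in a different structure.

```python
def serp_changes(baseline: dict, current: dict, our_domain: str) -> dict:
    """Compare two SERP snapshots for one keyword.

    Both snapshots map domain -> position (hypothetical shape).
    A domain missing from a snapshot is treated as outside the top 10.
    """
    outside = 999  # sentinel position for "not ranking"
    domains = (set(baseline) | set(current)) - {our_domain}
    entered_top3, left_top10, new_in_top10 = [], [], []
    for domain in sorted(domains):
        old = baseline.get(domain, outside)
        new = current.get(domain, outside)
        if new <= 3 < old:
            entered_top3.append(domain)
        if old <= 10 < new:
            left_top10.append(domain)
        if new <= 10 and domain not in baseline:
            new_in_top10.append(domain)
    return {
        "entered_top3": entered_top3,
        "left_top10": left_top10,
        "new_in_top10": new_in_top10,
    }
```

A non-empty `left_top10` list is the trigger you would hand to a follow-up step such as a content brief.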

Use Case 5: Campaign Launch Monitoring

The first 4 hours of a new campaign are the most valuable for catching setup errors — wrong audience, broken tracking, disapproved ads that are silently not delivering. Build a launch monitor skill:

# Campaign Launch Monitor

Run this skill immediately after a new campaign goes live. Use /loop 20m /launch-monitor for the first 4 hours.

When invoked:

1. Pull current campaign delivery data (impressions, clicks, spend)

2. Check if spend is pacing correctly vs. daily budget (expected: 20% of daily budget in first 4 hours)

3. Flag if:

- Zero impressions after 30 minutes (delivery issue — likely disapproval or audience too narrow)

- Spend burning unusually fast vs. expected pace (possible bid issue)

- CTR is below 0.5% on a cold audience (creative may need review)

- Conversions tracking shows 0 after first 50 clicks (pixel may be broken)

4. If all metrics are within normal ranges, output: "Launch check [time] — pacing normal"

5. If issues detected, output specific diagnosis and recommended action

This turns a 4-hour manual watch into 4 hours of working on other things while Claude checks every 20 minutes.
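The launch checks are simple enough to express as a short function. The sketch below is illustrative and makes two assumptions that the skill does not state: expected spend ramps linearly to 20% of the daily budget over the first 4 hours, and "unusually fast" means more than twice that expected pace (`fast_factor`). The function and parameter names are hypothetical.

```python
def launch_flags(minutes_live: int, impressions: int, clicks: int,
                 spend: float, conversions: int, daily_budget: float,
                 fast_factor: float = 2.0) -> list:
    """Return launch issues for one campaign; an empty list means pacing is normal."""
    flags = []
    if minutes_live >= 30 and impressions == 0:
        flags.append("no delivery")
    # Assumed pacing model: linear ramp to 20% of daily budget at the 4-hour mark.
    expected_spend = daily_budget * 0.20 * min(minutes_live, 240) / 240
    if expected_spend > 0 and spend > fast_factor * expected_spend:
        flags.append("overspending")
    if impressions > 0 and clicks / impressions < 0.005:
        flags.append("low CTR")
    if clicks >= 50 and conversions == 0:
        flags.append("no conversions tracked")
    return flags
```

With a 20-minute loop, `minutes_live` would simply advance by 20 on each cycle.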

How to Structure Your Monitoring Baseline Files

Every monitoring skill needs a baseline to compare against. Keep these in ~/monitoring/ as simple JSON files. Claude will read and update them each cycle. A typical baseline looks like:

// ~/monitoring/rank-baseline.json

{

"last_updated": "2025-04-15T09:00:00Z",

"client": "acme-co",

"keywords": {

"project management software": 7,

"best project management tool": 12,

"project management for agencies": 3,

"project tracking software": 18

}

}

On the first run of any monitoring skill, if no baseline file exists, Claude will create one with the current data and note that the first cycle establishes the baseline — no alerts will fire until the second run.
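The first-run behavior described above can be sketched in a few lines of Python. This is an assumption about how you might script it yourself, not a description of Claude's internals; the function name `load_or_create_baseline` is hypothetical. Note that a strict JSON parser rejects `//` comments, so the sketch assumes the file contains only the JSON object, without the filename comment line.

```python
import json
from datetime import datetime, timezone
from pathlib import Path


def load_or_create_baseline(path: Path, client: str, current: dict):
    """Return (baseline, created).

    created is True on the first run, when no file existed and the current
    data was written as the baseline. No alerts should fire in that case.
    """
    if path.exists():
        return json.loads(path.read_text()), False
    baseline = {
        "last_updated": datetime.now(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ"),
        "client": client,
        "keywords": current,
    }
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(baseline, indent=2))
    return baseline, True
```

A caller would skip the comparison step whenever `created` is True and compare against the returned baseline otherwise.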

Combining /loop with /schedule

The difference between /loop and /schedule is persistence. /loop runs while your Claude Code session is open. /schedule runs whether or not you’re active — it creates a background trigger that fires on a cron schedule.

For always-on monitoring, convert your loop commands to scheduled triggers:

/schedule "Run /rank-watch and email me any flagged changes" — cron: 0 8,12,16,20 * * *

This runs the rank check at 8am, noon, 4pm, and 8pm daily, regardless of whether Claude Code is open. You get the results in your inbox. No babysitting required.
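If cron syntax is unfamiliar, the five fields are minute, hour, day of month, month, and day of week. The small Python sketch below expands a daily expression like the one above into clock times. It is a deliberately limited helper (the name `daily_fire_times` is hypothetical) that handles only comma-separated minutes and hours with `*` in the remaining fields, not the full cron grammar.

```python
def daily_fire_times(cron: str) -> list:
    """Expand a simple daily cron expression into HH:MM strings.

    Supports only comma-separated minute and hour values, with "*" for
    day of month, month, and day of week.
    """
    minute, hour, day_of_month, month, day_of_week = cron.split()
    if (day_of_month, month, day_of_week) != ("*", "*", "*"):
        raise ValueError("only daily schedules are supported in this sketch")
    minutes = sorted(int(m) for m in minute.split(","))
    hours = sorted(int(h) for h in hour.split(","))
    return [f"{h:02d}:{m:02d}" for h in hours for m in minutes]


print(daily_fire_times("0 8,12,16,20 * * *"))
# ['08:00', '12:00', '16:00', '20:00']
```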

Use /loop for active sessions — campaign launches, testing periods, when you’re working and want live feedback. Use /schedule for always-on passive monitoring of stable campaigns and rankings.

The CLAUDE.md Setup for Monitoring

Your CLAUDE.md file should have a monitoring section for each client or property you’re watching. This is what makes the skills above work without prompting each cycle:

## Monitoring Config

### Active Monitoring — Acme Co

- GSC property: sc-domain:acmeco.com

- Ahrefs project: acme-co

- Target keywords to watch: [list your 10-15 priority keywords]

- Competitors to track: competitor-one.com, competitor-two.com

- Alert thresholds: rank drop > 3 positions, click anomaly > 30% below 7-day avg

- Monitoring baseline folder: ~/monitoring/acme-co/

- Alert recipient: your@email.com

### Ad Campaigns — Active

- Google Ads: [account ID]

- Meta Ads: [account ID]

- Active campaigns: [list names]

- CPA targets: [by campaign]

- Budget: [by campaign]

With this in place, every monitoring skill can reference the right property, the right thresholds, and the right baseline files without you specifying them each time.

Real Numbers: What Monitoring Caught That Manual Checks Missed

In practice, continuous monitoring catches three categories of problems that manual checking misses:

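Slow degradation: A keyword sliding from position 4 to position 8 over 10 days is easy to miss on a weekly check because each individual move is small. A loop catches it as soon as the cumulative drop crosses the threshold, provided the skill compares against a fixed reference point and not only the previous cycle.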

Off-hours events: Algorithm updates, competitor bid changes, and ad disapprovals don’t observe business hours. If something breaks at 11pm on a Friday and you don’t check until Monday morning, you’ve lost a weekend of impressions. A scheduled monitor catches it within the hour.

Multi-client correlation: If you’re running monitoring across 8 clients and three of them show GSC click drops on the same day, that’s a signal — likely an algorithm update affecting a shared niche or a SERP feature change. Without automated monitoring surfacing the pattern, you’d likely investigate them as three isolated incidents and miss the common cause.
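The multi-client pattern is straightforward to detect once each client's flags are collected in one place. As a hedged sketch, assume every flagged click drop is recorded as a (client, date) pair; the function name `correlated_days` and the three-client cutoff are illustrative choices, not part of any skill above.

```python
from collections import defaultdict


def correlated_days(flags: list, min_clients: int = 3) -> dict:
    """Group (client, date) flags by date and keep days hit by several clients.

    Returns date -> sorted list of affected clients.
    """
    by_day = defaultdict(set)
    for client, day in flags:
        by_day[day].add(client)  # a set, so repeated flags for one client count once
    return {
        day: sorted(clients)
        for day, clients in sorted(by_day.items())
        if len(clients) >= min_clients
    }
```

A day that appears in the result is a prompt to look for a shared cause before investigating each client separately.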

What to Build This Week

- Create ~/monitoring/ as your baseline storage folder

- Build the /rank-watch skill and run it manually once to establish a baseline

- Start /loop 4h /rank-watch during your next active working session

- After two days of manual looping, convert it to a /schedule trigger

- Add the GSC anomaly monitor for your highest-traffic client

- Review what each skill flagged at end of week — refine the thresholds based on what was signal vs. noise

The goal in the first week isn’t to have a perfect monitoring system. It’s to build the habit of reviewing Claude’s flags instead of manually checking dashboards. Once that habit forms, you can expand to ad performance monitoring, competitor tracking, and multi-client correlation.

Get the Full Monitoring Skill Pack

I’ve put together a complete set of monitoring skills — rank watch, GSC anomaly detection, ad performance drift, competitor SERP watch, and a launch monitor — along with the CLAUDE.md monitoring config template. All of it is included in The AI Marketing Stack.

Frequently Asked Questions

What is the /loop command in Claude Code?

The /loop command is a built-in Claude Code skill that re-executes a prompt or custom skill on a repeating time interval. You specify the interval (e.g., 30m, 4h, 24h) and the command to repeat, and Claude runs it continuously until you stop the session. It’s designed for monitoring, polling, and any workflow where you need to check for changes over time rather than run a task once.

How is /loop different from /schedule in Claude Code?

/loop runs within your active Claude Code session — when you close the session, the loop stops. /schedule creates a persistent background trigger that runs on a cron schedule independent of whether Claude Code is open. Use /loop for active monitoring during a working session (like a campaign launch), and /schedule for always-on, background monitoring that runs whether you’re at your desk or not.

Can /loop access live data from Google Search Console or Ahrefs?

Yes — if you’ve connected MCP servers for Google Search Console and Ahrefs, each loop iteration can pull live data from those sources. The GSC MCP lets Claude query clicks, impressions, CTR, and position for any property. The Ahrefs MCP lets Claude pull ranking data, competitor positions, and SERP overviews. Without MCP, each loop cycle would ask you to paste in data manually, which defeats the purpose of automation.

How do I stop a /loop command that’s running?

Press Ctrl+C in the Claude Code terminal to stop an active loop. The loop will finish its current cycle before stopping — it won’t interrupt mid-execution. If you’re running a loop in the background with a long interval (like 4 hours), you can also close the Claude Code session entirely and the loop will stop with it. For persistent monitoring that survives session closure, convert the loop to a /schedule trigger instead.

How should I set thresholds to avoid alert fatigue?

Start with wider thresholds than you think you need. A 30% click drop and a 3-position rank decline are conservative defaults that filter out normal day-to-day variance. After two weeks of monitoring, review the flags Claude generated and ask: how many were genuine issues vs. noise? Tighten or loosen thresholds based on that review. For volatile campaigns (high-spend, competitive keywords), you’ll tolerate more noise in exchange for earlier alerts. For stable organic content, you can set tighter thresholds and expect fewer but more meaningful flags.

Can I run /loop for multiple clients at once?

You can run multiple Claude Code sessions in separate terminal tabs, each running a different loop for a different client. Alternatively, build a master monitoring skill that cycles through all your clients in a single run — check all GSC properties, update all baselines, and output a consolidated summary at the end. For more than 5–6 clients, the consolidated approach is cleaner since it keeps all the alerts in one place rather than spread across multiple terminal windows.

Does /loop work without an internet connection or live data access?

Not in any useful way. Each iteration sends the prompt to Claude, which itself requires a network connection, and monitoring skills that depend on live MCP tool calls (which most useful ones do) will fail without a connection to the data source. In practice, /loop is only useful for marketing monitoring when paired with live data access. If the MCP connection drops mid-session, Claude will report the failure at the next cycle rather than silently skipping it.