Why Most “Claude + Google Ads” Guides Miss the Point

Search for “Claude Google Ads” right now and you’ll find two types of content: listicles of generic prompts you could have written yourself (“write me 5 responsive search ad headlines for a plumber”), and shallow MCP setup guides that show you how to connect the server without ever explaining what to actually do with it.

Neither type will help you recover wasted spend, diagnose a CPA spike in 4 minutes, or build a campaign from scratch without opening the Google Ads interface. This guide will.

What you’re going to find here that you won’t find elsewhere:

- A complete comparison of 10 Google Ads MCP servers — including which ones have write access and which don’t

- The critical client selection issue that determines whether MCP works at all (most guides skip this entirely)

- Actual GAQL queries you can run immediately for the seven most valuable audit workflows

- A step-by-step campaign buildout using write-enabled MCP

- Which Claude model to use for which task (and why using Opus for every task is expensive and unnecessary)

- Honest documentation of where Claude gets Google Ads wrong

If you’re already using Claude for other performance marketing workflows, this is the natural next step. Let’s build on that foundation.

The Client Problem Nobody Explains

Before spending an hour setting up a Google Ads MCP server, understand this: MCP servers that run on your own machine do not work in the claude.ai web browser.

This is the single most common point of failure and confusion. People set up a perfectly configured local MCP server, go to claude.ai in their browser, and wonder why they can’t see their Google Ads tools. The claude.ai web interface cannot launch local servers. It can only reach remote (hosted) MCP servers added as custom connectors, and connector availability depends on your plan. Self-hosted servers need a local client.

Here’s where each approach works:

| Claude Client | MCP Support | Best for Google Ads |
|---|---|---|
| Claude.ai (browser) | Remote connectors only (plan-dependent); no local servers | CSV upload + manual analysis; hosted servers where your plan allows |
| Claude Desktop (Mac/Windows app) | Yes, full MCP support | Conversational audits, read-heavy workflows |
| Claude Code (CLI) | Yes, full MCP support + file system + code execution | Automation, buildouts, reporting pipelines, skills |

The exception: claude.ai does have a built-in Google Ads connector available in some configurations (it surfaces as list-accounts and google-ads-download-report tools within the claude.ai interface). This is separate from the external MCP servers we’ll be covering: it’s an Anthropic-managed integration, not something you configure yourself.

For everything in this guide, you need either Claude Desktop or Claude Code. Claude Code is the stronger choice if you want automation, file output, and reusable skill packages — but Claude Desktop works fine for the read-heavy audit workflows.

The Google Ads MCP Landscape: All 10 Servers Compared

In late 2025, Google released an official MCP server for the Google Ads API. Since then, the landscape has fragmented into at least ten credible options — each with different tradeoffs around setup complexity, read vs. write access, and hosting model. Here’s the full picture.

| MCP Server | Access Type | Read | Write | Setup Difficulty | Cost | Best For |
|---|---|---|---|---|---|---|
| Google Official (googleads/google-ads-mcp) | Self-hosted | Yes (GAQL) | No | High (Dev token + OAuth + GCP) | Free (OSS) | Agencies with API access already set up |
| Adspirer | Remote hosted | Yes | Yes (campaigns, keywords, budgets) | Very low (URL + auth) | Free → $199/mo | Most practitioners; officially listed in Claude Desktop |
| itallstartedwithaidea/google-ads-mcp | Self-hosted | Yes (29 tools) | Yes (dry-run default) | Medium (Python + credentials) | Free (OSS) | Full-featured self-hosted write access |
| DigitalRocket-biz/google-ads-mcp-v20 | Self-hosted | Yes (161 tools) | Yes (full CRUD) | High (Python + credentials) | Free (OSS) | Developers needing full API coverage |
| cohnen/mcp-google-ads | Self-hosted | Yes (GAQL + performance) | No | Medium | Free (OSS, 362 stars) | Read-heavy analysis without write risk |
| amekala/ads-mcp | Self-hosted | Yes (39 Google Ads tools) | Yes | Medium | Free (OSS) | Multi-platform (Google + LinkedIn + Meta + TikTok) |
| GoMarble/google-ads-mcp-server | Self-hosted | Yes (list-accounts + GAQL) | No | Medium | Free (OSS) | Minimal footprint, GAQL-focused |
| Adzviser | Remote hosted | Yes (analytics + reporting) | No | Very low (URL only) | Paid ($0.99 trial) | Cross-platform reporting (Google + Meta) |
| Composio Google Ads | Remote hosted | Yes | Yes (customer lists, campaigns) | Low (npx command) | Free tier available | Teams already using Composio for other integrations |
| Zapier MCP (via Google Ads action) | Remote hosted | Limited | Yes (basic actions) | Very low (no code) | Zapier plan required | Non-technical marketers, quick setup |

The most important distinction in this table is read vs. write access. The official Google MCP server is read-only: it can pull data and answer questions but cannot modify bids, pause campaigns, add keywords, or create anything. Several other options in the table (cohnen, GoMarble, Adzviser) are read-only too. Google made this choice deliberately to reduce risk in the initial release.

Write access requires a third-party server: Adspirer (hosted), itallstartedwithaidea (self-hosted), DigitalRocket v20 (self-hosted), or amekala’s multi-platform server. Composio and Zapier also offer more limited write actions. More on which to choose based on your situation in the setup section below.

Three Setup Paths (Pick One)

If you haven’t connected any MCP server yet, start with MCP setup basics before continuing here. Once you understand the config file format, pick one of these three paths based on your situation.

Path 1: No-Code Setup via Adspirer (Recommended for Most)

Adspirer is officially listed in Claude Desktop’s connector directory, which means you can add it without touching a config file. Open Claude Desktop → Settings → Connectors → Search “Adspirer” → Connect. Authenticate via OAuth. Done.

For Claude Code, add it to a project-level .mcp.json file (or register it with the claude mcp add command):

```json
{
  "mcpServers": {
    "google-ads": {
      "type": "http",
      "url": "https://mcp.adspirer.com/google-ads?token=YOUR_ADSPIRER_TOKEN"
    }
  }
}
```

Adspirer gives you read + write access at $49/month (150 calls) or $99/month (600 calls). For an agency managing multiple accounts, this is the lowest-friction path to a fully functional setup.

Path 2: Official Google MCP (Most Credible, Highest Setup Friction)

The official Google MCP server (googleads/google-ads-mcp) is read-only but has the strongest data fidelity and no intermediary layer between your Claude session and the Google Ads API.

Prerequisites you’ll need before you start:

- Google Ads API Developer Token (request it in the API Center of a Google Ads manager account; basic access is free and takes 1–3 business days)

- Google Cloud Project with the Google Ads API enabled

- OAuth 2.0 credentials file (google-ads.yaml)

- Python with pipx installed (brew install pipx on Mac)

Install and configure:

```shell
pipx install google-ads-mcp
```

Create your google-ads.yaml credentials file:

```yaml
developer_token: YOUR_22_CHARACTER_TOKEN
client_id: YOUR_CLIENT_ID.apps.googleusercontent.com
client_secret: YOUR_CLIENT_SECRET
refresh_token: YOUR_REFRESH_TOKEN
login_customer_id: YOUR_MCC_ID  # Optional: only for MCC accounts (10 digits, no hyphens)
use_proto_plus: True  # required when the file is loaded by the google-ads Python client library
```

Add the server to your Claude Code MCP configuration (for example, a project-level .mcp.json):

```json
{
  "mcpServers": {
    "google-ads": {
      "command": "google-ads-mcp",
      "env": {
        "GOOGLE_ADS_YAML_PATH": "/path/to/google-ads.yaml"
      }
    }
  }
}
```

Verify the connection works by asking Claude: “List all Google Ads accounts accessible to this token.” You should see your account IDs and names immediately.

The official server exposes only two tools: list_accessible_customers and search. The search tool accepts any valid GAQL query — which means it’s more flexible than servers with fixed tool names, as long as you know GAQL (covered in the audit section below).
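To see what a GAQL search amounts to outside Claude, here is a minimal sketch that runs a query with Google’s google-ads Python client library. It assumes the library is installed (pip install google-ads) and that your google-ads.yaml loads with GoogleAdsClient.load_from_storage. The run_gaql and get_field helpers are hypothetical names for illustration; this is not the MCP server’s own code.

```python
"""Sketch: run a GAQL query with the google-ads Python client library.

Assumes `pip install google-ads` and a google-ads.yaml the library can load.
run_gaql and get_field are illustrative helpers, not part of any MCP server.
"""
from functools import reduce
from typing import Any, Dict, Iterable, List


def get_field(row: Any, path: str) -> Any:
    """Resolve a dotted GAQL field path (e.g. 'metrics.cost_micros') on a result row."""
    return reduce(getattr, path.split("."), row)


def run_gaql(yaml_path: str, customer_id: str, query: str,
             fields: Iterable[str]) -> List[Dict[str, Any]]:
    """Run `query` against one account and return the requested fields as dicts."""
    # Imported lazily so get_field() works even without the library installed.
    from google.ads.googleads.client import GoogleAdsClient

    client = GoogleAdsClient.load_from_storage(yaml_path)
    ga_service = client.get_service("GoogleAdsService")
    field_list = list(fields)
    # customer_id is the 10-digit account ID without hyphens.
    response = ga_service.search(customer_id=customer_id, query=query)
    return [{f: get_field(row, f) for f in field_list} for row in response]
```

Passing the same field paths you SELECT keeps the output aligned with the query, which makes it easy to compare against what Claude reports.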

Path 3: Full Write Access via itallstartedwithaidea (Self-Hosted)

If you want Claude to actually take actions — update bids, pause campaigns, add negative keywords, create ad groups — you need a write-enabled server. The itallstartedwithaidea/google-ads-mcp repo (29 tools, dry-run default) is the most balanced option: it has comprehensive write coverage but defaults to confirm=False, meaning Claude proposes changes and waits for your approval before executing.

```shell
# Install
git clone https://github.com/itallstartedwithaidea/google-ads-mcp
cd google-ads-mcp
pip install -r requirements.txt

# Configure credentials (same google-ads.yaml as above)
cp google-ads.yaml.example google-ads.yaml
# Edit google-ads.yaml with your credentials
```

Add to your MCP config:

```json
{
  "mcpServers": {
    "google-ads": {
      "command": "python",
      "args": ["/path/to/google-ads-mcp/server.py"],
      "env": {
        "GOOGLE_ADS_YAML_PATH": "/path/to/google-ads.yaml"
      }
    }
  }
}
```

Test the connection: “List all accessible Google Ads accounts.” Then test a write operation in safe mode: “Show me what would happen if I paused the campaign with the lowest ROAS in the last 30 days, but don’t execute anything yet.”

The dry-run behavior is your safety net. Use it for 2 weeks before enabling real execution. This is not optional caution — this is how professional PPC managers actually work with AI tools.
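The confirm contract is easier to trust once you have seen it in code. The sketch below is hypothetical: pause_campaign and the in-memory CAMPAIGNS dict stand in for a real write tool and the Google Ads API, and it does not reproduce any particular server’s implementation. It only shows the pattern: with confirm=False the tool describes the change and leaves state untouched; only an explicit confirm=True applies it.

```python
"""Hypothetical sketch of a dry-run-by-default write tool (not a real server's code)."""
from typing import Dict

# Stand-in for live account state; a real server would call the Google Ads API.
CAMPAIGNS: Dict[str, str] = {"111": "ENABLED", "222": "ENABLED"}


def pause_campaign(campaign_id: str, confirm: bool = False) -> Dict[str, object]:
    """Pause a campaign, but only describe the change unless confirm=True."""
    if campaign_id not in CAMPAIGNS:
        raise KeyError(f"unknown campaign {campaign_id}")
    proposal = {"campaign_id": campaign_id, "from": CAMPAIGNS[campaign_id], "to": "PAUSED"}
    if not confirm:
        # Dry run: report what would happen and change nothing.
        return {"executed": False, **proposal}
    CAMPAIGNS[campaign_id] = "PAUSED"
    return {"executed": True, **proposal}
```

Calling pause_campaign("111") returns a proposal with executed set to False and leaves CAMPAIGNS unchanged; pause_campaign("111", confirm=True) is the only path that mutates state.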

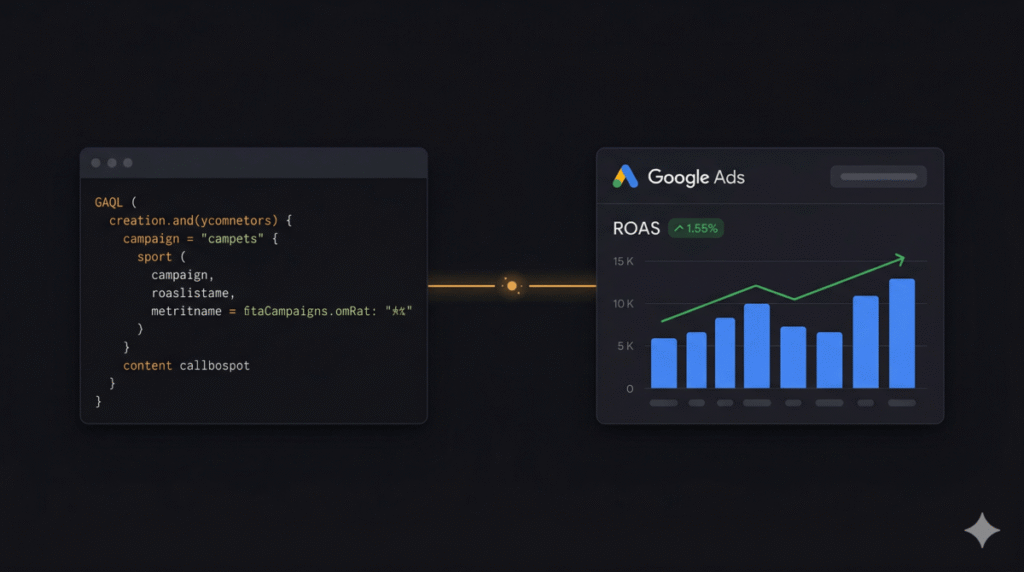

Understanding GAQL Before You Start Auditing

Most of the read-focused MCP servers (including Google’s official one) work through GAQL: Google Ads Query Language. It’s SQL-like — you select fields from resources, filter with WHERE clauses, and order results. Claude can translate natural language into GAQL, but understanding the basics helps you verify what it generates and fix queries when they fail.

The core pattern:

```sql
SELECT field1, field2, metrics.clicks
FROM resource_name
WHERE segments.date DURING LAST_30_DAYS
ORDER BY metrics.cost_micros DESC
LIMIT 50
```

Key GAQL resources you’ll query in every audit:

- campaign: Campaign-level data

- ad_group: Ad group data

- keyword_view: Keyword performance

- search_term_view: Search term report

- ad_group_ad: Individual ad performance

- geographic_view: Geo performance

- campaign_budget: Budget data

One important note: monetary values in GAQL are returned in micros (millionths of the currency unit). A metrics.cost_micros value of 5,000,000 = $5.00. Always divide by 1,000,000 when displaying costs. Claude handles this automatically in most cases, but if a number looks wildly off, that’s why.
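If you post-process results yourself, a pair of small helpers keeps the conversion honest. This sketch uses Decimal rather than float to avoid rounding drift on money; the function names are illustrative.

```python
"""Convert between Google Ads micros and currency units (illustrative helpers)."""
from decimal import Decimal

MICROS_PER_UNIT = Decimal(1_000_000)


def micros_to_amount(micros: int) -> Decimal:
    """5_000_000 micros -> Decimal('5')."""
    return Decimal(micros) / MICROS_PER_UNIT


def amount_to_micros(amount: str) -> int:
    """'20' -> 20_000_000; fractions smaller than one micro are truncated."""
    return int(Decimal(amount) * MICROS_PER_UNIT)


def format_money(micros: int, symbol: str = "$") -> str:
    """Render micros as a two-decimal currency string, e.g. '$5.00'."""
    return f"{symbol}{micros_to_amount(micros):,.2f}"
```

For example, amount_to_micros("20") gives the 20000000 threshold used for the $20 cutoff in Audit 2’s search term query.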

Seven Audit Workflows With Real GAQL Queries

Audit 1: Campaign Performance Overview (The Morning Check)

This is the 35-second daily briefing that replaces your morning dashboard scroll. Run it first thing, every day.
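If you want to double-check the flags Claude raises, the three thresholds in the prompt below can be applied to the returned rows directly. A minimal sketch, assuming each row is a dict keyed by GAQL field name that includes the campaign’s daily budget (campaign_budget.amount_micros), and assuming “pacing” means average daily spend over the 7-day window compared with that daily budget. Note that metrics.conversions_value comes back in currency units rather than micros, and impression share metrics come back as fractions between 0 and 1.

```python
"""Apply the Audit 1 flag thresholds to campaign rows (illustrative sketch)."""
from typing import Dict, List

DAYS = 7
MIN_ROAS = 2.0
MAX_BUDGET_LOST_IS = 0.20    # impression share metrics are fractions (0.20 = 20%)
MAX_PACING_DEVIATION = 0.15  # assumed meaning: avg daily spend vs daily budget


def flag_campaign(row: Dict[str, float]) -> List[str]:
    """Return the Audit 1 flags raised by one campaign row."""
    flags = []
    spend = row["metrics.cost_micros"] / 1_000_000
    # conversions_value is already in currency units, not micros.
    roas = row["metrics.conversions_value"] / spend if spend else 0.0
    if roas < MIN_ROAS:
        flags.append(f"ROAS {roas:.2f} below {MIN_ROAS}")
    lost_is = row["metrics.search_budget_lost_impression_share"]
    if lost_is > MAX_BUDGET_LOST_IS:
        flags.append(f"{lost_is:.0%} IS lost to budget")
    daily_budget = row["campaign_budget.amount_micros"] / 1_000_000
    pacing = (spend / DAYS) / daily_budget - 1
    if abs(pacing) > MAX_PACING_DEVIATION:
        flags.append(f"pacing {pacing:+.0%} vs daily budget")
    return flags
```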

Natural language prompt to Claude:

```text
Pull campaign performance for the last 7 days for account [CUSTOMER_ID].
Show total spend, clicks, impressions, conversions, and ROAS for each campaign.
Flag any campaign where:
- ROAS is below 2.0
- Impression share lost to budget is above 20%
- Spend paced more than 15% above or below daily budget average
Sort by spend descending.
```

The GAQL Claude generates should look roughly like this (verify what it actually runs):

```sql
SELECT
  campaign.name,
  campaign.status,
  campaign_budget.amount_micros,
  metrics.cost_micros,
  metrics.clicks,
  metrics.impressions,
  metrics.conversions,
  metrics.conversions_value,
  metrics.search_budget_lost_impression_share,
  metrics.search_rank_lost_impression_share
FROM campaign
WHERE segments.date DURING LAST_7_DAYS
  AND campaign.status = 'ENABLED'
ORDER BY metrics.cost_micros DESC
```

campaign_budget.amount_micros is included so the pacing flag has a daily budget to compare against.

Audit 2: Search Term Mining (Negative Keyword Identification)

This is where I’ve seen the most concrete ROI from Claude + MCP. A properly run search term audit typically surfaces 15–25% of spend going to irrelevant queries. The manual version takes 2–3 hours; the Claude version takes 4 minutes and is more thorough.
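Claude makes the judgment call (assigning each term to an intent category); the totals are plain arithmetic you can recompute. A sketch assuming Claude’s categorized output has been collected into (term, category, cost) tuples with cost already converted to currency units; summarize_waste is an illustrative name.

```python
"""Total wasted spend per intent category from categorized search terms (sketch)."""
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

Row = Tuple[str, str, float]  # (search term, category, cost in currency units)


def summarize_waste(rows: Iterable[Row], top_n: int = 10) -> Dict[str, dict]:
    """Group terms by category, sum their cost and keep the costliest top_n terms."""
    grouped: Dict[str, List[Tuple[str, float]]] = defaultdict(list)
    for term, category, cost in rows:
        grouped[category].append((term, cost))
    summary = {}
    for category, terms in grouped.items():
        terms.sort(key=lambda t: t[1], reverse=True)  # costliest first
        summary[category] = {
            "total_waste": round(sum(cost for _, cost in terms), 2),
            "top_terms": [term for term, _ in terms[:top_n]],
        }
    return summary
```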

Prompt to Claude:

```text
Pull all search terms from the last 30 days for account [CUSTOMER_ID] where:
- Cost > $20
- Conversions = 0
Categorize each term by intent:
- Job seeker (people looking for jobs, not services)
- Competitor brand (named competitor searches)
- Informational (research-only queries with no buying intent)
- Geographic mismatch (terms targeting wrong location)
- Product mismatch (related but wrong product category)
- Irrelevant (no clear connection to the offering)
For each category, output:
1. The terms (up to 10 per category)
2. Total wasted spend in that category
3. The recommended negative keyword to add (exact or phrase match)
4. At which level to add it (campaign or ad group)
```

The GAQL behind this:

```sql
SELECT
  search_term_view.search_term,
  search_term_view.status,
  metrics.cost_micros,
  metrics.clicks,
  metrics.conversions,
  metrics.impressions,
  ad_group.name,
  campaign.name
FROM search_term_view
WHERE segments.date DURING LAST_30_DAYS
  AND metrics.cost_micros > 20000000
  AND metrics.conversions = 0
ORDER BY metrics.cost_micros DESC
```

After Claude outputs the analysis, follow up with:

```text
Generate a CSV with two columns: Keyword and Match Type.
This should be formatted for bulk upload to Google Ads Editor.
Include all confirmed negative keywords from the analysis above.
```

Audit 3: Quality Score Diagnostic

Quality Score affects your actual CPC more than most advertisers realize. A one-point improvement in QS translates to roughly 13% reduction in CPC at equal position. This audit finds your worst QS keywords, diagnoses which component is dragging them down, and prioritizes which ones to fix first.
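The savings estimate the prompt below asks for can be made explicit. This sketch applies the roughly-13%-per-point rule of thumb quoted above, compounded per point (an assumption; treat the output as a ranking aid, not a forecast). It follows the prompt in weighting by impressions, although weighting by clicks would track actual spend more closely. CPC is expected in currency units; metrics.average_cpc is a money metric reported in micros, so divide by 1,000,000 first.

```python
"""Estimate CPC savings from raising Quality Score to a target (rule-of-thumb sketch)."""
from typing import List, Tuple

CPC_DROP_PER_QS_POINT = 0.13  # rule of thumb from the text, not an official figure
TARGET_QS = 7


def cpc_savings(avg_cpc: float, quality_score: int) -> float:
    """Per-click saving if QS rose to TARGET_QS, compounding the drop per point."""
    points = max(TARGET_QS - quality_score, 0)
    return avg_cpc * (1 - (1 - CPC_DROP_PER_QS_POINT) ** points)


def rank_fixes(keywords: List[Tuple[str, float, int, int]],
               top_n: int = 10) -> List[Tuple[str, float]]:
    """keywords: (text, avg_cpc, quality_score, impressions); highest impact first."""
    scored = [
        (text, cpc_savings(cpc, qs) * impressions)  # impact score as the prompt defines it
        for text, cpc, qs, impressions in keywords
    ]
    return sorted(scored, key=lambda s: s[1], reverse=True)[:top_n]
```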

Prompt to Claude:

```text
Pull all keywords from account [CUSTOMER_ID] with:
- More than 500 impressions in the last 30 days
- Quality Score below 6
For each keyword, show:
- Quality Score
- Expected CTR component (BELOW_AVERAGE, AVERAGE, or ABOVE_AVERAGE)
- Ad Relevance component
- Landing Page Experience component
- Impressions and current CPC
Then group them:
1. Keywords where Expected CTR is the problem → ad copy issue
2. Keywords where Ad Relevance is the problem → keyword-to-ad group mismatch
3. Keywords where Landing Page Experience is the problem → landing page issue
For each group, calculate potential CPC savings if QS improved to 7.
Prioritize by: (current CPC savings) × (impression volume) = impact score.
Show top 10 highest-impact fixes.
```

GAQL for this audit:

```sql
SELECT
  ad_group_criterion.keyword.text,
  ad_group_criterion.keyword.match_type,
  ad_group_criterion.quality_info.quality_score,
  ad_group_criterion.quality_info.creative_quality_score,
  ad_group_criterion.quality_info.post_click_quality_score,
  ad_group_criterion.quality_info.search_predicted_ctr,
  metrics.impressions,
  metrics.average_cpc,
  ad_group.name,
  campaign.name
FROM keyword_view
WHERE segments.date DURING LAST_30_DAYS
  AND ad_group_criterion.quality_info.quality_score < 6
  AND metrics.impressions > 500
  AND ad_group_criterion.status = 'ENABLED'
ORDER BY metrics.impressions DESC
```

Audit 4: Budget and Pacing Analysis

This catches campaigns that are silently losing revenue due to budget caps — and campaigns wasting budget by overspending into low-quality hours.
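The “if budget doubled” question at the end of the prompt below hides an assumption worth making explicit. A naive answer multiplies current ROAS by the extra budget. This sketch instead caps the extra spend at what the impression share lost to budget suggests is actually available, assuming CPC, conversion rate and ROAS stay constant. They usually worsen as you buy more traffic, so treat the result as an upper bound. Inputs mirror the fields in this audit’s GAQL.

```python
"""Project incremental revenue from doubling a budget-capped campaign (sketch)."""


def doubled_budget_revenue(cost_micros: int, conversions_value: float,
                           daily_budget_micros: int, days: int,
                           search_is: float, budget_lost_is: float) -> float:
    """Upper-bound incremental revenue, assuming constant CPC, CVR and ROAS."""
    spend = cost_micros / 1_000_000
    if spend == 0 or search_is == 0:
        return 0.0
    roas = conversions_value / spend  # conversions_value is in currency units
    # Doubling the budget adds one more daily budget for each day in the window.
    extra_budget = daily_budget_micros / 1_000_000 * days
    # Spend needed to win the impressions currently lost to budget, scaled from
    # the share already won (both metrics are fractions of eligible impressions).
    recoverable_spend = spend * budget_lost_is / search_is
    return roas * min(extra_budget, recoverable_spend)
```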

Prompt to Claude:

```text
For account [CUSTOMER_ID], pull data for the last 7 days showing:
- Daily budget vs. actual spend for each campaign
- Impression share lost to budget
- Top-of-page and absolute-top-of-page rates
Identify:
1. Campaigns hitting budget cap before day ends (budget-constrained winners that need more)
2. Campaigns spending their full budget but with ROAS below target (budget-constrained losers that need less)
3. Campaigns with significant IS lost to rank rather than budget (bid adjustment opportunity)
For budget-constrained winners, calculate: if budget doubled, what's the projected incremental revenue based on current ROAS?
```

Google retired the Average position metric in 2019, so the prompt asks for top-of-page rates instead.

GAQL:

```sql
SELECT
  campaign.name,
  campaign_budget.amount_micros,
  metrics.cost_micros,
  metrics.search_budget_lost_impression_share,
  metrics.search_rank_lost_impression_share,
  metrics.search_impression_share,
  metrics.top_impression_percentage,
  metrics.absolute_top_impression_percentage,
  metrics.conversions_value,
  metrics.conversions
FROM campaign
WHERE segments.date DURING LAST_7_DAYS
  AND campaign.status = 'ENABLED'
ORDER BY metrics.search_budget_lost_impression_share DESC
```

Audit 5: Root Cause Analysis for CPA Spikes

When CPA suddenly jumps and you don’t know why, this workflow isolates which dimension caused it. This typically takes 1–3 hours manually; Claude does it in under 5 minutes.
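The before/after comparison in the prompt below reduces to a few lines per dimension. A sketch assuming you have cost (in currency units) and conversions per segment value for each period, for example from the two queries in this audit. Ranking by spend share × CPA change is one simple heuristic for “top contributing factors”, not the only valid decomposition.

```python
"""Compare CPA per segment before and after a spike (illustrative sketch)."""
from typing import Dict, List, Optional, Tuple

Period = Dict[str, Tuple[float, float]]  # segment -> (cost, conversions)


def cpa(cost: float, conversions: float) -> Optional[float]:
    # CPA is undefined when a segment had no conversions.
    return cost / conversions if conversions else None


def segment_deltas(before: Period, after: Period) -> List[dict]:
    """CPA before/after, % change and share of after-period spend, biggest impact first."""
    total_after = sum(cost for cost, _ in after.values()) or 1.0
    rows = []
    for segment in before.keys() & after.keys():
        cpa_before, cpa_after = cpa(*before[segment]), cpa(*after[segment])
        if not cpa_before or cpa_after is None:
            continue  # no conversions (or zero cost) in a period: inspect by hand
        delta_pct = (cpa_after / cpa_before - 1) * 100
        share = after[segment][0] / total_after
        rows.append({"segment": segment, "cpa_before": cpa_before,
                     "cpa_after": cpa_after, "delta_pct": delta_pct,
                     "spend_share": share, "impact": share * delta_pct})
    return sorted(rows, key=lambda r: r["impact"], reverse=True)
```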

Prompt to Claude:

```text
My account [CUSTOMER_ID] had a CPA spike starting [DATE].
Compare performance in the 7 days before [DATE] vs. the 7 days after [DATE].
Analyze each of these dimensions separately:
1. Device (mobile vs. desktop vs. tablet)
2. Geographic (by state/city)
3. Time of day / day of week
4. Match type (exact vs. phrase vs. broad)
5. Campaign / ad group
For each dimension, calculate:
- CPA before the spike
- CPA after the spike
- Delta (%)
- Share of total spend
Identify the top 3 contributing factors with their impact percentages.
The sum should account for most of the overall CPA change.
```

Run two GAQL queries, one for each time period, and let Claude compare them. Before period:

```sql
SELECT
  segments.device,
  segments.hour_of_day,
  segments.day_of_week,
  metrics.cost_micros,
  metrics.conversions,
  metrics.cost_per_conversion
FROM campaign
WHERE segments.date BETWEEN '[START_DATE_1]' AND '[END_DATE_1]'
  AND campaign.status = 'ENABLED'
```

For the after period, rerun the same query with the second date range. Geography and match type need their own queries: geographic_view fields can’t be selected FROM campaign, so pull location data FROM geographic_view, and pull match type FROM keyword_view using ad_group_criterion.keyword.match_type.

Audit 6: Ad Strength and RSA Performance

Responsive Search Ads with poor asset combinations waste impressions. This audit identifies which ad groups have low-strength RSAs and which asset slots are underperforming.
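The three flags in the prompt below are mechanical once you have one row per ad. A sketch assuming each row carries the ad group name, the ad_strength enum name as the API returns it (POOR, AVERAGE, GOOD, EXCELLENT) and impressions; the 1,000-impression threshold comes from the prompt.

```python
"""Apply the Audit 6 RSA flags to ad rows (illustrative sketch)."""
from collections import defaultdict
from typing import Any, Dict, List

WEAK = {"POOR", "AVERAGE"}
STRONG = {"GOOD", "EXCELLENT"}
BUSY_IMPRESSIONS = 1000  # threshold from the prompt


def rsa_flags(ads: List[Dict[str, Any]]) -> Dict[str, List[str]]:
    """Return {ad group: [issues]} for ad groups that trip at least one flag.

    Each ad dict needs 'ad_group', 'ad_strength' (API enum name) and 'impressions'.
    """
    by_group: Dict[str, List[Dict[str, Any]]] = defaultdict(list)
    for ad in ads:
        by_group[ad["ad_group"]].append(ad)

    flags: Dict[str, List[str]] = {}
    for group, group_ads in by_group.items():
        issues = []
        weak_busy = [a for a in group_ads
                     if a["ad_strength"] in WEAK and a["impressions"] > BUSY_IMPRESSIONS]
        if weak_busy:
            issues.append(f"{len(weak_busy)} Poor/Average ad(s) with >1,000 impressions")
        if not any(a["ad_strength"] in STRONG for a in group_ads):
            issues.append("no ad rated Good or Excellent")
        if len(group_ads) == 1:
            issues.append("only one enabled RSA")
        if issues:
            flags[group] = issues
    return flags
```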

Prompt to Claude:

```text
For account [CUSTOMER_ID], pull all enabled RSAs from the last 30 days.
Show for each ad:
- Ad strength (Poor, Average, Good, Excellent)
- Number of headlines in use (should be 15)
- Number of descriptions in use (should be 4)
- Impressions and CTR
Flag:
1. Ads with "Poor" or "Average" strength that have >1,000 impressions
2. Ad groups where no ad has reached "Good" or "Excellent"
3. Ad groups with only one enabled RSA (zero variation)
For the worst 5 ad groups, suggest specific improvement actions:
- Missing headline themes (check vs. existing headlines)
- Whether descriptions are varied enough
- Specific ad copy recommendations based on the keyword theme
```

GAQL:

```sql
SELECT
  ad_group_ad.ad.responsive_search_ad.headlines,
  ad_group_ad.ad.responsive_search_ad.descriptions,
  ad_group_ad.ad_strength,
  ad_group_ad.status,
  metrics.impressions,
  metrics.ctr,
  metrics.cost_micros,
  ad_group.name,
  campaign.name
FROM ad_group_ad
WHERE segments.date DURING LAST_30_DAYS
  AND ad_group_ad.ad.type = 'RESPONSIVE_SEARCH_AD'
  AND ad_group_ad.status = 'ENABLED'
ORDER BY metrics.impressions DESC
```

Audit 7: Impression Share Competitor Analysis

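This pulls auction insights data to identify where you’re losing to specific competitor pressure, and where you’re actually overbidding against weak competition. Auction insights fields may not be available through the API for every developer token; if Claude can’t retrieve them, export the Auction Insights report from the Google Ads interface as a CSV and work from that.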
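Writing the decision rules in the prompt below as code makes Claude’s recommendations easier to audit. A sketch only: the thresholds (rank loss above 10%, a lowest outranking share of 0.8 as the “wide margin”) are hypothetical starting points, not Google guidance, so tune them to your accounts.

```python
"""Turn impression-share loss into a bid direction (hypothetical thresholds)."""
from typing import Optional

RANK_LOSS_THRESHOLD = 0.10  # hypothetical: >10% IS lost to rank is material
OVERBID_OUTRANKING = 0.80   # hypothetical: outranking every main rival 80%+ of the time


def bid_direction(budget_lost_is: float, rank_lost_is: float,
                  min_outranking_share: Optional[float] = None) -> str:
    """Return 'increase', 'decrease' or 'hold' for one campaign.

    min_outranking_share is the lowest outranking share against your main
    competitors, if auction insights data is available.
    """
    if budget_lost_is > rank_lost_is:
        return "hold"  # budget is the constraint; higher bids won't win more
    if rank_lost_is > RANK_LOSS_THRESHOLD:
        return "increase"  # or improve Quality Score, which this rule can't see
    if min_outranking_share is not None and min_outranking_share >= OVERBID_OUTRANKING:
        return "decrease"  # dominating the auction: test lower bids
    return "hold"
```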

Prompt to Claude:

```text
For account [CUSTOMER_ID], pull auction insights for the last 30 days
at the campaign level.
Show for each campaign:
- My impression share
- Top of page rate
- Absolute top rate
- Outranking share vs. competitors
- Overlap rate with main competitors
Identify:
1. Campaigns where I'm losing IS primarily to budget (not rank): bid increases won't help
2. Campaigns where I'm losing IS to rank: bid or QS improvements needed
3. Any campaigns where I'm outranking competition by a wide margin: potential overbidding
4. Top 3 competitor domains appearing across multiple campaigns
Recommend bid adjustment direction for each campaign (increase, decrease, or hold).
```

Campaign Buildout via Write-Enabled MCP

This is the section that most Google Ads + Claude guides skip entirely. Read-only auditing is useful. The ability to build — without switching tabs, without Google Ads Editor, without bulk upload CSVs — is where Claude + MCP becomes a genuine workflow transformation.

This requires a write-enabled server. Use Adspirer or itallstartedwithaidea. Always work in dry-run mode first.

Step 1: Set Up Your CLAUDE.md with Account Context

Before running a single buildout command, populate your CLAUDE.md file with account-specific context. This prevents Claude from asking the same questions every session.

```markdown
## Google Ads Context: [Client Name]

### Account Info
- Customer ID: [XXXXXXXXXX]
- Industry: [Industry]
- Primary conversion goal: [Lead form / Phone call / Purchase]
- Target CPA: $[X]
- Monthly budget: $[X]
- Geographic targeting: [Cities/states/countries]

### Brand Rules
- Never bid on: [competitor names, irrelevant categories]
- Always use: [brand name format, trademark symbols]
- Tone in ad copy: [Direct/Professional/Conversational]

### Campaign Structure
- [Campaign 1 name]: [Purpose, budget, strategy]
- [Campaign 2 name]: [Purpose, budget, strategy]

### Negative Keyword Lists
- Account-level negatives: [key terms]
- [Campaign 1] negatives: [specific terms]
```

Step 2: Generate Campaign Structure

```text
Based on my CLAUDE.md context, plan a new Search campaign for [OFFERING].
Create a campaign structure with:
- Campaign name following our naming convention
- 4-6 tightly themed ad groups (max 15-20 keywords each)
- 3 keyword match types per theme (exact, phrase, broad)
- 15 headline options per ad group (following RSA best practices)
- 4 descriptions per ad group
For each ad group, include:
- Theme and core keyword intent
- Primary keyword list (exclude generic terms we already have in existing campaigns)
- Negative keywords specific to this ad group to prevent cross-contamination
Output: a structured plan in JSON format I can review before execution.
```

Broad match modifier was retired in 2021, so the third match type is plain broad match.

Step 3: Dry Run Review

```text
Show me what create_campaign would do if I approved the structure above.
Run in dry-run mode (confirm=False). Don't execute anything.
Show me: campaign settings, ad group names, keyword counts, and estimated budget impact.
```

Review the output carefully. Confirm ad group themes are truly distinct. Verify keywords don’t overlap with existing campaigns. Check that every headline is 30 characters or fewer and every description 90 characters or fewer.
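The character-limit check is easy to automate before anything reaches the API. A sketch assuming the plan gives you each ad group’s headline and description lists; it checks the RSA limits (3 to 15 headlines of at most 30 characters, 2 to 4 descriptions of at most 90). It counts characters with len(), which may differ from Google’s count for double-width scripts.

```python
"""Validate planned RSA assets against Google Ads length and count limits (sketch)."""
from typing import List

HEADLINE_MAX, DESCRIPTION_MAX = 30, 90
HEADLINE_COUNT, DESCRIPTION_COUNT = (3, 15), (2, 4)


def validate_rsa(ad_group: str, headlines: List[str], descriptions: List[str]) -> List[str]:
    """Return human-readable problems; an empty list means the assets pass."""
    problems = []
    for kind, items, max_len, (low, high) in (
        ("headline", headlines, HEADLINE_MAX, HEADLINE_COUNT),
        ("description", descriptions, DESCRIPTION_MAX, DESCRIPTION_COUNT),
    ):
        if not low <= len(items) <= high:
            problems.append(f"{ad_group}: {len(items)} {kind}s (need {low}-{high})")
        for text in items:
            if len(text) > max_len:
                problems.append(f"{ad_group}: {kind} too long ({len(text)}/{max_len}): {text!r}")
    return problems
```

Running it over every ad group in the JSON plan from Step 2 before the dry run catches length errors that would otherwise surface as API rejections.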

Step 4: Execute in Stages

```text
Create the campaign shell and 2 ad groups first:
- Campaign: [name], Budget: $[X]/day, Bidding: Target CPA $[X]
- Ad Group 1: [name] with [keyword list]
- Ad Group 2: [name] with [keyword list]
Execute now (confirm=True for each step).
Pause the campaign after creation; I'll review before enabling.
```

Build in stages rather than all at once: one campaign, then two ad groups, then ads, then keywords. If something goes wrong, you want to catch it before the entire structure is live.

Step 5: Add Assets and Extensions

```text
For the campaign we just created, generate:
- 4 sitelink extensions with descriptions
- 3 callout extensions
- 2 structured snippet extensions (services type)
- 1 call extension (phone: [NUMBER])
All sitelinks should link to existing pages on [DOMAIN].
Use URLs that are actually live; check if unsure.
```

Which Claude Model to Use for Which Task

Using Claude Opus for every Google Ads task is like hiring a senior strategist to sort your keywords alphabetically. The right model match saves real money at scale.

| Task | Recommended Model | Why |
|---|---|---|
| Root cause analysis, campaign strategy, complex budget reallocation | Claude Opus 4 | Multi-factor reasoning, connecting disparate data points |
| Daily audits, ad copy generation, GAQL query writing, search term categorization | Claude Sonnet 4 | Best cost-to-capability ratio; handles most PPC tasks cleanly |
| High-volume categorization (1,000+ search terms), keyword list generation, simple reporting | Claude Haiku 4.5 | Very fast, very cheap; for repeatable, high-volume classification tasks |

In practice: run your weekly audit and ad copy generation on Sonnet. Reserve Opus for your monthly strategy review and any root cause analysis where the performance drop is significant enough to warrant deep reasoning. Use Haiku inside automation loops where you’re processing hundreds of search terms or keywords at once.

In Claude Code, you can specify the model per session with claude --model claude-sonnet-4-6 or set a default in your settings.

Agency-Scale Workflows: Multi-Account Management

If you manage more than three accounts, the per-account workflows above become bottlenecks. Here’s how to operate at agency scale.

Morning Multi-Account Brief
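Once Claude has pulled per-account numbers, the flag rules in the brief below are straightforward to recheck. A sketch assuming each account is a dict with yesterday’s cost and conversions, the trailing 7-day totals, a combined daily budget (all costs in currency units), and a list of daily budget-limited booleans with the newest day last. Interpreting “spend vs. daily budget average” as yesterday’s spend against the daily budget is an assumption.

```python
"""Recheck the morning-brief flag rules for one account (illustrative sketch)."""
from typing import Any, Dict, List


def trailing_streak(days: List[bool]) -> int:
    """Length of the run of True values at the end of the list (newest day last)."""
    streak = 0
    for limited in reversed(days):
        if not limited:
            break
        streak += 1
    return streak


def safe_cpa(cost: float, conversions: float) -> float:
    # No conversions means CPA is effectively unbounded.
    return cost / conversions if conversions else float("inf")


def account_flags(acct: Dict[str, Any]) -> List[str]:
    """Return the brief's flags for one account."""
    flags = []
    cpa_yesterday = safe_cpa(acct["cost"], acct["conversions"])
    cpa_7d = safe_cpa(acct["cost_7d"], acct["conversions_7d"])
    if cpa_yesterday > 1.25 * cpa_7d:
        flags.append("CPA >25% above 7-day average")
    if abs(acct["cost"] / acct["daily_budget"] - 1) > 0.20:
        flags.append("spend >20% off daily budget")
    if trailing_streak(acct["budget_limited_days"]) >= 3:
        flags.append("budget-limited 3+ consecutive days")
    return flags
```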

```text
Pull yesterday's performance for all accounts in this MCC: [MCC_ID].
For each account, show:
- Total spend, conversions, CPA
- vs. 7-day average (% change)
Flag any account where:
- CPA is >25% above 7-day average
- Spend is >20% above or below daily budget average
- Any campaign has been budget-limited for 3+ consecutive days
Output as a brief I can review in under 2 minutes.
Format: Account Name | Spend | Conv | CPA | 7d Avg CPA | Status | Flag
```

The per-account GAQL behind the brief:

```sql
SELECT
  customer.descriptive_name,
  customer.id,
  metrics.cost_micros,
  metrics.conversions,
  metrics.cost_per_conversion
FROM customer
WHERE segments.date DURING YESTERDAY
```

A manager (MCC) account doesn’t serve ads itself, so this query runs once per client account ID rather than against the MCC ID. List the client accounts first with a query FROM customer_client on the manager account, then rerun the query above with DURING LAST_7_DAYS for the comparison baseline.

Automated Weekly Reporting

Combine Claude + MCP + automated reporting workflows to eliminate manual report generation. The pattern:

- Claude pulls account data via MCP on a schedule

- Generates a formatted performance summary

- Writes it to a Google Sheet (via Sheets MCP or Google Sheets API)

- Drafts a client-facing email with the key highlights

To run this on a recurring schedule, use the /loop command in Claude Code for within-session monitoring, or the /schedule command for true background automation:

```text
/schedule 0 9 * * 1 "Pull Google Ads weekly performance for all accounts in MCC [ID].
Generate client report summaries. Write to ~/clients/reports/week-$(date +%Y-%m-%d).md"
```

Cross-Platform Analysis (When Managing Google + Meta)

If you’re also managing Meta Ads (covered in the Meta Ads creative testing guide), the amekala/ads-mcp and Adspirer servers both support multi-platform in a single session. This enables questions like:
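It helps to know roughly what the answer to the prompt below should look like before Claude gives it. Under the simplest assumption, that each platform’s average CPA holds for the shifted dollars, the projected change is the shifted amount divided by the lower CPA minus the same amount divided by the higher CPA. Marginal CPA usually rises as spend grows, so treat this as an optimistic bound. The CPA figures below are made-up inputs.

```python
"""Projected conversion change from shifting budget between platforms (sketch)."""


def shift_gain(shift: float, cpa_from: float, cpa_to: float) -> float:
    """Conversions gained by moving `shift` dollars from one platform to another.

    Assumes average CPA stays constant on both platforms (an optimistic simplification).
    """
    lost = shift / cpa_from  # conversions the source platform stops producing
    gained = shift / cpa_to  # conversions the destination platform adds
    return gained - lost


# Hypothetical inputs: Google Search CPA $50, Meta CPA $40, $2,000/month shifted.
print(f"{shift_gain(2000, cpa_from=50, cpa_to=40):+.1f} conversions/month")
```

If Claude’s projection lands far above this constant-CPA figure, ask it to show the assumptions behind the difference.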

```text
Compare last 30-day CPA across all platforms:
- Google Search (pull from MCP)
- Meta (pull from MCP)
Calculate: if we shifted $2,000/month from the higher-CPA platform
to the lower-CPA platform, what's the projected conversion increase?
Assume both platforms have room to absorb additional budget based on IS data.
```

Where Claude Gets Google Ads Wrong (Honest Limits)

No guide on this topic should skip the failure modes. These are documented, real, and worth knowing before you put Claude in charge of anything consequential.

1. GAQL Query Errors

Claude can generate invalid GAQL — wrong field names, incompatible resource combinations, or malformed date ranges. The official server returns a clear error message when this happens. Always validate the query before acting on the results. If a query fails, paste the error back to Claude and ask it to fix the specific issue.

Common error: combining fields from incompatible resources. Not all GAQL fields can be queried together in a single statement — the API will reject queries that mix incompatible resource segments.

2. Hallucinated Change History

This is documented from real practitioner experience (OpenMoves, 2025): when asked to analyze change history without real API data, Claude sometimes generates plausible-sounding but fabricated data. The fix is simple — always pull change history from the actual API via MCP rather than asking Claude to reconstruct it from memory or inference. If you’re not connected to the API, don’t ask for historical change analysis.

3. Context Window Saturation

Large accounts with thousands of keywords and hundreds of campaigns will exceed Claude’s context window in a single session. Signs you’re hitting this limit: Claude starts forgetting earlier data, contradicts itself on account details, or produces increasingly generic responses.

The solution: split large audits into campaign-level sessions. Audit campaigns individually, then synthesize findings in a separate session that only receives the summary outputs.
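Splitting an audit by campaign is mostly bookkeeping. A sketch assuming the raw rows are dicts with a campaign.name key (for example, from a CSV export or a saved query result). It produces one JSON file per campaign so each can go to its own session, leaving only the per-campaign summaries for the final synthesis. The file naming scheme is arbitrary.

```python
"""Split audit rows into one JSON file per campaign (illustrative sketch)."""
import json
import re
from collections import defaultdict
from pathlib import Path
from typing import Dict, Iterable, List


def split_by_campaign(rows: Iterable[Dict]) -> Dict[str, str]:
    """Group rows by 'campaign.name'; return {file name: JSON text} per campaign."""
    grouped: Dict[str, List[Dict]] = defaultdict(list)
    for row in rows:
        grouped[row["campaign.name"]].append(row)
    files = {}
    for name, campaign_rows in grouped.items():
        # Filesystem-safe name; distinct campaigns that sanitize alike would collide.
        safe = re.sub(r"[^A-Za-z0-9_-]+", "_", name).strip("_") or "campaign"
        files[f"{safe}.json"] = json.dumps(campaign_rows, indent=2)
    return files


def write_files(files: Dict[str, str], out_dir: str) -> None:
    """Write each campaign's JSON into out_dir, creating it if needed."""
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    for filename, text in files.items():
        (out / filename).write_text(text)
```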

4. The “Sounds Confident, Is Wrong” Problem on Bidding Strategy

Claude tends to make confident bidding strategy recommendations that are directionally correct but miss account-specific nuance: auction behavior, historical QS trajectory, competitive dynamics in specific ad auctions. Use Claude’s bidding recommendations as a starting point for analysis, not a final answer. Validate against your own data before making structural changes.

5. Security Consideration for Enterprise Use

Research from early 2025 found that 43% of tested MCP server implementations contained command injection flaws, and prompt injection is a related risk on top of that. In the context of Google Ads, prompt injection means: if your ad account contains ads with adversarial text (from competitor conquesting campaigns or display ads pulling user-generated content), there’s a theoretical risk that content could influence Claude’s behavior in a connected session.

For enterprise use, apply principle of least privilege: use read-only MCP for analysis workflows, require human approval for all write operations, and never run automated write workflows on accounts where you don’t fully control the ad creative content.

Claude vs. Dedicated Google Ads AI Tools

If you’re evaluating whether to invest time in this setup or just pay for Opteo, Adalysis, or a dedicated AI optimization platform, here’s the honest comparison.

| Capability | Claude + MCP | Dedicated Tools (Opteo, Adalysis) |
|---|---|---|
| Always-on monitoring | With /schedule or Make/n8n trigger | Built-in, zero setup |
| Pre-built optimization recommendations | Requires prompting | Automated, dashboard-first |
| Ad copy generation | Superior: context-aware, brand-specific | Limited or generic |
| Root cause analysis | Superior: multi-factor reasoning | Rules-based, not contextual |
| Custom workflow building | Unlimited flexibility | Fixed feature set |
| Cross-platform analysis | Yes (with multi-platform MCP) | Rarely (platform-specific) |
| Non-technical user experience | Requires setup investment | Purpose-built UI |
| Price (entry level) | Claude Pro ($20/mo) + MCP server | $99–$389/mo |

The framing that matters: Claude + MCP is not a replacement for dedicated optimization tools. It’s a different category. Opteo is excellent at surfacing the recommendations it was programmed to surface. Claude is excellent at answering questions nobody programmed it to answer — and at doing things no dedicated tool can do, like generating account-specific ad copy, writing Python scripts for custom reporting, or correlating ad performance with external data sources.

The best-performing agencies aren’t choosing between them. They use dedicated tools for always-on monitoring and routine optimizations, and Claude for strategic analysis, copy generation, and custom automation that falls outside the dedicated tool’s feature set.

Your First Week Action Plan

- Day 1: Choose your MCP server based on the comparison table. Install and verify the connection with a single test query (list your accounts).

- Day 2: Run Audit 1 (morning overview) and Audit 2 (search term mining). The search term audit alone will likely surface $500–$2,000 in provably wasted spend in most accounts.

- Day 3: Set up your CLAUDE.md with account context. This pays for itself every session — you stop re-explaining the account from scratch.

- Day 4: Run Audit 3 (Quality Score) and prioritize QS fixes. A 1-point improvement on high-volume keywords compounds through every subsequent click.

- Day 5: If you have write-enabled MCP: run one small buildout (one ad group with 3 ads) in dry-run mode to validate the workflow before using it on live accounts.

- Ongoing: Set up the morning multi-account brief as a scheduled workflow. Package your most-used audit prompts as reusable skills so any team member can run them with one command.

Frequently Asked Questions

Does Google officially support using Claude with Google Ads via MCP?

Yes – Google released an official open-source MCP server (googleads/google-ads-mcp) in October 2025, announced on the Google Ads Developer Blog. This server is read-only and built on the same Google Ads API that agencies and tool developers have used for years. Using it requires a Google Ads API Developer Token (free to apply for). Write operations require community-built servers like Adspirer or itallstartedwithaidea’s implementation — these are third-party tools, not Google-sanctioned, though they use the same official API.

Will Claude make changes to my live campaigns without my approval?

Only if you explicitly configure it to. The recommended write-access servers (itallstartedwithaidea, DigitalRocket v20) default to dry-run mode with confirm=False: Claude outputs what it would do and waits for your approval. Even Adspirer, which has write access, requires you to explicitly instruct Claude to execute changes. The safe operating model is to run in review mode for at least two weeks on any new account before enabling autonomous execution, and even then to automate only mechanical decisions (pausing ads with zero conversions after $200+ spend), not strategic ones (campaign restructuring, bid strategy changes).
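The dry-run-by-default model is simple to express in code. The sketch below is a hypothetical illustration of that gate, not the actual interface of itallstartedwithaidea, DigitalRocket, or Adspirer. With `confirm=False` nothing is executed; with `confirm=True`, only the mechanical rule from the answer above (pause an ad with zero conversions after $200+ spend) is cleared automatically, and strategic changes stay in the review queue. `ProposedChange`, the action names, and `plan_changes` are assumptions.

```python
from dataclasses import dataclass

# Threshold from the "zero conversions after $200+ spend" rule.
AUTO_PAUSE_MIN_SPEND = 200.0


@dataclass(frozen=True)
class ProposedChange:
    """A change Claude proposes. Action names here are hypothetical."""
    action: str  # e.g. "pause_ad", "change_bid_strategy", "restructure_campaign"
    target: str
    spend: float = 0.0
    conversions: float = 0.0


def is_mechanical(change: ProposedChange) -> bool:
    """Only zero-conversion ads past the spend threshold qualify for automation."""
    return (
        change.action == "pause_ad"
        and change.conversions == 0
        and change.spend >= AUTO_PAUSE_MIN_SPEND
    )


def plan_changes(
    changes: list[ProposedChange], confirm: bool = False
) -> tuple[list[ProposedChange], list[ProposedChange]]:
    """Return (execute_now, needs_review).

    confirm=False is a dry run: nothing executes and every change is returned for review.
    confirm=True releases only mechanical changes; strategic ones still need a human.
    """
    if not confirm:
        return [], list(changes)
    execute_now = [c for c in changes if is_mechanical(c)]
    needs_review = [c for c in changes if not is_mechanical(c)]
    return execute_now, needs_review
```

Keeping `is_mechanical` narrow is deliberate: widening it is the code equivalent of enabling autonomous execution, so it should only happen after the review period.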

Do I need a Google Ads API Developer Token to use Claude with Google Ads?

It depends on which MCP server you use. The official Google MCP server requires a Developer Token, and community servers built on the same API also require one. The token is free: you request it from the API Center of a Google Ads manager account (the documentation lives on developers.google.com), and Basic Access is typically approved in 1–3 business days. Until then, a new token works only against test accounts. Hosted solutions like Adspirer or Adzviser handle the API layer on their end: you authenticate via OAuth and they use their own developer tokens, so you don't need to apply for one yourself. If you want the fastest path to getting started without the API setup overhead, Adspirer is the practical choice.

Can Claude work with Google Ads data without MCP, just from a CSV export?

Absolutely, and this is often the right starting point. Export your search terms, keyword performance, or campaign data as a CSV from the Google Ads interface, drop it into your Claude Code session, and run any of the audit prompts above. Claude reads CSV files natively. You lose the live-data connection and can't execute changes directly, but for analysis-heavy workflows (QS diagnostics, search term categorization, ad copy generation) the CSV approach works well and requires zero setup. Start here, validate that Claude's output matches your own judgment on a few accounts, then invest in the MCP setup once you've confirmed the value.
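If you want to pre-filter a large export before handing it to Claude, a few lines of standard-library Python will do. The sketch below assumes a comma-separated search terms export whose header row starts with a `Search term` column and includes `Cost` and `Conversions` columns, preceded by report-title and date-range lines and followed by a `Total` row. Column names and preamble vary with export settings and account language, so adjust them to match your file. The $20 `min_cost` cutoff is an arbitrary example.

```python
import csv
import io


def load_search_terms(text: str) -> list[dict[str, str]]:
    """Parse a search terms CSV export, skipping preamble lines and summary rows."""
    rows = list(csv.reader(io.StringIO(text)))
    # Assumed layout: title/date-range lines come before the real header row.
    header_index = next(
        (i for i, row in enumerate(rows) if row and row[0].strip() == "Search term"), None
    )
    if header_index is None:
        raise ValueError("no 'Search term' header row found")
    header = [cell.strip() for cell in rows[header_index]]
    records = []
    for row in rows[header_index + 1:]:
        if not row or row[0].startswith("Total"):
            continue  # skip blank lines and summary rows
        records.append(dict(zip(header, row)))
    return records


def to_number(value: str) -> float:
    """Convert values like "1,204.50", "$45.10", or "--" to floats."""
    cleaned = value.replace(",", "").replace("$", "").strip()
    return float(cleaned) if cleaned not in ("", "--") else 0.0


def wasted_spend(records: list[dict[str, str]], min_cost: float = 20.0):
    """Return zero-conversion terms at or above min_cost (highest cost first) and their total."""
    flagged = [
        (r["Search term"], to_number(r["Cost"]))
        for r in records
        if to_number(r["Conversions"]) == 0 and to_number(r["Cost"]) >= min_cost
    ]
    flagged.sort(key=lambda item: item[1], reverse=True)
    return flagged, sum(cost for _, cost in flagged)
```

When reading the export from disk, open it with `open(path, encoding="utf-8-sig", newline="")` so a leading byte-order mark doesn't end up in the first cell. Passing the flagged list and total to Claude alongside the search term audit prompt keeps the conversation focused on the terms that actually cost money.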

How is this different from just using Google Ads’ built-in AI features?

Google's native AI (Smart Bidding, Performance Max, AI-driven broad match, Responsive Search Ads optimization) is a delivery system: it decides who sees your ads and which creative variants to show them. It's excellent at that job. What it cannot do: explain why your CPA spiked last Tuesday, identify the specific search terms wasting 20% of your budget, or write ad copy calibrated to your specific customer persona and brand voice. Claude operates at the strategy and analysis layer. The two are complementary: Google's AI optimizes delivery, and Claude optimizes the strategic inputs that feed that delivery. Accounts that use both (a strong foundational structure built and audited with Claude, with delivery optimized by Google's AI) tend to outperform accounts relying on either alone.

Which Google Ads MCP server should an agency use for managing multiple client accounts?

For agency use with multiple client accounts (MCC access), Adspirer is the most practical starting point: it's hosted, supports MCC, and has an official listing in Claude Desktop, so there's no self-hosted server to maintain. For agencies with the technical capacity that want no intermediary layer and maximum data fidelity, the official Google MCP with a shared Developer Token handles MCC access cleanly. The itallstartedwithaidea server (29 tools, write-enabled) is the best self-hosted option when you need write access for buildout workflows. Whichever server you choose, keep a separate CLAUDE.md per client, one per project directory, so account context doesn't bleed between sessions.
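Keeping one project directory per client is easy to script. The sketch below creates `<root>/<slug>/CLAUDE.md` for each client from a small template, then checks that no client's file mentions another client's customer ID, a cheap guard against context bleed. The template fields (`slug`, `name`, `customer_id`, `target_cpa`, `notes`) and the example customer IDs are hypothetical; put whatever account context your audits actually rely on into the template.

```python
from pathlib import Path

# Hypothetical template; extend with the account context your audits rely on.
TEMPLATE = """# {name}

- Google Ads customer ID: {customer_id}
- Target CPA: ${target_cpa:,.2f}
- Notes: {notes}
"""


def scaffold_clients(root: Path, clients: list[dict]) -> list[Path]:
    """Create one project directory with a CLAUDE.md per client.

    Existing CLAUDE.md files are left untouched so hand-edited context is never overwritten.
    """
    paths = []
    for client in clients:
        project = root / client["slug"]
        project.mkdir(parents=True, exist_ok=True)
        path = project / "CLAUDE.md"
        if not path.exists():
            path.write_text(TEMPLATE.format(**client), encoding="utf-8")
        paths.append(path)
    return paths


def find_context_bleed(root: Path, clients: list[dict]) -> list[tuple[str, str]]:
    """Return (client, other_client) pairs where a CLAUDE.md mentions another client's ID."""
    problems = []
    for client in clients:
        text = (root / client["slug"] / "CLAUDE.md").read_text(encoding="utf-8")
        for other in clients:
            if other["slug"] != client["slug"] and other["customer_id"] in text:
                problems.append((client["slug"], other["slug"]))
    return problems
```

Skipping existing files is a design choice: once a CLAUDE.md has been refined by hand, re-running the scaffold for new clients should not reset it.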

Can I use Claude to build an entire Google Ads campaign from scratch?

Yes, with a write-enabled MCP server (Adspirer, itallstartedwithaidea, or DigitalRocket v20). The workflow covered above (CLAUDE.md setup → structure planning → dry-run review → staged execution) will build a complete campaign, including ad groups, keywords, RSAs, and extensions (which Google Ads now calls assets), without opening the Google Ads interface. In practice, the first buildout takes about 30–45 minutes end-to-end; subsequent campaigns in the same account run much faster because your CLAUDE.md already holds the account context. For large buildouts (10+ ad groups), build in stages rather than all at once: it's easier to catch and fix structural errors early than after the entire campaign is live.
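Staging can be enforced before anything touches the account. The sketch below validates a hypothetical buildout plan against Google's responsive search ad limits (3 to 15 headlines of at most 30 characters each, 2 to 4 descriptions of at most 90 characters each) and only then splits the ad groups into stages. The `AdGroupPlan` structure and the default stage size of three ad groups are assumptions for illustration, and Python's `len()` may not match Google's character count for every language.

```python
from dataclasses import dataclass, field

# Responsive search ad limits in Google Ads.
MIN_HEADLINES, MAX_HEADLINES, MAX_HEADLINE_CHARS = 3, 15, 30
MIN_DESCRIPTIONS, MAX_DESCRIPTIONS, MAX_DESCRIPTION_CHARS = 2, 4, 90


@dataclass
class ResponsiveSearchAd:
    headlines: list[str]
    descriptions: list[str]


@dataclass
class AdGroupPlan:
    """Hypothetical plan shape; a real MCP server will have its own schema."""
    name: str
    keywords: list[str]
    ads: list[ResponsiveSearchAd] = field(default_factory=list)


def rsa_errors(ad: ResponsiveSearchAd) -> list[str]:
    """List every way an RSA violates the count and length limits."""
    errors = []
    if not MIN_HEADLINES <= len(ad.headlines) <= MAX_HEADLINES:
        errors.append(f"needs {MIN_HEADLINES}-{MAX_HEADLINES} headlines, has {len(ad.headlines)}")
    if not MIN_DESCRIPTIONS <= len(ad.descriptions) <= MAX_DESCRIPTIONS:
        errors.append(f"needs {MIN_DESCRIPTIONS}-{MAX_DESCRIPTIONS} descriptions, has {len(ad.descriptions)}")
    errors += [f"headline too long: {h!r}" for h in ad.headlines if len(h) > MAX_HEADLINE_CHARS]
    errors += [f"description too long: {d!r}" for d in ad.descriptions if len(d) > MAX_DESCRIPTION_CHARS]
    return errors


def stage_buildout(plan: list[AdGroupPlan], stage_size: int = 3):
    """Validate the whole plan, then split it into stages of `stage_size` ad groups.

    Returns (stages, problems). If any ad group has problems, no stages are returned,
    so nothing is built until the plan is clean.
    """
    problems: dict[str, list[str]] = {}
    for group in plan:
        group_errors = [e for ad in group.ads for e in rsa_errors(ad)]
        if not group.keywords:
            group_errors.append("no keywords")
        if not group.ads:
            group_errors.append("no ads")
        if group_errors:
            problems[group.name] = group_errors
    if problems:
        return [], problems
    stages = [plan[i:i + stage_size] for i in range(0, len(plan), stage_size)]
    return stages, {}
```

Each stage can then go through its own dry-run review before execution, so a structural mistake in the first stage surfaces before the remaining ad groups are built.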