The Real Creative Testing Problem

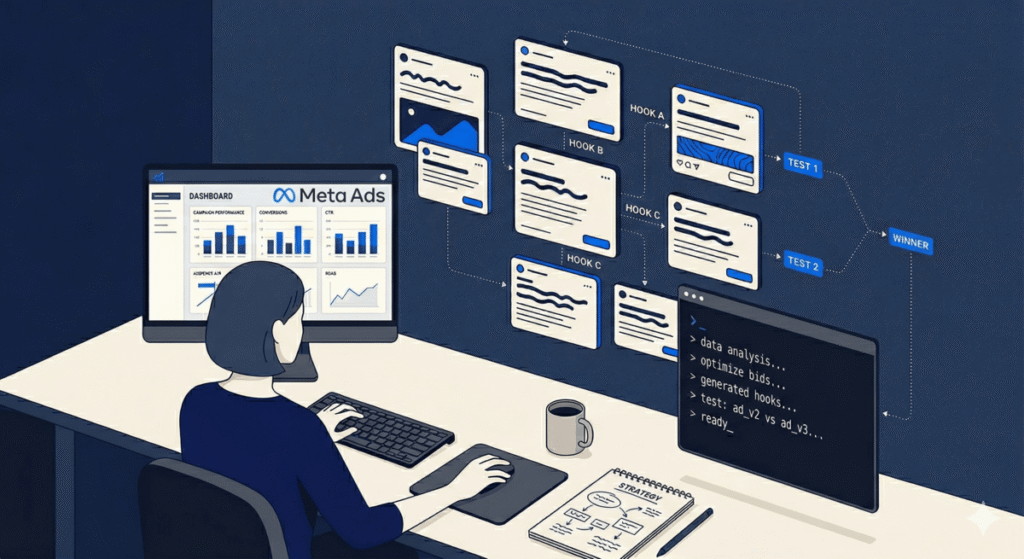

Most Meta Ads teams don’t have a creative problem. They have a creative throughput problem.

They know they should be testing more angles. They know a fresh hook can drop CPAs by 30%. They know the algorithm rewards creative diversity. But between writing briefs, getting approvals, uploading variants, and analyzing results, the realistic cadence ends up being 3–5 new creatives per month — not the 20–30 that would actually tell you something.

The result: accounts run the same 4 creatives until fatigue kills them, then scramble to produce replacements with no systematic understanding of why the last ones worked or stopped working.

Claude Code fixes the throughput problem. But not in the way most people describe it — not as a “write me 10 ad variations” prompt machine. The real use is as a creative strategy layer: analyzing what your account data is actually telling you, building a structured testing framework from that analysis, and then generating variants that are grounded in a specific hypothesis rather than produced at random.

This post walks through the exact workflow I use. It doesn’t require the Meta Marketing API. It works with CSV exports. And it produces a level of creative rigor that most accounts — including agency-managed ones — simply don’t have.

What Claude Code Adds That Meta’s Native AI Doesn’t

Before building anything, it’s worth being clear on what problem you’re solving — because Meta already has AI features. Advantage+ Creative, Dynamic Creative Optimization, and Meta’s built-in copy suggestions all exist. So why does Claude Code matter on top of them?

Meta’s AI is a delivery optimizer. It decides which existing creative to show to which person, and it can make minor tweaks — brightness adjustments, aspect ratio crops, text overlay placement. It’s excellent at that job.

What it doesn’t do: tell you why a creative performed, identify the specific element (hook, visual, CTA, pain point framing) that drove the performance, or help you design the next generation of tests based on what you learned. It optimizes within the creative set you give it. It can’t expand the strategic quality of that set.

That’s the gap Claude fills. Claude Code, given your account data and brand context, functions as a creative strategist — running the pre-flight analysis, identifying what your winners have in common, designing a structured testing matrix, and writing variants that are testing a specific hypothesis. It doesn’t replace Meta’s optimization. It feeds Meta’s optimization better raw material.

Step 0: Set Up Your CLAUDE.md for Meta Ads

Before touching a single ad, spend 20 minutes on your CLAUDE.md file. This is the persistent context that makes every Claude Code session start from the right place instead of requiring you to re-explain the account from scratch.

For Meta Ads work, your CLAUDE.md needs five sections:

## Meta Ads Context — [Client Name]

### Account Overview

- Ad account ID: [ID]

- Primary objectives: [Lead gen / Purchase / App install]

- Monthly budget: $[X]

- Average CPA target: $[X]

- Primary funnel: [Cold → Retargeting / Full funnel]

### Brand Voice & Constraints

- Tone: [Conversational, authoritative, urgent — be specific]

- What we never say: [List 3–5 things — competitor names, claims you can't back, words that feel off-brand]

- What our best ads sound like: [2–3 sentences describing the voice with a real example line]

### Customer Personas (for cold targeting)

- Persona 1 — [Name]: [Job title, main pain point, what they care about, what they distrust]

- Persona 2 — [Name]: [Same format]

- Persona 3 — [Name]: [Same format]

### Winning Ad Vault

These are our proven best performers. When generating new creative, treat these as the benchmark.

- [Ad name]: [Paste the actual hook and first 2 lines. Note: what made it work based on your read of the data]

- [Ad name]: [Same format]

### What We've Already Tested (and killed)

- Discount-forward hooks: killed — high CTR, terrible CVR on this audience

- Fear-based messaging: killed — frequency degraded faster than other creative types

- Video testimonials under 30s: killed — not enough context for cold audience

The Winning Ad Vault and the “already tested” section are the most important parts. Without them, Claude generates the same generic angles that exist in every competitor’s account. With them, Claude has a quality bar to beat and a list of directions already proven not to work.

Part 1: The Diagnosis Workflow

Never start generating new creative until you’ve analyzed the last 30 days. This is where most teams skip a step and end up with a library of new ads that make the same mistakes as the ones they just retired.

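Export from Meta Ads Manager: an ad-level (not campaign-level) breakdown covering the last 30–90 days, with a daily or weekly time breakdown so first-week CTR can be compared against current CTR. Include these columns: Ad name, Spend, Impressions, CTR, CPA or ROAS, Frequency, Quality ranking (Meta retired the old Relevance score in favor of its ad relevance diagnostics).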
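Before handing the export to Claude, a quick sanity check can catch a missing column. This is a minimal sketch in Python, not part of the workflow itself. The header strings in `REQUIRED_COLUMNS` are assumptions (export headers vary with account settings and language), so adjust them to match your file.

```python
import csv
import io

# Assumed header names; check them against your actual export.
REQUIRED_COLUMNS = ["Ad name", "Spend", "Impressions", "CTR", "CPA", "Frequency"]


def missing_columns(csv_text, required=REQUIRED_COLUMNS):
    """Return the required columns that are absent from the CSV header."""
    reader = csv.DictReader(io.StringIO(csv_text))
    present = set(reader.fieldnames or [])
    return [col for col in required if col not in present]


# Tiny made-up sample with the CPA column left out on purpose.
sample = "Ad name,Spend,Impressions,CTR,Frequency\nHook A,120.50,10000,1.2,2.1\n"
print(missing_columns(sample))  # ['CPA']
```

An empty list means the file has everything the diagnosis prompt asks about.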

Drop the CSV in your working directory, then run this prompt:

I'm attaching 90 days of Meta Ads performance data for [client].

Read the attached CSV. Do the following analysis:

1. Identify the top 5 performers by CPA/ROAS. For each, note their CTR and frequency

at time of peak performance (not just current).

2. Identify the 5 worst performers that received more than $500 in spend.

Diagnose whether they failed at the hook (high impression, low CTR),

the landing page (high CTR, high CPA), or audience fit (low CTR from start).

3. Look at the ad names and copy across all top performers. What do they have in common?

Identify: common emotional triggers, message structure (problem-first vs. solution-first),

length patterns, and whether they use social proof or authority signals.

4. Identify any creative fatigue signals: ads where frequency > 3.5 AND CTR has

declined more than 20% from their first-week CTR.

5. Based on this analysis, write a 3-point creative brief summary:

- What's working (the pattern to replicate)

- What to stop (the patterns that consistently underperform)

- What hasn't been tested yet (gaps in the creative approach based on what you see)

Output the brief in a format I can paste into my CLAUDE.md under "Creative Insights."

The output of this step is not copy. It’s strategic clarity. You now know what your account data actually says about your audience — not what you assumed when you set it up, but what they’ve responded to over real spend.
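The fatigue rule in step 4 of the prompt is mechanical enough to spot-check by hand. The sketch below assumes you already have each ad's first-week CTR and current CTR as plain numbers; the function name, field names, and sample ads are illustrative, not something Meta or Claude provides.

```python
def is_fatigued(frequency, first_week_ctr, current_ctr,
                freq_threshold=3.5, max_ctr_drop=0.20):
    """Fatigue rule from the diagnosis prompt: frequency above the threshold
    AND CTR down by more than `max_ctr_drop` relative to first-week CTR."""
    if first_week_ctr <= 0:
        return False  # no baseline to compare against
    ctr_drop = (first_week_ctr - current_ctr) / first_week_ctr
    return frequency > freq_threshold and ctr_drop > max_ctr_drop


# Made-up ads for illustration only.
ads = [
    {"name": "Hook A", "frequency": 4.1, "first_week_ctr": 2.0, "current_ctr": 1.4},
    {"name": "Hook B", "frequency": 4.0, "first_week_ctr": 2.0, "current_ctr": 1.9},
    {"name": "Hook C", "frequency": 2.2, "first_week_ctr": 2.0, "current_ctr": 1.0},
]
fatigued = [a["name"] for a in ads
            if is_fatigued(a["frequency"], a["first_week_ctr"], a["current_ctr"])]
print(fatigued)  # ['Hook A']
```

Hook C shows why both conditions matter: its CTR halved, but at a frequency of 2.2 the rule does not call that fatigue.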

Part 2: Building the Creative Matrix

The Creative Matrix is the structured framework that prevents you from generating random variations and calling it testing. The idea is simple: instead of “write me 10 ads,” you define the axes you want to test across, generate one variant per cell, and know exactly what hypothesis each ad is testing.

Run this prompt after your diagnosis:

Based on the creative analysis above and the persona definitions in my CLAUDE.md,

build a Creative Matrix for the next testing cycle.

The matrix should have these axes:

- Hook type: [Problem hook / Curiosity hook / Social proof hook / Contrarian hook / Specificity hook]

- Persona: [Use the 3 personas from CLAUDE.md]

- Message angle: [The 3 "what's working" angles from the diagnosis + 1 untested angle]

For each combination, write one short ad unit (primary text + headline, Meta format).

Each ad should clearly reflect the hook type and persona it's written for —

I should be able to tell from reading it which cell in the matrix it occupies.

Format the output as a table: Hook Type | Persona | Angle | Primary Text | Headline

After the table, note which 6–8 combinations you'd prioritize launching first

based on what the diagnosis revealed about this audience.

With 5 hook types × 3 personas × 4 message angles, you have 60 possible combinations. You won’t test all 60 — but you’ll have a structured library to draw from, and every ad you do test is testing a specific cell rather than a vague “let’s try something different” hunch.
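To see where the 60 comes from, the matrix can be enumerated as a Cartesian product of the three axes. This is only an illustration in Python; the persona and angle labels are placeholders standing in for whatever your CLAUDE.md and diagnosis produce.

```python
from itertools import product

hook_types = ["Problem", "Curiosity", "Social proof", "Contrarian", "Specificity"]
personas = ["Persona 1", "Persona 2", "Persona 3"]
# Placeholder angle labels; the real ones come from your diagnosis.
angles = ["Working angle 1", "Working angle 2", "Working angle 3", "Untested angle"]

# One cell per (hook, persona, angle) combination.
matrix = [
    {"hook": hook, "persona": persona, "angle": angle}
    for hook, persona, angle in product(hook_types, personas, angles)
]

print(len(matrix))  # 60
```

Keeping the cells as records like this also makes it easy to tag each launched ad with the cell it occupies.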

The “prioritize first” section is important. Claude, having just analyzed your account data, can make an informed call about which combinations are most likely to surface meaningful signal fastest — which ones are closest to what’s already working, and which ones represent the highest-risk, highest-reward departures from your current creative approach.

Part 3: Writing the Variants

With the matrix built, writing specific variants is fast. The key is keeping each prompt anchored to a single hypothesis. Don’t ask Claude to “write some variants of this ad.” Ask it to test one specific thing.

Here are the five variant types worth testing systematically, with prompts for each:

Hook Variants (testing the opening line)

Take this winning ad from the vault:

[Paste the ad]

Keep everything else identical (body copy, CTA, visual direction).

Rewrite ONLY the opening hook in these 4 styles:

1. Problem hook: Opens by naming the specific pain

2. Curiosity hook: Opens with an unexpected or counterintuitive statement

3. Social proof hook: Opens with a result or credibility signal

4. Direct hook: Opens by naming exactly who this is for

Each hook should be under 12 words. Do not change the body copy.

Label each variant with its hook type.

Length Variants (testing short vs. long copy)

Take this best-performing ad:

[Paste the ad]

Write two versions:

1. Short: 1–2 sentences max. Lead with the hook, end with the CTA.

2. Long: 150–200 words. Expand on the pain, provide specific context,

include one piece of social proof, close with urgency.

Both should share the same core message and CTA.

Note at the top of each: when to use this format based on audience temperature

(cold awareness vs. warm retargeting).

Persona Variants (testing different audience frames)

Write 3 versions of this ad, one for each persona in my CLAUDE.md.

The core offer is identical, but the framing, language, and pain point

referenced should be specific to each persona.

Persona 1 ad should feel like it was written specifically for [Persona 1 name].

Someone outside that persona reading it should feel like it's not for them.

[Paste the ad to reframe]

CTA Variants (testing friction and urgency)

Take this ad:

[Paste the ad]

Keep the body identical. Write 5 CTA variations:

1. Low-friction: no commitment ("See how it works")

2. Benefit-forward: leads with the outcome ("Start saving 3 hours a week")

3. Urgency: time or availability constraint (only if it's genuine)

4. Social proof embedded: references others taking action

5. Direct: what they literally do next ("Book a 20-minute call")

Flag which you'd recommend testing first given this is a cold audience.

Angle Variants (testing different reasons to care)

The core offer: [1-sentence description]

Write 4 ads, each leading with a completely different reason this matters to the customer:

1. Time: How much time does this save or what time-related problem does it solve?

2. Money: The financial outcome, cost, savings, or ROI

3. Fear: What risk or negative outcome does this prevent?

4. Identity: What does using this say about the kind of person/business they are?

These are not variations of each other — they are four separate ads for four different

audience segments defined by what they care most about.

The pattern here: every prompt asks Claude to test one variable at a time, keeps everything else fixed, and explains why you’re testing what you’re testing. This is how you actually learn from Meta’s algorithm instead of just producing more creative churn.
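One way to keep yourself honest about "one variable at a time" is to diff each variant against its control before launch. The sketch below is a hypothetical helper, assuming each ad is stored as a small dictionary of fields; the hook and body text is made up for illustration.

```python
def changed_fields(control, variant):
    """Return the names of the fields whose values differ between two ads."""
    keys = sorted(set(control) | set(variant))
    return [k for k in keys if control.get(k) != variant.get(k)]


def is_single_variable_test(control, variant):
    """True when the variant changes exactly one field of the control."""
    return len(changed_fields(control, variant)) == 1


control = {"hook": "Tired of slow reports?", "body": "Body copy.", "cta": "See how it works"}
hook_variant = dict(control, hook="Most dashboards lie to you.")
muddled_variant = dict(control, hook="Most dashboards lie to you.",
                       cta="Book a 20-minute call")

print(changed_fields(control, hook_variant))              # ['hook']
print(is_single_variable_test(control, muddled_variant))  # False
```

If a variant fails the check, any difference in results cannot be attributed to a single element.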

Part 4: Reading Results and Deciding What to Scale

Two weeks after launching your matrix, you’ll have meaningful data. Bring it back to Claude for the next round of analysis — not just to pick winners, but to understand the pattern across winners.

Here are results from our last creative test cycle (attached CSV):

Analyze the results across these dimensions:

1. Which hook type performed best on cold audiences? Any significant differences

between hook types on warm retargeting vs. cold?

2. Did any persona-specific variants significantly outperform the generic versions?

Which persona showed the strongest signal?

3. What was the best-performing length? Did long copy outperform short on any

specific objective (purchases vs. leads vs. link clicks)?

4. Was there any creative fatigue pattern in the first 2 weeks — ads that started

strong and degraded faster than others?

5. Based on the results, recommend the next 3 hypotheses to test.

For each: what you're testing, why the current results suggest this is worth testing,

and which existing winning ad to use as the control.

Update my CLAUDE.md Winning Ad Vault with any new entries that earned their place.

Add anything killed this cycle to the "already tested" section.

The last instruction matters most. Every test cycle should update your CLAUDE.md. Six months from now, your CLAUDE.md will contain a detailed, account-specific understanding of what works for this audience that no external tool can replicate. That institutional knowledge compounds, and it transfers to every new contractor, agency, or team member who works on the account. (If you haven’t set up a CLAUDE.md for your clients yet, start with Step 0 above.)

Connecting Meta Ads via MCP: Your Options

The CSV workflow above works for everything in this post. But once you’re running the creative testing system consistently, the manual export step becomes the bottleneck — especially when you want daily frequency monitoring, spend pacing alerts, or the ability to pause underperforming ads directly from Claude.

That’s where a Meta Ads MCP server comes in. If you’ve already set up MCP for your marketing stack, adding Meta Ads is one more connection in the same config file. There are now six credible options, and they’re not equivalent. Here’s the honest breakdown so you can pick the right one for how you work.

The Options at a Glance

| MCP Server | Setup | Read | Write | Cost | Best for |
|---|---|---|---|---|---|
| Pipeboard | Remote URL (no install) | Yes (30+ tools) | Yes | Paid | Most practitioners |
| GoMarble | One-line CLI install | Yes | Limited | Free | Read-only analysis |
| Adzviser | Remote URL (no install) | Yes (analytics) | No | Paid | Reporting-focused teams |
| Composio | npx command | Yes | Yes (pause, create) | Free tier | Write automation |
| brijr/meta-mcp | Self-hosted | Yes | Yes (full) | Free (OSS) | Developers |
| GoMarble Alt / attainmentlabs | Self-hosted Python | Yes | Yes (creates as PAUSED) | Free (OSS) | Cautious automation |

Option 1: Pipeboard — Recommended for Most Practitioners

Pipeboard’s meta-ads-mcp is the most fully-featured option and the easiest to set up. It runs as a remote MCP — you paste a URL into your Claude Code config and authenticate via OAuth. No Python environment, no local install.

It provides 30+ tools covering campaign management, ad set insights, creative performance, budget pacing, and audience analysis. It supports both read and write operations — you can pull performance data and instruct Claude to pause an ad, update a budget, or flag creative fatigue, all in the same session.

Setup in Claude Code settings (~/.claude/settings.json):

{
  "mcpServers": {
    "meta-ads": {
      "type": "http",
      "url": "https://mcp.pipeboard.co/meta-ads-mcp?token=YOUR_PIPEBOARD_TOKEN"
    }
  }
}

Or add it via CLI:

claude mcp add --transport http meta-ads "https://mcp.pipeboard.co/meta-ads-mcp?token=YOUR_PIPEBOARD_TOKEN"

Get your Pipeboard API token at pipeboard.co. The free tier covers basic use; paid tiers unlock higher rate limits and multi-account support.

Once connected, verify the connection works:

Use mcp_meta_ads_get_ad_accounts to list all ad accounts connected to this token.

Then test a real workflow:

Pull the last 7 days of performance for all active ads in account [ID].

Show me: ad name, spend, CTR, CPA, and current frequency.

Flag any ad where frequency > 3.5 AND CTR has dropped more than 15% from its first-week average.

Option 2: GoMarble — Free, Read-Only Analysis

If you want live data pulls without a paid subscription, GoMarble’s Facebook Ads MCP is the right choice. It’s free, open-source, and handles the read-side workflows — campaign performance, ad set insights, creative breakdowns — without touching write operations.

One-command install via Smithery:

npx -y @smithery/cli install @gomarble-ai/facebook-ads-mcp-server --client claude

This auto-configures Claude Desktop. For Claude Code CLI, add manually after install:

{
  "mcpServers": {
    "facebook-ads": {
      "command": "python",
      "args": ["/path/to/facebook-ads-mcp-server/server.py"],
      "env": {
        "META_ACCESS_TOKEN": "your_meta_access_token"
      }
    }
  }
}

To get your Meta Access Token: go to Meta Developer Graph API Explorer, generate a User Access Token with ads_read and ads_management permissions, and paste it into the env block. GoMarble does not store your token — it stays local.

GoMarble is the right pick if you want live data for the diagnosis and results-analysis workflows above, but you’re not ready to give Claude write access to live ad accounts yet. That’s a reasonable starting point.

Option 3: Adzviser — Reporting-First Teams

Adzviser’s MCP (https://mcp.adzviser.com/http) is purpose-built for analytics and reporting rather than campaign management. It connects Meta Ads, Google Ads, and other platforms in a single server — useful if you’re doing cross-platform performance analysis and want unified reporting in one Claude session.

Setup is a single URL paste — no install at all:

{
  "mcpServers": {
    "adzviser": {
      "type": "http",
      "url": "https://mcp.adzviser.com/http"
    }
  }
}

It’s read-only — no write operations. If your use case is “I want Claude to pull and analyze performance data across multiple ad platforms in one shot,” Adzviser handles that cleanly. If you need to act on what you find (pause, update, create), you’ll need Pipeboard or Composio instead.

Option 4: Composio — Write Automation with Guardrails

Composio’s Meta Ads MCP supports full write operations — create campaigns, pause ad sets, update budgets — and has a free tier worth starting on before committing to a paid plan.

{
  "mcpServers": {
    "metaads": {
      "command": "npx",
      "args": ["@composio/mcp@latest", "start", "--toolkit=metaads"],
      "env": {
        "COMPOSIO_API_KEY": "your_composio_key"
      }
    }
  }
}

Composio is a good option if you’re already using it for other tool integrations (it supports 250+ apps), since you can manage everything under one API key rather than maintaining separate credentials per platform.

Read vs. Write: What You Should Actually Automate

A note before giving Claude write access to a live ad account: read operations are always safe. Write operations need guardrails.

The way I recommend approaching this:

Start in review mode. Before enabling Claude to take actions, run it in recommendation-only mode for two weeks. Give it the prompt:

Analyze today's ad performance. Tell me:

- Which ads you would pause and why (with the specific threshold that triggered it)

- Which budgets you would adjust and by how much

- Any creative fatigue you're detecting

Do NOT take any actions. Output a recommended action list that I will review and approve.

After two weeks, you’ll know if Claude’s judgment matches yours on this account. Then you can enable execution for the low-stakes operations (pausing clearly-dead creatives, minor budget shifts) while keeping higher-stakes changes (new campaign creation, audience restructuring) as review-and-approve.

The specific operations worth automating first (only the first one actually writes to the ad account):

- Pause ads where frequency > 4.5 AND ROAS is more than 20% below account average — this is mechanical, not strategic

- Send a daily performance summary to a Slack channel or log file — informational, zero risk

- Flag any ad set spending more than 130% of its daily budget pace before noon — alerting, not acting

What to keep human-in-the-loop on:

- Campaign creation (audience configuration is too consequential to automate early)

- Budget increases above 20% (scaling decisions involve strategic context Claude doesn’t always have)

- Anything touching bidding strategy or campaign objective
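The rules above are simple enough to write down as code, which also makes the thresholds explicit before any tool is allowed to act on them. The sketch below is one possible reading in Python: it only produces recommendations and never calls an ads API. The function names and sample ads are hypothetical, and the pacing check assumes linear spend across the day, which is my simplification rather than how Meta paces delivery.

```python
def pause_candidates(ads, account_avg_roas, freq_limit=4.5, roas_gap=0.20):
    """Names of ads matching the pause rule: frequency above the limit AND
    ROAS more than `roas_gap` below the account average. Nothing is paused
    here; the result is a list for a human (or a later step) to act on."""
    roas_floor = account_avg_roas * (1 - roas_gap)
    return [ad["name"] for ad in ads
            if ad["frequency"] > freq_limit and ad["roas"] < roas_floor]


def is_overpacing(spend_so_far, daily_budget, hour_of_day, pace_limit=1.30):
    """Assumes linear pacing: by hour h, h/24 of the daily budget is on pace.
    Only checks before noon, matching the alert described above."""
    if not 0 < hour_of_day < 12:
        return False
    on_pace_spend = daily_budget * hour_of_day / 24
    return spend_so_far > pace_limit * on_pace_spend


def needs_human_approval(current_budget, proposed_budget, max_increase=0.20):
    """Budget increases above `max_increase` stay review-and-approve."""
    if current_budget <= 0:
        return True  # no baseline, so never auto-approve
    return (proposed_budget - current_budget) / current_budget > max_increase


# Made-up ads for illustration only.
ads = [
    {"name": "Ad X", "frequency": 5.0, "roas": 1.2},
    {"name": "Ad Y", "frequency": 5.0, "roas": 1.8},
    {"name": "Ad Z", "frequency": 3.0, "roas": 0.5},
]
print(pause_candidates(ads, account_avg_roas=2.0))  # ['Ad X']
print(is_overpacing(60, 100, hour_of_day=10))       # True
print(needs_human_approval(100, 130))               # True
```

Ad Z has the worst ROAS but is not a pause candidate, because at a frequency of 3.0 the problem is unlikely to be fatigue and deserves a human look.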

The goal is removing the mechanical, rule-based decisions from your plate so you can focus on the judgment calls. MCP makes that possible — but only if you build the right guardrails before you hand over the keys. For always-on background monitoring (frequency checks, pacing alerts while you’re not in a session), combine MCP with the /loop and /schedule commands.

The System, Not the Ad

The most common mistake I see with AI and Meta Ads is using it to produce more output without changing the underlying process. Generating 40 ad variations with Claude and uploading them all to Meta is not a strategy — it’s expensive noise that burns budget while the algorithm tries to find signal in a haystack you created.

The workflow above is different because it’s built around a specific testing philosophy: one hypothesis per ad, analyze before you create, update your knowledge base after every cycle. Claude accelerates each of those steps dramatically. But the discipline of actually running a structured creative testing program is still yours.

What changes: you can now run that program at a scale that was previously only possible at large agencies with dedicated creative strategists. For a solo operator or a small team, that’s a genuine competitive edge.

What to Build This Week

- Add the Meta Ads section to your CLAUDE.md — personas, brand voice, and at least two Winning Ad Vault entries

- Export 60–90 days of ad-level performance data from Meta Ads Manager (CSV)

- Run the Diagnosis Workflow prompt on that export — read what it tells you; update your CLAUDE.md with the Creative Insights output

- Build your first Creative Matrix (just 3 hook types × 2 personas × 2 angles = 12 ads to start) — or package it as a reusable skill so you can invoke it with one command on every client

- Launch the top 6 as a dedicated testing campaign with 10–20% of total account budget

- Schedule a results review in 14 days — run the analysis prompt, update your Winning Ad Vault

The first cycle will feel like more work than just writing ads. The second cycle will be twice as fast and twice as informed. By cycle four, your CLAUDE.md will contain a tested creative playbook that’s specific to your audience — something no competitor can reverse-engineer from your ads alone.

Get the Meta Ads Creative Testing Skill Pack

I’ve packaged the complete workflow above as ready-to-use Claude Code skills: the Diagnosis Skill, the Creative Matrix Builder, the Variant Writer (all 5 types), and the Results Analyzer. Each skill includes the prompt, a sample CLAUDE.md block, and instructions for setting up the folder structure. They’re all included in The AI Marketing Stack.

Frequently Asked Questions

Do I need the Meta Marketing API to use Claude Code for Meta Ads?

No. Every workflow in this post runs on CSV exports from Meta Ads Manager. Export your ad-level performance data, drop the file in your project folder, and Claude reads it directly. A Meta Ads MCP connection (Pipeboard, GoMarble, Adzviser, or Composio) is an optional upgrade for live data pulls and automated monitoring, but it’s not required to get value from the creative strategy and analysis workflows.

How is using Claude for Meta Ads different from Meta’s built-in AI tools?

Meta’s native AI (Advantage+ Creative, DCO, auto-placements) is a delivery optimizer — it decides which of your existing creatives to show to which person, and it can make minor asset modifications. It cannot analyze why your creatives performed, identify patterns across your winning ads, or design the strategic framework for your next test. Claude operates at the strategy layer: pre-flight diagnosis, creative brief development, structured variant writing, and post-cycle analysis. The two are complementary: Claude helps you build a better creative set, and Meta’s AI optimizes the delivery of that set.

How many ad variants should I test at once?

The answer depends on your budget. As a rule: each ad needs roughly $50–100 of spend to generate meaningful signal for lead gen or mid-funnel objectives (more for purchase-optimized campaigns). If you’re testing 20 variants with a $2,000/month testing budget, you’re getting $100 per ad — directionally useful, not statistically conclusive. Start with 6–8 variants per cycle, enough budget to read the results clearly, and expand your matrix once you’ve established a baseline. Creative velocity matters less than creative learning velocity.
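The arithmetic behind that recommendation is worth running for your own numbers. This small Python sketch uses the $2,000 testing budget from the example; the function name is just for illustration.

```python
def spend_per_variant(testing_budget, n_variants):
    """Average test spend each variant receives if the budget is split evenly."""
    if n_variants <= 0:
        raise ValueError("n_variants must be positive")
    return testing_budget / n_variants


for n in (20, 8, 6):
    print(n, round(spend_per_variant(2000, n), 2))
# 20 100.0
# 8 250.0
# 6 333.33
```

At 6–8 variants, each ad gets roughly 2.5 to 3 times the spend it would get at 20, which is what makes the results easier to read.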

How do I write good personas for the CLAUDE.md file?

The most useful persona entries go beyond demographics. Include: the specific job-related frustration that makes your offer relevant to them, the language they use when describing the problem (lift from reviews, Reddit threads, or sales call notes), what they’ve already tried that didn’t work, and what they’re skeptical about. A persona that says “marketing director, 35–45, wants better ROI” is useless. A persona that says “marketing director at a 50-person B2B SaaS company who’s being asked to do more with flat headcount and is tired of agencies who take 2 weeks to produce a single deliverable” is something Claude can write to.

What’s the right budget split between testing and scaling?

Most practitioners recommend 20–30% of total budget to creative testing, with the remainder on scaling proven winners. For a $3,000/month account: roughly $600–900/month in testing budget. This is enough to cycle 6–9 new creatives per month at $100 each in test spend. The key is not letting scaling budgets cannibalize testing — accounts that cut testing when a winner appears usually find themselves 60 days later with no backup when that winner fatigues.

How do I know when a creative is fatiguing vs. just having a bad week?

Two signals matter more than raw CTR decline: frequency and relative performance. A creative with frequency above 3.5 that shows declining CTR is almost certainly fatiguing — the audience has seen it enough that novelty is gone. A creative with low frequency but declining CTR might just be hitting a less receptive audience segment, or it could reflect a broader performance dip. Compare against your account average for the same period. If CTR for that ad is declining faster than account average CTR, that’s creative-specific. If everything is down, it’s an external factor (seasonality, competition, algorithm change). Claude’s diagnosis prompt distinguishes these automatically when you give it the frequency and CTR-over-time data.
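The decision logic in that answer can be sketched as a small classifier. This is one possible reading, not a definitive rule: the labels, the function name, and the order of the checks are my assumptions, and the inputs are fractional CTR changes measured over the same period for the ad and for the account.

```python
def classify_ctr_decline(ad_ctr_change, account_ctr_change, frequency,
                         freq_threshold=3.5):
    """Label a CTR move. Changes are fractions over the same period
    (for example, -0.25 means CTR fell 25%)."""
    if ad_ctr_change >= 0:
        return "stable"
    if ad_ctr_change >= account_ctr_change:
        # The ad is falling no faster than the account as a whole.
        return "account-wide dip"
    if frequency > freq_threshold:
        return "likely fatigue"
    return "creative-specific, low frequency"


print(classify_ctr_decline(-0.25, -0.05, frequency=4.0))  # likely fatigue
print(classify_ctr_decline(-0.10, -0.12, frequency=4.0))  # account-wide dip
print(classify_ctr_decline(-0.25, -0.05, frequency=1.8))  # creative-specific, low frequency
```

The last case is the ambiguous one: the ad is underperforming the account, but frequency is too low to blame fatigue, so audience fit is the next thing to check.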

Which Meta Ads MCP server should I start with?

Start with GoMarble (free, one-line install via Smithery) if you want live data pulls without write access. It’s the fastest path to connecting Claude to real account data for the diagnosis and analysis workflows. Upgrade to Pipeboard when you want write operations (pause, update budget) and multi-account support. Both are legitimate; the difference is free vs. paid and read-only vs. read-write. Avoid jumping straight to write-enabled MCP until you’ve run the read-only workflow for a couple of weeks and confirmed Claude’s analysis matches your own judgment on the account.

Can Claude Code automatically pause underperforming Meta ads?

With a write-enabled MCP (Pipeboard, Composio, or brijr/meta-mcp), yes — Claude can execute API calls to pause ad sets or individual ads. Build a skill that checks daily performance against your CPA threshold and auto-pauses anything that’s 20%+ above target after sufficient spend ($200+). That said, run in review mode first for two weeks — Claude outputs what it would pause and why, you approve before it acts — until you’ve validated the logic on your specific account. Once you’re confident in the thresholds, flip to automated execution for the mechanical decisions and keep human review for anything strategic.
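The pause rule described here comes down to a few lines. The sketch below is a minimal Python version under stated assumptions: the function name is hypothetical, it only returns a decision rather than calling any API, and treating "spent past the minimum with zero conversions" as a pause is my own choice, since the text does not cover that case.

```python
def should_pause(spend, conversions, cpa_target, min_spend=200.0, tolerance=0.20):
    """Pause when CPA is 20%+ above target, but only after sufficient spend."""
    if spend < min_spend:
        return False  # not enough data yet
    if conversions == 0:
        return True   # assumption: past the spend floor with nothing to show
    return spend / conversions >= cpa_target * (1 + tolerance)


print(should_pause(250, 4, cpa_target=50))  # True  (CPA 62.50 vs. 60 limit)
print(should_pause(220, 4, cpa_target=50))  # False (CPA 55.00)
print(should_pause(150, 1, cpa_target=50))  # False (under the $200 floor)
```

In review mode, you would log these decisions next to your own calls for two weeks before letting anything act on them.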