Google Has Been Telling You the Answer Since 2012 (And Most SEOs Still Haven’t Listened)

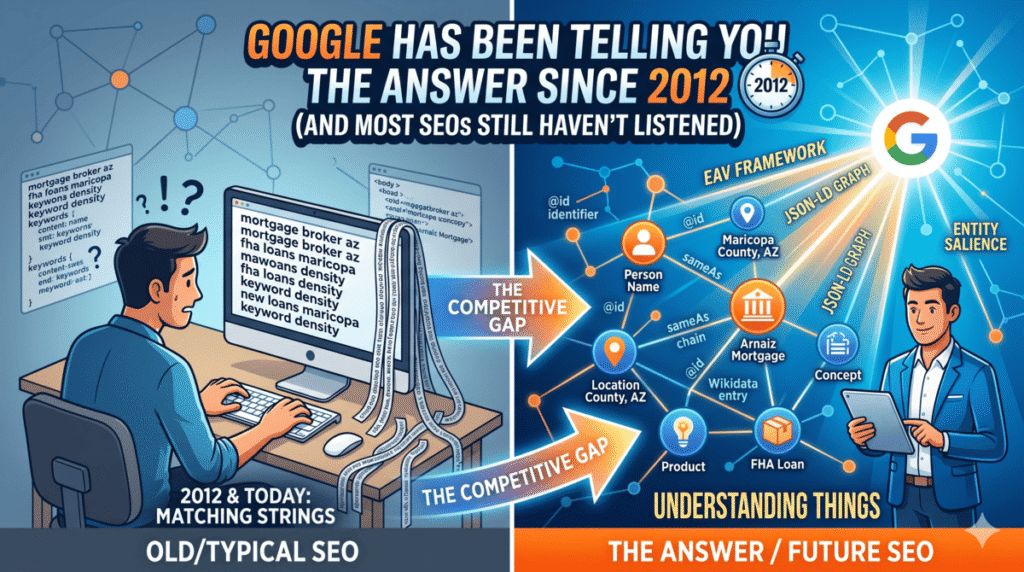

In 2012, Google published a blog post titled “Things, not strings” explaining that its search engine was evolving from matching character sequences to understanding real-world entities: people, places, organizations, concepts. At the time, most SEOs treated it as an interesting algorithm note with no immediate workflow implications.

More than a decade later, Google’s Knowledge Graph contains over 500 billion facts about 5 billion entities. AI Overviews run on the same entity model. Claude, ChatGPT, and Perplexity all build their answers using entity relationship graphs. And the SEO community is still mostly writing content optimized for keyword density rather than entity relationships.

This creates a real competitive gap — but only if you know how to exploit it.

Pages with 15 or more recognized entities in their content show 4.8x higher AI Overview selection rates. Websites with complete entity schema markup receive 3.2x more citations in AI-generated answers than sites without it. These aren’t small incremental improvements. They reflect a fundamental difference in how machines process and recommend content.

This guide covers the complete methodology I use to build entity graphs for clients: the theoretical foundation (Koray Tugberk Gubur’s EAV framework, translated into executable practice), the technical architecture (JSON-LD entity graphs with permanent @id identifiers and sameAs chains), the Wikidata bridge to Google’s Knowledge Graph, and the Claude Code skills that build and monitor all of it. By the end, you’ll have a working implementation, not just a framework.

If you’re new to how I work with Claude Code, the Claude AI for SEO workflow guide gives you the setup foundation.

How Search Engines and AI Models Actually Process Your Content

Before building anything, you need to understand what machines are doing with your content when they crawl it. Most SEOs have a vague sense that “AI reads your pages,” but the specific mechanics matter for how you structure both your content and your schema.

Google’s Natural Language API classifies every entity it finds in your content by type: PERSON, ORGANIZATION, LOCATION, EVENT, CONSUMER_GOOD, WORK_OF_ART, and several others. For each entity, it returns the type label, the individual mentions it found in the text, and a salience score between 0 and 1. The salience score is the one that matters here.

Salience is not keyword density. It’s semantic centrality. A salience score of 0.8 means that entity is what the entire document revolves around. A score of 0.02 means the entity appears incidentally. On a well-structured service page, your primary entity (the business or service) should hold a salience score above 0.5. Most sites I audit have their primary entity scoring somewhere between 0.08 and 0.18, buried under competing entities, weak structure, and filler content.

You can test this on any page right now. Go to Google’s Natural Language API demo, paste your page’s full text content, and run the Entity Analysis. The output will show you exactly which entities Google can identify and how salient each one appears. If your business name doesn’t appear as the most salient entity on your About page, that’s your first problem to fix.
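To run the same check outside the demo, you can call the Natural Language API’s `documents:analyzeEntities` REST method and save the JSON response, which lists `entities` with `name`, `type`, and `salience`. The sketch below assumes you already have that response as a Python dict. The entities and salience numbers in `sample_response` are invented for illustration, and the 0.5 threshold is the target from the paragraph above, not a value Google defines.

```python
def rank_entities(response: dict) -> list[tuple[str, str, float]]:
    """Return (name, type, salience) tuples from an analyzeEntities response, most salient first."""
    entities = response.get("entities", [])
    ranked = sorted(entities, key=lambda e: e.get("salience", 0.0), reverse=True)
    return [(e["name"], e.get("type", "UNKNOWN"), e.get("salience", 0.0)) for e in ranked]


def primary_entity_report(response: dict, primary_name: str, threshold: float = 0.5) -> dict:
    """Check whether the named entity leads the salience ranking and clears the threshold."""
    ranked = rank_entities(response)
    target = primary_name.lower()
    # Exact, case-insensitive name match; name variants the API returns separately are not merged
    match = next((row for row in ranked if row[0].lower() == target), None)
    salience = match[2] if match else 0.0
    return {
        "found": match is not None,
        "is_most_salient": bool(ranked) and ranked[0][0].lower() == target,
        "salience": salience,
        "meets_threshold": salience >= threshold,
    }


# Hypothetical response for an About page (values invented for illustration)
sample_response = {
    "entities": [
        {"name": "Arnaiz Mortgage", "type": "ORGANIZATION", "salience": 0.12, "mentions": []},
        {"name": "FHA loans", "type": "OTHER", "salience": 0.41, "mentions": []},
        {"name": "Arizona", "type": "LOCATION", "salience": 0.05, "mentions": []},
    ],
    "language": "en",
}

if __name__ == "__main__":
    for name, entity_type, salience in rank_entities(sample_response):
        print(f"{salience:.2f}  {entity_type:<13} {name}")
    print(primary_entity_report(sample_response, "Arnaiz Mortgage"))
```

If `is_most_salient` comes back `False` for your About page, the business is not what the parser thinks the page is about.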

AI models add another layer. ClaudeBot, GPTBot, and PerplexityBot cannot execute JavaScript. When they crawl your site, they see the raw HTML response from your server. They cannot trigger React rendering, they cannot wait for dynamic content to load, and they cannot run any JavaScript-dependent schema injection. What they can parse, reliably and every time, is the content inside <script type="application/ld+json"> tags — because that’s static HTML delivered with the initial response.

This is why server-side JSON-LD is not optional. If you’re using a tag manager or client-side JavaScript to inject your structured data, you’re invisible to AI crawlers. Your JSON-LD is the direct pipeline from your content to Claude’s entity understanding layer, and it only works if it is already present in the HTML your server returns.
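A quick way to see what a non-rendering crawler sees is to fetch the raw server response (curl or urllib, no browser involved) and look for JSON-LD in it. The sketch below uses only Python’s standard library; the two HTML strings are hypothetical stand-ins for a server-rendered page and a client-rendered app shell. If the result is empty for a page whose schema does show up in your browser’s DOM inspector, that schema is being injected by JavaScript.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collects the raw text of every <script type="application/ld+json"> element."""

    def __init__(self) -> None:
        super().__init__()
        self._inside = False
        self._chunks = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script":
            script_type = (dict(attrs).get("type") or "").strip().lower()
            if script_type == "application/ld+json":
                self._inside = True
                self._chunks = []

    def handle_data(self, data):
        if self._inside:
            self._chunks.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._inside:
            self.blocks.append("".join(self._chunks))
            self._inside = False


def extract_jsonld(raw_html: str) -> list:
    """Parse each JSON-LD block found in the raw HTML; invalid JSON is reported, not dropped."""
    parser = JsonLdExtractor()
    parser.feed(raw_html)
    parser.close()
    results = []
    for block in parser.blocks:
        try:
            results.append(json.loads(block))
        except json.JSONDecodeError as err:
            results.append({"_error": str(err)})
    return results


server_rendered = (
    '<html><head><script type="application/ld+json">'
    '{"@context": "https://schema.org", "@type": "Organization", "name": "Example Co"}'
    "</script></head><body><h1>Example Co</h1></body></html>"
)
client_rendered = '<html><head><script src="/app.js"></script></head><body><div id="root"></div></body></html>'

print(extract_jsonld(server_rendered))  # one Organization dict
print(extract_jsonld(client_rendered))  # [] -> nothing for a non-JS crawler to read
```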

There’s also an important distinction between the two types of AI bots crawling your site. Training bots like ClaudeBot crawl content to train models. Cloudflare data from early 2026 shows ClaudeBot has a 23,951:1 crawl-to-referral ratio — it crawls nearly 24,000 pages for every single referral it sends back. Retrieval bots like Claude-SearchBot crawl to power real-time answers in Claude’s web search feature and do return traffic. Both interact with your entity structure, but in different ways. The training bots inform what Claude “knows” about your brand at a base level. The retrieval bots determine whether Claude cites you in answers.

Build your entity structure for both.

The EAV Framework: Koray’s Methodology Made Executable

Koray Tugberk Gubur is the person most responsible for bringing semantic SEO methodology into practical use for the broader SEO community. His framework is built on a data model called Entity-Attribute-Value (EAV) that search engines use internally to structure the information they extract from web content.

EAV works like this:

An Entity is a real-world thing with independent existence. A business, a person, a product, a location. Not a keyword or a topic. A specific, identifiable thing.

An Attribute is a property of that entity. For a mortgage broker, attributes include license status, service area, loan types offered, years in operation, and founding date. Koray distinguishes three attribute types worth understanding: root attributes (primary distinguishing features that define what the entity is), rare attributes (specific properties competitors haven’t covered), and unique attributes (properties only this entity possesses). Rare and unique attributes are disproportionately valuable for topical differentiation because they reduce semantic distance between your content and niche search queries your competitors can’t answer.

A Value is the specific data point for a given entity-attribute pair. License status = active. Service area = Maricopa County, Arizona. Loan types = FHA, VA, USDA, conventional.

Every fact you state in your content is technically an EAV triple. The question is whether you’re stating it in a form that NLP systems can decode clearly, or whether you’re burying it in vague language that forces the system to guess.

EAV triples map directly to Subject-Predicate-Object sentences at the content writing level. This connection matters because it tells you exactly how to structure every factual claim in your content.

Compare these two sentences describing the same business:

Weak: “We specialize in helping Arizona residents navigate the complexities of the home financing process with a variety of loan options tailored to their individual needs.”

Strong: “Arnaiz Mortgage is an FHA-approved lender serving Maricopa County, Arizona, with USDA, VA, and conventional loan programs for first-time and repeat buyers.”

The weak version has no identifiable entity (generic “we”), no specific attributes (vague “variety”), and no extractable values. Google’s NLP API would struggle to assign high salience to any entity in that sentence. The strong version has an explicit entity (Arnaiz Mortgage), a specific predicate (is an FHA-approved lender), and multiple concrete values (Maricopa County, USDA, VA, conventional, first-time buyers, repeat buyers). Each one of those values is a machine-readable data point that builds the entity’s attribute profile.
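To make the triple idea concrete, here is the strong sentence written out as explicit entity-attribute-value records. This is a hand decomposition for illustration, not the output of any NLP API, and the attribute labels (`loan program`, `buyer segment`) are illustrative names rather than a fixed vocabulary.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Triple:
    entity: str     # subject: a specific, identifiable thing
    attribute: str  # predicate: a property of that entity
    value: str      # object: the concrete data point


# The "strong" sentence above, decomposed by hand (attribute labels are illustrative)
strong = [
    Triple("Arnaiz Mortgage", "lender approval", "FHA-approved"),
    Triple("Arnaiz Mortgage", "service area", "Maricopa County, Arizona"),
    Triple("Arnaiz Mortgage", "loan program", "USDA"),
    Triple("Arnaiz Mortgage", "loan program", "VA"),
    Triple("Arnaiz Mortgage", "loan program", "conventional"),
    Triple("Arnaiz Mortgage", "buyer segment", "first-time buyers"),
    Triple("Arnaiz Mortgage", "buyer segment", "repeat buyers"),
]

# The "weak" sentence yields nothing: no named entity, no concrete values
weak: list[Triple] = []


def attribute_profile(triples: list[Triple]) -> dict[tuple[str, str], list[str]]:
    """Group values under (entity, attribute): the profile a parser can build from the text."""
    profile = defaultdict(list)
    for t in triples:
        profile[(t.entity, t.attribute)].append(t.value)
    return dict(profile)


def as_sentence(t: Triple) -> str:
    """Render a triple back into an explicit subject-predicate-object sentence."""
    return f"{t.entity}'s {t.attribute} is {t.value}."


for (entity, attribute), values in attribute_profile(strong).items():
    print(f"{entity} | {attribute} | {', '.join(values)}")
print(len(attribute_profile(weak)))  # 0
```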

Koray’s framework includes 41 content rules. The four that matter most for entity building are:

One macro context per page. Each page should orbit a single primary entity or topic. When a page tries to cover multiple primary entities, salience gets distributed across all of them and none reaches prominence. A service page about FHA loans should be about FHA loans at Arnaiz Mortgage specifically — not FHA loans, USDA loans, and VA loans all mixed together. Separate entities get separate pages.

H2 headings as questions. Format your H2 headings as direct questions. “What is an FHA loan?” “What credit score do you need for an FHA loan in Arizona?” “How does Arnaiz Mortgage process FHA applications?” This format aligns with how users search and how AI systems extract passage-level answers. Google’s NLP parser assigns higher prominence to entities that appear in heading elements versus body text.

40-word extractive answers. Directly under each question H2, write a concise answer of roughly 40 words. Not an introduction to the answer. The answer itself. A block like this is the kind of passage Google extracts for featured snippets, and the kind AI systems like Claude pull as citation-worthy content. Write it as a standalone unit that makes sense without the surrounding context.

Complete EAV coverage. Every important attribute of your primary entity should appear somewhere in the content as an explicit value. Don’t assume the reader or the search engine will infer attributes from context. State them. A mortgage broker’s content should explicitly cover license number, state approvals, loan types, service counties, minimum loan amounts, contact details, and years in business. Implied attributes don’t build entity graphs. Stated attributes do.
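One way to enforce this is to keep an attribute checklist per entity and test every draft against it. The sketch below assumes a hand-maintained checklist of attributes and acceptable value strings; the NMLS number and the checklist itself are placeholders, not a required schema.

```python
def eav_coverage(page_text: str, required: dict[str, list[str]]) -> dict[str, bool]:
    """Mark an attribute as covered if any accepted value string appears in the page text.

    Naive case-insensitive substring matching: short values (e.g. "VA") can produce
    false positives inside longer words, so prefer distinctive strings.
    """
    text = page_text.lower()
    return {attr: any(v.lower() in text for v in values) for attr, values in required.items()}


# Hypothetical attribute checklist for a mortgage broker; values are placeholders
required_attributes = {
    "license number": ["NMLS #000000"],
    "service counties": ["Maricopa County"],
    "loan types": ["FHA", "USDA", "conventional"],
    "years in business": ["founded in", "in business since"],
    "minimum loan amount": ["minimum loan"],
}

page = "Arnaiz Mortgage (NMLS #000000) is an FHA-approved lender serving Maricopa County, Arizona."
coverage = eav_coverage(page, required_attributes)
missing = [attr for attr, covered in coverage.items() if not covered]
print(missing)  # ['years in business', 'minimum loan amount']
```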

Entity Salience Engineering: Specific Techniques

Once you understand the EAV model, you can engineer your content specifically to maximize the salience score of your primary entity. These techniques are derived from how Google’s NLP API weights different content signals.

Position matters more than frequency. Google’s entity recognition algorithm weights text position heavily. An entity mentioned in the first sentence of a document receives a stronger salience signal than the same entity mentioned 50 times in the body text. Put your primary entity in the first sentence of the page. Put it in the H1. Put it in the first sentence of each major section.
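You can spot-check the first two placements (H1 and opening sentence) from a page’s raw HTML. A minimal sketch using Python’s standard library; the HTML snippet is hypothetical, and the sentence splitter is deliberately naive.

```python
import re
from html.parser import HTMLParser


class HeadingParagraphParser(HTMLParser):
    """Collects the text of <h1> and <p> elements in document order."""

    def __init__(self) -> None:
        super().__init__()
        self.h1 = []
        self.paragraphs = []
        self._open = None  # "h1" or "p" while inside one of those elements
        self._chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("h1", "p") and self._open is None:
            self._open, self._chunks = tag, []

    def handle_data(self, data):
        if self._open:
            self._chunks.append(data)

    def handle_endtag(self, tag):
        if tag == self._open:
            text = " ".join("".join(self._chunks).split())
            (self.h1 if tag == "h1" else self.paragraphs).append(text)
            self._open = None


def first_sentence(text: str) -> str:
    """Naive split on the first ., ! or ? followed by whitespace (abbreviations will fool it)."""
    match = re.match(r"(.+?[.!?])(?:\s|$)", text)
    return match.group(1) if match else text


def position_report(raw_html: str, entity: str) -> dict[str, bool]:
    parser = HeadingParagraphParser()
    parser.feed(raw_html)
    parser.close()
    name = entity.lower()
    return {
        "in_h1": any(name in h.lower() for h in parser.h1),
        "in_first_sentence": bool(parser.paragraphs)
        and name in first_sentence(parser.paragraphs[0]).lower(),
    }


page = (
    "<h1>FHA Loans in Arizona</h1>"
    "<p>Arnaiz Mortgage is an FHA-approved lender. We serve Maricopa County.</p>"
)
print(position_report(page, "Arnaiz Mortgage"))
```

Here the first sentence names the entity but the H1 does not, which is exactly the kind of gap the paragraph above says to close.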

Structure forces explicit pairings. Tables and ordered lists are particularly effective for entity-attribute-value encoding because they force explicit pairing between an attribute name and its value. A table with rows for “License Status | Active” and “Service Area | Maricopa County, AZ” is unambiguous to NLP systems in a way that prose descriptions rarely are. Use tables for any information that has a clear label-value structure.
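If your pages are generated from structured data, emitting label-value tables can be automated. A minimal sketch, assuming you already hold the attributes as (label, value) pairs; the function name is hypothetical, and the `<th scope="row">` markup keeps each label explicitly attached to its value in the HTML itself.

```python
from html import escape


def attribute_table(pairs: list[tuple[str, str]]) -> str:
    """Render attribute-value pairs as an HTML table: one labeled row per attribute."""
    rows = "\n".join(
        f'  <tr><th scope="row">{escape(label)}</th><td>{escape(value)}</td></tr>'
        for label, value in pairs
    )
    return f"<table>\n{rows}\n</table>"


print(attribute_table([
    ("License Status", "Active"),
    ("Service Area", "Maricopa County, AZ"),
    ("Loan Types", "FHA, VA, USDA, Conventional"),
]))
```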

Reduce competing entity noise. Every additional entity you introduce competes for salience share with your primary entity. A page that discusses your business, two competitors, three loan types, four regulatory agencies, and five geographic markets will have its salience distributed across 15+ entities with none scoring prominently. On focused service pages, remove or minimize entities that don’t directly serve the page’s primary entity relationship. Save competitor mentions for comparison pages where the competitive entity is the intended focus.

Explicit syntax beats implied syntax. “Is,” “provides,” “serves,” “specializes in” are strong predicates that create clear entity-attribute bonds. Passive and vague constructions (“solutions are available,” “clients are helped,” “services are offered”) obscure the entity doing the providing and dilute the predicate signal. Write in active declarative sentences with strong, specific predicates.
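A crude lint pass can surface passive and vague constructions before an editor does. This is a hypothetical regex heuristic, not how any NLP system scores predicates: it flags a form of "to be" followed by a word ending in -ed or -en, plus the stock phrases named above, so expect both misses and false positives.

```python
import re

# Heuristic only: a form of "to be" followed by a word ending in -ed/-en.
# Misses irregular participles ("was built") and can flag adjectives ("is golden").
PASSIVE = re.compile(r"\b(?:is|are|was|were|be|been|being)\s+\w+(?:ed|en)\b", re.IGNORECASE)

# Stock phrases from the examples above that hide the entity doing the providing
VAGUE_PHRASES = ("solutions are available", "clients are helped", "services are offered")


def flag_weak_predicates(sentences: list[str]) -> list[str]:
    """Return sentences that look passive or use a vague stock phrase."""
    flagged = []
    for sentence in sentences:
        lowered = sentence.lower()
        if PASSIVE.search(sentence) or any(p in lowered for p in VAGUE_PHRASES):
            flagged.append(sentence)
    return flagged


draft = [
    "Solutions are available for every budget.",
    "Services are offered across Arizona.",
    "Arnaiz Mortgage serves Maricopa County.",
    "Arnaiz Mortgage is an FHA-approved lender.",
]
print(flag_weak_predicates(draft))
```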

Pronoun reduction on primary entity mentions. After your first introduction of the entity by name, most writers switch to “we,” “they,” or “the company.” NLP systems handle pronoun resolution, but explicit named references consistently strengthen salience. Don’t overdo it to the point of awkwardness, but the first mention in each new section should use the entity name, not a pronoun.
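To audit this on long pages, check which reference to the entity comes first in each section body. A sketch, assuming the page is already split into `{heading: body}` sections; the stand-in word list is a hypothetical starting point you would tune per client.

```python
import re
from typing import Optional

# Hypothetical list of stand-ins writers use after the first named mention
STAND_INS = ("we", "our", "us", "they", "their", "the company", "the firm")


def first_reference(section_text: str, entity: str) -> Optional[str]:
    """Return 'name' or 'stand-in' for whichever reference comes first, or None if neither appears."""
    lowered = section_text.lower()
    name_at = lowered.find(entity.lower())
    stand_in_at = [
        m.start()
        for word in STAND_INS
        for m in re.finditer(rf"\b{re.escape(word)}\b", lowered)
    ]
    first_stand_in = min(stand_in_at) if stand_in_at else -1
    if name_at == -1 and first_stand_in == -1:
        return None
    if first_stand_in == -1 or (name_at != -1 and name_at < first_stand_in):
        return "name"
    return "stand-in"


def sections_opening_with_stand_in(sections: dict[str, str], entity: str) -> list[str]:
    """Headings whose section body refers to the entity by a stand-in before naming it."""
    return [h for h, body in sections.items() if first_reference(body, entity) == "stand-in"]


sections = {
    "What is an FHA loan?": "An FHA loan is a mortgage insured by the Federal Housing "
    "Administration. Arnaiz Mortgage originates FHA loans across Arizona.",
    "How does Arnaiz Mortgage process FHA applications?": "We start with a credit review. "
    "Arnaiz Mortgage then orders the appraisal.",
}
print(sections_opening_with_stand_in(sections, "Arnaiz Mortgage"))
```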

Your Entity Home: The Most Neglected Page on Your Website

Jason Barnard, founder of Kalicube, defines the entity home as the single page that a machine treats as the definitive, authoritative source of facts about your brand. In Google’s entity verification process, every fact it encounters about your business across the web gets traced back to a primary source. That primary source needs to exist on your own domain, and it needs to be explicitly structured as the authoritative record for your entity.

For most businesses, the entity home is the About page. It is also the most neglected page on most websites. About pages typically contain vague mission statements, stock photography, and generic descriptions of “passionate teams” delivering “exceptional service.” From a machine understanding perspective, these pages are nearly useless.

A properly built entity home does specific things:

It states every primary attribute of the entity explicitly. For a business: founding year, founder name, business type, service area, services offered, credentials and certifications, physical address, and contact information. For a person: full name, role, employer, area of expertise, education, and verifiable credentials. Every attribute should be stated directly as an EAV triple, not implied through narrative.

It carries the complete Organization or Person schema with full @id setup and the sameAs chain pointing to every external profile where the entity appears. The schema on this page is the machine-readable version of the entity home, and it needs to be complete, not a minimal implementation.

It links outward to every external platform where the entity has a verified profile. The Kalicube loop works like this: your entity home states facts about the entity and references external profiles via sameAs. Those external profiles (LinkedIn, Wikipedia, Wikidata, Google Business Profile, industry directories) independently confirm the same facts. Some of them link back to your entity home. Google sees a closed corroboration loop and increases its confidence score for the entity. Higher confidence scores lead to Knowledge Graph inclusion, Knowledge Panel appearance, and higher AI citation rates.

The time investment for rebuilding an About page the right way is about three hours. The compounding impact on entity recognition across all AI systems is measured in months. This is one of the highest-leverage technical actions available in 2026.

The JSON-LD Entity Graph: Architecture and Implementation

JSON-LD is not just schema markup. When built correctly, it functions as a machine-readable knowledge graph for your domain — a network of connected entities with explicit relationships between them. The difference between a minimal schema implementation and a full entity graph is the difference between a name tag and a complete personnel file.

The foundation of the graph is the @id property. Every entity in your schema needs a permanent, unique identifier using @id. The common convention is your canonical URL plus a hash and a descriptive label: https://yourdomain.com/#organization for the business entity, https://yourdomain.com/#founder for the founder, https://yourdomain.com/services/fha-loans/#service for a specific service.

The critical rule about @id values: once set, never change them. Your @id is the permanent digital fingerprint for that entity across your site. Every time another page references this entity in its own schema, it does so by pointing to the same @id value. If you change it, you sever every cross-page entity connection you’ve built. Set it correctly from the start and treat it as permanent.
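Because other pages point at an entity by its @id, a changed value shows up as a reference with nothing behind it. A minimal sketch, assuming each page’s JSON-LD has already been parsed into Python dicts; the `site` data is hypothetical. Treating an object that holds only `@id` as a reference is a simplification, since JSON-LD allows one node to be described across several objects.

```python
def collect_ids(node, defined: set, referenced: set) -> None:
    """Walk JSON-LD. Simplification: a dict holding only "@id" is a reference;
    a dict with "@id" plus other properties defines that node."""
    if isinstance(node, dict):
        if "@id" in node:
            (referenced if len(node) == 1 else defined).add(node["@id"])
        for value in node.values():
            collect_ids(value, defined, referenced)
    elif isinstance(node, list):
        for item in node:
            collect_ids(item, defined, referenced)


def dangling_references(pages: dict[str, dict]) -> list[str]:
    """@id values referenced somewhere on the site but defined nowhere."""
    defined: set = set()
    referenced: set = set()
    for jsonld in pages.values():
        collect_ids(jsonld, defined, referenced)
    return sorted(referenced - defined)


ORG = "https://yourdomain.com/#organization"
site = {
    "/": {"@graph": [
        {"@type": "Organization", "@id": ORG, "name": "Your Business Name"},
    ]},
    "/services/fha-loans/": {
        "@type": "Service",
        "@id": "https://yourdomain.com/services/fha-loans/#service",
        "provider": {"@id": "https://yourdomain.com/#org"},  # stale @id from before a rename
    },
}
print(dangling_references(site))  # ['https://yourdomain.com/#org']
```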

The @graph technique lets you define multiple interconnected entities within a single <script> block. This is the right approach for your homepage, About page, and any page that establishes multiple entity relationships. Here’s the entity graph architecture I build for service businesses:

```html
<script type="application/ld+json">
{
  "@context": "https://schema.org",
  "@graph": [
    {
      "@type": "Organization",
      "@id": "https://yourdomain.com/#organization",
      "name": "Your Business Name",
      "url": "https://yourdomain.com",
      "logo": "https://yourdomain.com/logo.png",
      "foundingDate": "2019",
      "description": "One clear declarative sentence stating what the business is and does.",
      "address": {
        "@type": "PostalAddress",
        "streetAddress": "123 Main St",
        "addressLocality": "Phoenix",
        "addressRegion": "AZ",
        "postalCode": "85001",
        "addressCountry": "US"
      },
      "contactPoint": {
        "@type": "ContactPoint",
        "telephone": "+1-555-000-0000",
        "contactType": "customer service"
      },
      "founder": {
        "@id": "https://yourdomain.com/#founder"
      },
      "knowsAbout": [
        "FHA Loans",
        "VA Loans",
        "USDA Loans",
        "Conventional Mortgages",
        "First-Time Homebuyer Programs"
      ],
      "areaServed": {
        "@type": "State",
        "name": "Arizona"
      },
      "sameAs": [
        "https://www.linkedin.com/company/yourbusiness",
        "https://www.facebook.com/yourbusiness",
        "https://www.google.com/maps/place/yourbusiness",
        "https://www.wikidata.org/wiki/Q[YOUR-Q-NUMBER]",
        "https://en.wikipedia.org/wiki/Your_Business_Name"
      ]
    },
    {
      "@type": "Person",
      "@id": "https://yourdomain.com/#founder",
      "name": "Founder Full Name",
      "url": "https://yourdomain.com/about",
      "jobTitle": "Founder and Mortgage Broker",
      "worksFor": {
        "@id": "https://yourdomain.com/#organization"
      },
      "knowsAbout": [
        "FHA Loan Programs",
        "Arizona Real Estate",
        "First-Time Homebuyer Assistance"
      ],
      "sameAs": [
        "https://www.linkedin.com/in/foundername",
        "https://www.wikidata.org/wiki/Q[FOUNDER-Q-NUMBER]"
      ]
    }
  ]
}
</script>
```

Note the explicit founder property on the Organization pointing to the Person @id, and the worksFor property on the Person pointing back to the Organization. These bidirectional references are what make this a graph rather than isolated markup. Search engines can traverse the relationship in both directions, strengthening the entity association between the individual and the organization.
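That two-way link is easy to break when someone edits one node and not the other. A small check, assuming the graph has been parsed into a Python dict; the function names are hypothetical, and it only recognizes `@type` given as the single string "Organization", as in the example graph.

```python
ORG_ID = "https://yourdomain.com/#organization"
FOUNDER_ID = "https://yourdomain.com/#founder"


def as_list(value):
    """schema.org properties may hold one value or an array; normalize to a list."""
    return value if isinstance(value, list) else [value]


def founder_link_issues(doc: dict) -> list[str]:
    """Check that each Organization founder resolves in the @graph and points back via worksFor."""
    nodes = {n["@id"]: n for n in doc.get("@graph", []) if "@id" in n}
    issues = []
    for org_id, org in nodes.items():
        if org.get("@type") != "Organization":
            continue
        for ref in as_list(org.get("founder", [])):
            person_id = ref.get("@id")
            person = nodes.get(person_id)
            if person is None:
                issues.append(f"{org_id}: founder {person_id} is not defined in this @graph")
                continue
            employers = [w.get("@id") for w in as_list(person.get("worksFor", []))]
            if org_id not in employers:
                issues.append(f"{person_id}: worksFor does not point back to {org_id}")
    return issues


linked = {
    "@context": "https://schema.org",
    "@graph": [
        {"@type": "Organization", "@id": ORG_ID, "name": "Your Business Name", "founder": {"@id": FOUNDER_ID}},
        {"@type": "Person", "@id": FOUNDER_ID, "name": "Founder Full Name", "worksFor": {"@id": ORG_ID}},
    ],
}
one_way = {
    "@context": "https://schema.org",
    "@graph": [
        linked["@graph"][0],
        {"@type": "Person", "@id": FOUNDER_ID, "name": "Founder Full Name"},  # worksFor missing
    ],
}
print(founder_link_issues(linked))  # []
print(founder_link_issues(one_way))
```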

The knowsAbout property is underused and high-value. It explicitly tells knowledge graph systems what topics the entity has authority over. For an Organization, list the specific services and subject matter areas. For a Person, list the specific domains of expertise. This feeds the topical authority signal that connects entity credibility to specific query clusters.

For each service page, add a Service or Product schema block that references the parent Organization via @id:

```json
{
  "@type": "Service",
  "@id": "https://yourdomain.com/services/fha-loans/#service",
  "name": "FHA Loan Services",
  "provider": {
    "@id": "https://yourdomain.com/#organization"
  },
  "areaServed": "Arizona",
  "description": "FHA mortgage origination and processing for Arizona buyers with credit scores from 580.",
  "serviceType": "Mortgage Lending"
}
```

Every page that adds a new Service schema node pointing back to your Organization’s @id is expanding the entity graph. Over time, Google and AI systems see a dense web of entity relationships where every service, every article, and every author is explicitly connected to the central entity. This is what machine-readable authority looks like.
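If you maintain many service pages, generating these nodes from one function keeps every `provider` reference pointing at the same Organization @id. A sketch with hypothetical function names; unlike the fragment above, it adds `@context` so the block can stand alone on a page, and it escapes `</` so a description containing `</script>` cannot close the tag early.

```python
import json

ORG_ID = "https://yourdomain.com/#organization"  # must match the permanent @id in the sitewide graph


def service_node(page_url: str, name: str, description: str,
                 area_served: str, service_type: str) -> dict:
    """Build a Service node whose @id derives from the page URL and whose provider is the Organization."""
    base = page_url if page_url.endswith("/") else page_url + "/"
    return {
        "@context": "https://schema.org",
        "@type": "Service",
        "@id": base + "#service",
        "name": name,
        "provider": {"@id": ORG_ID},
        "areaServed": area_served,
        "description": description,
        "serviceType": service_type,
    }


def to_script_tag(node: dict) -> str:
    """Serialize for server-side output; '<\\/' is still valid JSON but cannot end the script element."""
    body = json.dumps(node, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


fha = service_node(
    "https://yourdomain.com/services/fha-loans",
    "FHA Loan Services",
    "FHA mortgage origination and processing for Arizona buyers with credit scores from 580.",
    "Arizona",
    "Mortgage Lending",
)
print(to_script_tag(fha))
```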

Wikidata: Your Direct Connection to Google’s Knowledge Graph

Wikidata is a structured knowledge base maintained by the Wikimedia Foundation. Unlike Wikipedia, which is written for human readers, Wikidata stores facts as machine-readable statements using Q-numbers (unique item identifiers) and P-numbers (property identifiers). Google’s Knowledge Graph draws directly from Wikidata as one of its primary structured data sources.

When you add your Wikidata Q-number to your Organization or Person schema’s sameAs property, you’re creating a direct assertion: “The entity described on this website is the same as Wikidata item Q[X].” This is entity disambiguation at the most direct level. No inference required. Google can merge the facts on your website with the facts in Wikidata’s record and build a more complete, more confident entity profile for your brand.

Getting a Q-number requires creating a Wikidata item. The process:

First, register an account at wikidata.org using the business email associated with the brand you’re creating an entry for. Before creating anything new, make 3-5 minor edits to unrelated existing entries. This builds editing credibility in the system and reduces the likelihood of your new entry being flagged or deleted.

Search thoroughly to confirm the entity doesn’t already have a Wikidata entry. Duplicate entries are against Wikidata policy and will be merged or deleted.

Click “Create a new item” from the left sidebar. Add the label (entity name), a one-line description (lowercase except for proper nouns, e.g., “American mortgage broker”), and aliases if the entity is known by other names.

The properties that matter most:

- P31 (instance of): What type of entity this is. For a business: “business” or more specifically “mortgage company.” For a person: “human.”

- P856 (official website): The canonical URL of the entity’s primary web presence.

- P571 (inception): Founding date for organizations. For persons, use P569 (date of birth) instead.

- P17 (country): Country of operation for organizations. For persons, use P27 (country of citizenship) instead.

- P159 (headquarters location): For businesses with a physical location.

- P749 (parent organization): If the entity operates under a parent company or franchise.

The most important rule in Wikidata: every statement needs at least one external reference citation from an independent, verifiable source. Your own website cannot serve as a reference for statements about your own entity. Use business registration records, state licensing databases, industry directories, news coverage, or official government records. Statements without references are vulnerable to deletion by other editors.
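Before submitting, it helps to draft the item offline and check every statement against that rule. A sketch assuming you keep the draft as a list of dicts; the property IDs are the ones listed above, while the values, reference URLs, and function name are placeholders. This is a planning aid, not a call to the Wikidata API.

```python
from urllib.parse import urlparse


def statements_needing_references(statements: list[dict], own_domain: str) -> list[str]:
    """Property IDs whose statements have no reference outside your own domain."""
    own_hosts = {own_domain, "www." + own_domain}
    flagged = []
    for statement in statements:
        independent = [
            ref for ref in statement.get("references", [])
            if urlparse(ref).hostname not in own_hosts
        ]
        if not independent:
            flagged.append(statement["property"])
    return flagged


# Hypothetical draft item; the reference URLs are placeholders, not real records
draft_item = [
    {"property": "P31", "value": "business", "references": ["https://registry.example.org/record/123"]},
    {"property": "P856", "value": "https://yourdomain.com", "references": ["https://yourdomain.com/about"]},
    {"property": "P571", "value": "2019", "references": []},
]
print(statements_needing_references(draft_item, "yourdomain.com"))  # ['P856', 'P571']
```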

Once your Wikidata entry is published and has received its Q-number (visible in the URL: wikidata.org/wiki/Q1234567), add it to your schema:

```json
"sameAs": [
  "https://www.wikidata.org/wiki/Q1234567",
  "https://www.linkedin.com/company/yourbusiness"
]
```

The typical timeline for a well-referenced Wikidata entry to influence your Google Knowledge Graph status is 4 to 12 weeks. The Knowledge Graph reads Wikidata on a crawl cycle, not in real time. But once the connection is made, it compounds permanently. Every AI system that draws from Google’s Knowledge Graph or Wikidata directly now has a structured, machine-readable record of your entity.
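A quick pre-deploy check catches two common slips: a malformed Wikidata URL and a template placeholder shipped to production. A minimal sketch with hypothetical function names; it accepts both the `/wiki/` page URL used above and Wikidata’s `/entity/` URI form, and Q1234567 is just the example number used above.

```python
import re
from typing import Optional

# Accepts the wiki page form used above and the /entity/ concept URI form
WIKIDATA_ITEM = re.compile(r"^https?://www\.wikidata\.org/(?:wiki|entity)/(Q[1-9][0-9]*)$")


def wikidata_qid(same_as: list[str]) -> Optional[str]:
    """Return the first well-formed Wikidata Q-number in a sameAs list, or None."""
    for url in same_as:
        match = WIKIDATA_ITEM.match(url)
        if match:
            return match.group(1)
    return None


def leftover_placeholders(same_as: list[str]) -> list[str]:
    """Template tokens like Q[YOUR-Q-NUMBER] that were never filled in."""
    return [url for url in same_as if "[" in url or "]" in url]


same_as = [
    "https://www.wikidata.org/wiki/Q1234567",
    "https://www.linkedin.com/company/yourbusiness",
]
print(wikidata_qid(same_as))  # Q1234567
print(leftover_placeholders(["https://www.wikidata.org/wiki/Q[YOUR-Q-NUMBER]"]))
```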

The Semantic Neighborhood Problem

No entity exists in isolation inside a knowledge graph. Every entity is defined partly by the entities it’s associated with, the topics it appears alongside, and the other entities that link to or mention it. This is the semantic neighborhood concept, and it matters for how search engines assign authority to your entity.

Google’s Knowledge Graph organizes entities into semantic neighborhoods based on the topics they consistently appear within and the entities they consistently co-occur with. A mortgage broker who publishes extensively about FHA loans, appears in citations from Arizona real estate publications, and is mentioned alongside Arizona Housing Finance Authority programs is assigned to a semantic neighborhood that includes those entities. When queries related to Arizona FHA lending occur, Google’s system looks within that neighborhood first.

The SEO implication: you don’t just optimize for your own entity in isolation. You optimize for your entity’s neighborhood. This means:

Your content should consistently co-occur with the specific entities that define your authority domain. For a mortgage broker in Arizona, those entities include specific loan programs (FHA 203(b), USDA Section 502, VA home loans), regulatory bodies (NMLS, Arizona Department of Financial Institutions), and geographic entities (Maricopa County, Pima County, Scottsdale, Mesa). These entities should appear explicitly and consistently in your content, not as keyword targets, but because they are the actual entities that define the subject matter.

Your external citations should come from sources already in your target semantic neighborhood. A backlink from an Arizona real estate blog carries more entity association value than a backlink from an unrelated marketing publication, even if the marketing publication has higher domain authority. The neighborhood signal compounds when your co-citations come from sources already clustered with the entities you want to be associated with.

Your internal linking structure should mirror entity relationships. Pages about specific loan programs should link to the parent service page. The founder bio should link to articles that demonstrate expertise in specific subject areas. The entity home should link to every primary service. These internal links don’t just distribute page authority — they signal entity relationship structure to crawling systems.
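One way to keep internal links aligned with entity relationships is to write the intended relationships down as (source page, target page) pairs and diff them against the links each page actually contains. A sketch with hypothetical URLs and function names; it assumes you have already crawled each page’s href values.

```python
from urllib.parse import urldefrag, urljoin


def normalize(page_url: str, href: str) -> str:
    """Resolve relative hrefs, drop #fragments and trailing slashes so equivalent URLs compare equal."""
    absolute, _fragment = urldefrag(urljoin(page_url, href))
    return absolute.rstrip("/")


def missing_entity_links(expected: list[tuple[str, str]],
                         links_by_page: dict[str, list[str]]) -> list[tuple[str, str]]:
    """Return expected (source, target) relationships with no matching link on the source page."""
    missing = []
    for source, target in expected:
        found = {normalize(source, href) for href in links_by_page.get(source, [])}
        if normalize(source, target) not in found:
            missing.append((source, target))
    return missing


expected = [
    # program page -> parent service page
    ("https://yourdomain.com/services/fha-loans/203b/", "https://yourdomain.com/services/fha-loans/"),
    # entity home -> every primary service
    ("https://yourdomain.com/about/", "https://yourdomain.com/services/fha-loans/"),
    ("https://yourdomain.com/about/", "https://yourdomain.com/services/va-loans/"),
]
links_by_page = {
    "https://yourdomain.com/services/fha-loans/203b/": ["../", "/contact/"],
    "https://yourdomain.com/about/": ["/services/fha-loans/#apply"],
}
print(missing_entity_links(expected, links_by_page))
```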

Why This Workflow Only Works in Claude Code

Before getting into the skills, this distinction matters: everything in this section is Claude Code-specific. You cannot replicate it in Claude.ai, ChatGPT, or any browser-based AI tool. The reason is architectural, not cosmetic.

ChatGPT and Claude.ai operate in isolated sessions. You paste content in, get output, and the session ends. Next time you open a new chat, the model knows nothing about your client, their entity graph, their schema architecture, or what you analyzed last week. You re-explain everything, every time. You also have to manually copy page content into the chat, which means you’re limited to whatever you thought to paste. The model never sees the full picture.

Claude Code operates as an agent with persistent context, live tool connections, and a file system it can actually read and write. The differences that matter for entity SEO work:

MCP connections to live data. When a Claude Code skill runs ahrefs.site-explorer-organic-keywords or ahrefs.site-explorer-top-pages, it is pulling real data from your Ahrefs account right now — not analyzing a spreadsheet you exported three days ago. When it calls WebFetch to retrieve a page, it gets the actual live HTML, including whatever JSON-LD is in the response. It can pull competitor pages, client pages, and external directories all within a single session without you copying anything. The MCP setup guide covers connecting Ahrefs, GSC, and WebFetch if you haven’t done that yet.

CLAUDE.md as a persistent entity registry. Claude Code reads the CLAUDE.md file in your project directory at the start of every session. This means you define the entity architecture once and the agent knows it permanently. Your client’s primary entity @id, their sameAs chain, their Wikidata Q-number, their topical authority domain — all of it lives in the CLAUDE.md. Claude never asks you to re-explain what the business does. Every skill runs with full entity context already loaded.

Skills as permanent slash commands. A Claude Code skill is a markdown file saved in .claude/skills/. Once it’s there, you type /entity-audit and the entire multi-step agentic workflow runs. It fetches pages via MCP, analyzes all of them, cross-references the data, and produces output. You don’t manage the steps. You run the command. This is categorically different from a “prompt template” you paste into ChatGPT — skills execute workflows, not instructions.

File system access across an entire project. On a local development workflow, Claude Code can read every HTML file on the site, find every application/ld+json block across all pages, check whether @id values are consistent, flag any schema that was added via JavaScript, and validate the @graph structure, all in a single pass without you touching anything. A browser-based chat session has no access to that scope.

The /loop command for ongoing monitoring. Entity authority isn’t a one-time project. Citation rates change as competitors build entity structure, as AI models update, and as new content shifts semantic neighborhoods. The /loop command runs any skill on a repeating schedule. An entity citation monitoring skill running weekly via /loop 7d /entity-citation-check gives you a standing alert system that ChatGPT has no equivalent for.

The CLAUDE.md Entity Context File

Before building any entity skills, you need the CLAUDE.md entity context file in your project. This is what makes every skill Claude-native rather than generic. Every session reads this file first, so the agent enters each workflow already knowing the full entity architecture.

Here is the entity section I add to the CLAUDE.md for every client engagement:

```markdown
# Entity Architecture

## Primary Organization Entity
- Legal Name: [Business Legal Name]
- DBA: [DBA name if different]
- @id: https://[domain]/#organization
- Type: LocalBusiness / Organization / ProfessionalService
- Founded: [year]
- License: [number and issuing body if applicable]

## Founder / Author Entity
- Full Name: [Name]
- @id: https://[domain]/#founder
- Role: [title]
- Expertise: [comma-separated topic domains]

## sameAs Chain (verified)
- Wikidata: https://www.wikidata.org/wiki/Q[number]
- LinkedIn Org: https://www.linkedin.com/company/[slug]
- LinkedIn Person: https://www.linkedin.com/in/[slug]
- Google Business: https://maps.app.goo.gl/[id]
- Facebook: https://www.facebook.com/[slug]
- Wikipedia: [URL or MISSING — needs Wikidata first]

## Service Entity @ids
- [Service 1 name]: https://[domain]/services/[slug]/#service
- [Service 2 name]: https://[domain]/services/[slug]/#service

## Semantic Neighborhood
Primary entities this site should co-occur with:
- [Entity 1 — e.g. "FHA 203(b) loan program"]
- [Entity 2 — e.g. "Arizona Department of Financial Institutions"]
- [Entity 3 — e.g. "Maricopa County"]

## Entity Gaps (not yet built)
- [ ] Wikidata entry for founder
- [ ] Industry directory citations (list specific ones)
- [ ] Wikipedia eligibility assessment

## Schema Implementation Status
- Homepage: @graph implemented [date]
- About page: @graph implemented [date]
- Service pages: [n] of [n] complete
```

When this file exists in the project, every skill that runs inherits this context automatically. You never type the @id, the Q-number, or the sameAs list again. The agent knows the entity graph and can validate every output it generates against it.
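If you want scripts outside Claude Code to reuse the same registry, the format is regular enough to parse. A minimal sketch that assumes the exact `## Section` and `- Label: value` layout of the template; the function name and the filled-in sample values are hypothetical.

```python
def parse_registry(markdown: str) -> dict[str, list[str]]:
    """Pull @id values and verified sameAs URLs out of the CLAUDE.md entity section."""
    sections: dict[str, list[str]] = {}
    current = None
    for raw_line in markdown.splitlines():
        line = raw_line.strip()
        if line.startswith("## "):
            current = line[3:].strip()
            sections[current] = []
        elif line.startswith("- ") and current is not None:
            sections[current].append(line[2:].strip())

    def url_values(items: list[str]) -> list[str]:
        # "Label: https://..." -> URL; entries like "MISSING" that are not URLs are skipped
        values = (item.partition(": ")[2] for item in items)
        return [v for v in values if v.startswith("http")]

    ids = [item[len("@id:"):].strip() for items in sections.values()
           for item in items if item.startswith("@id:")]
    return {
        "ids": ids + url_values(sections.get("Service Entity @ids", [])),
        "same_as": url_values(sections.get("sameAs Chain (verified)", [])),
    }


sample_registry = """# Entity Architecture
## Primary Organization Entity
- Legal Name: Example Mortgage LLC
- @id: https://yourdomain.com/#organization
## Founder / Author Entity
- Full Name: Jane Doe
- @id: https://yourdomain.com/#founder
## sameAs Chain (verified)
- Wikidata: https://www.wikidata.org/wiki/Q1234567
- LinkedIn Org: https://www.linkedin.com/company/yourbusiness
- Wikipedia: MISSING
## Service Entity @ids
- FHA Loans: https://yourdomain.com/services/fha-loans/#service
"""
print(parse_registry(sample_registry))
```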

The Claude Code Entity Workflow

Three skills do most of the entity SEO work. The key distinction from generic prompts: each one calls MCP tools to pull live data, references the CLAUDE.md entity context, and produces implementation-ready output rather than suggestions for you to act on manually.

Skill 1: Entity Audit with Live Ahrefs Data

Save this as .claude/skills/entity-audit.md:

# /entity-audit

Entity SEO audit using live site data. Reads CLAUDE.md entity context before starting.

## Step 1: Pull Top Pages from Ahrefs

Use the ahrefs site-explorer-top-pages MCP tool to retrieve the top 15 pages by organic traffic

for the domain in CLAUDE.md. This is the analysis scope — not pages I choose manually.

## Step 2: Fetch and Parse Each Page

For each page URL returned:

- Use WebFetch MCP to retrieve the live HTML

- Extract all text from main content areas (not nav, footer, sidebar)

- Extract the full contents of any application/ld+json script blocks

- Note whether JSON-LD is present in the initial HTML or appears to be JavaScript-injected

(JavaScript-injected schema will be absent from the fetched HTML — flag these as critical issues)

## Step 3: Entity Salience Analysis

For each page, analyze the extracted text content:

- Identify the primary entity (what real-world thing is this page about)

- List all secondary entities mentioned explicitly

- Check: does the primary entity appear in the H1?

- Check: does the primary entity appear in the first 100 words?

- Evaluate EAV coverage: are the entity's key attributes stated as explicit values, or implied?

- Flag pages where the primary entity from CLAUDE.md does not appear as the dominant entity

## Step 4: Schema Validation Against CLAUDE.md

For each page's JSON-LD:

- Check whether @id values match the permanent identifiers defined in CLAUDE.md

- Check whether Organization schema references the correct @id

- Check whether sameAs array includes all entries from CLAUDE.md sameAs chain

- Flag any @id values that differ from CLAUDE.md (these indicate broken entity connections)

- Flag any pages with no schema, missing @id, or missing sameAs

## Step 5: Competitor Entity Comparison

Use ahrefs site-explorer-organic-competitors MCP to identify the top 3 organic competitors.

For each competitor's homepage and top service page (via WebFetch):

- Extract their JSON-LD and note @id structure, sameAs chain depth, schema types present

- Note any entity signals they have that the CLAUDE.md site lacks

## Output Format

Table 1: Page-by-page entity clarity scores (Page | Primary Entity Clear? | Schema Present | @id Matches CLAUDE.md | sameAs Complete | Critical Issue)

Table 2: Competitor entity comparison (Competitor | Schema Types | sameAs Count | Wikidata Present | Advantage Over Client)

Priority action list: ordered by impact, referencing specific page URLs and specific fixes

Run it with: /entity-audit

The difference between this and a ChatGPT prompt: when you run /entity-audit, Claude Code calls the Ahrefs MCP to pull your actual top pages, fetches each one live via WebFetch, extracts and parses the real JSON-LD, and cross-references everything against your CLAUDE.md entity registry. You do not paste anything. The agent does the full workflow end to end. The output is a validated issue list tied to real URLs with specific fixes, not generic recommendations.
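If you want to spot-check the agent's schema findings outside Claude Code, the core of Steps 2 and 4 fits in a short Python script. This is a minimal sketch, not what Claude Code runs internally: it assumes you already have the raw server HTML (for example from curl, which, like the AI crawlers, does not execute JavaScript) and that your CLAUDE.md values are mirrored into a hypothetical `registry` dict with `organization_id` and `same_as` keys.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the raw text of every <script type="application/ld+json"> block."""

    def __init__(self):
        super().__init__()
        self.blocks = []
        self._buffer = None  # a list while inside a JSON-LD script, otherwise None

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").strip().lower()
        if tag == "script" and script_type == "application/ld+json":
            self._buffer = []

    def handle_data(self, data):
        if self._buffer is not None:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._buffer is not None:
            self.blocks.append("".join(self._buffer))
            self._buffer = None


def jsonld_nodes(html):
    """Return (nodes, malformed_count) from the JSON-LD in server-delivered HTML."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    nodes, malformed = [], 0
    for raw in parser.blocks:
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            malformed += 1
            continue
        for item in data if isinstance(data, list) else [data]:
            # A block is either a single node or a wrapper holding an @graph array.
            nodes.extend(item.get("@graph", [item]))
    return nodes, malformed


def audit_page(html, registry):
    """Compare one page's JSON-LD with a registry dict mirroring CLAUDE.md."""
    nodes, malformed = jsonld_nodes(html)
    issues = [f"{malformed} malformed JSON-LD block(s)"] if malformed else []
    if not nodes:
        return issues + ["no JSON-LD in initial HTML (missing or JavaScript-injected)"]
    org = next((n for n in nodes if n.get("@type") == "Organization"), None)
    if org is None:
        return issues + ["no Organization node"]
    if org.get("@id") != registry["organization_id"]:
        issues.append(f"Organization @id mismatch: {org.get('@id')!r}")
    same_as = org.get("sameAs", [])
    same_as = [same_as] if isinstance(same_as, str) else same_as
    missing = sorted(set(registry["same_as"]) - set(same_as))
    if missing:
        issues.append(f"sameAs missing: {', '.join(missing)}")
    return issues
```

An empty list from `audit_page(html, registry)` means the page's Organization node matches the registry; anything else maps directly to a row in Table 1.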

Skill 2: Entity Graph Builder with sameAs Validation

Save this as .claude/skills/entity-graph-builder.md:

# /entity-graph-builder

Generate a complete, validated JSON-LD entity graph. Reads CLAUDE.md for all entity data.

## Step 1: Confirm Entity Data

Read CLAUDE.md. List all entity fields present and flag any that are MISSING or marked incomplete.

Ask me to provide any missing required fields before proceeding. Do not generate partial schema.

## Step 2: Validate sameAs Targets Before Including Them

For each URL in the CLAUDE.md sameAs chain, use WebFetch MCP to confirm it:

- Returns a 200 status (not 404 or redirect to a different entity)

- Contains the entity name on the page (confirms it's the correct profile, not a deleted or reassigned URL)

Flag any sameAs URLs that fail validation. Do not include invalid URLs in the output schema.

## Step 3: Generate the @graph

Build the complete JSON-LD @graph block:

Organization node:

- @type, @id (from CLAUDE.md), name, url, foundingDate, description (one declarative sentence)

- address as PostalAddress

- contactPoint

- founder reference pointing to Person @id

- knowsAbout array (pull from CLAUDE.md expertise fields)

- areaServed (specific counties/states, not generic "nationwide")

- sameAs array (validated URLs only from Step 2)

Person node (founder):

- @type Person, @id (from CLAUDE.md)

- name, jobTitle, url pointing to the About page

- worksFor reference pointing to Organization @id

- knowsAbout array (founder's specific expertise domains)

- sameAs (founder's LinkedIn, Wikidata if present)

Service nodes (one per service in CLAUDE.md):

- @type Service, @id using permanent service URL format

- name, provider reference to Organization @id

- areaServed, description as a single declarative EAV sentence

- serviceType

## Step 4: @id Consistency Check

Verify every @id in the generated output matches exactly what is in CLAUDE.md.

List any discrepancies. The generated schema must match CLAUDE.md — if CLAUDE.md needs updating,

flag that explicitly rather than generating schema with different @id values.

## Step 5: Output and Update

Output the complete @graph JSON-LD block.

Then output an implementation checklist:

- Which pages need this script block added

- Whether any existing schema on those pages conflicts with the new @graph and needs to be replaced

- Whether any sameAs targets are flagged as missing and need to be created before the schema goes live

Run it with: /entity-graph-builder
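Steps 2 through 4 of this skill reduce to a few deterministic rules, which makes them easy to re-check by hand. The sketch below assumes a hypothetical `reg` dict mirroring the CLAUDE.md fields, and that the status code, final URL, and page body for each sameAs target come from whatever fetcher you use; it applies the redirect rule conservatively by rejecting any redirect. Address, contactPoint, and foundingDate are left out to keep it short.

```python
import json


def same_as_ok(status, requested_url, final_url, body, entity_name):
    """Step 2 rule, applied conservatively: 200 status, no redirect, entity name on the page."""
    return (
        status == 200
        and final_url.rstrip("/") == requested_url.rstrip("/")
        and entity_name.lower() in body.lower()
    )


def build_graph(reg, valid_same_as):
    """Assemble Organization, Person, and Service nodes linked only by registry @ids."""
    org_id, person_id = reg["org"]["id"], reg["founder"]["id"]
    organization = {
        "@type": "Organization",
        "@id": org_id,
        "name": reg["org"]["name"],
        "url": reg["org"]["url"],
        "description": reg["org"]["description"],
        "founder": {"@id": person_id},
        "knowsAbout": reg["org"]["knows_about"],
        "areaServed": reg["area_served"],
        "sameAs": [u for u in reg["org"]["same_as"] if u in valid_same_as],
    }
    founder = {
        "@type": "Person",
        "@id": person_id,
        "name": reg["founder"]["name"],
        "jobTitle": reg["founder"]["job_title"],
        "url": reg["founder"]["about_url"],
        "worksFor": {"@id": org_id},
        "sameAs": [u for u in reg["founder"]["same_as"] if u in valid_same_as],
    }
    services = [
        {
            "@type": "Service",
            "@id": s["id"],
            "name": s["name"],
            "serviceType": s["service_type"],
            "description": s["description"],
            "provider": {"@id": org_id},
            "areaServed": reg["area_served"],
        }
        for s in reg["services"]
    ]
    return {"@context": "https://schema.org", "@graph": [organization, founder, *services]}


def id_mismatches(graph, reg):
    """Step 4: list any @id in the output that the registry does not define."""
    expected = {reg["org"]["id"], reg["founder"]["id"]} | {s["id"] for s in reg["services"]}
    return sorted(node["@id"] for node in graph["@graph"] if node["@id"] not in expected)


def to_script_tag(graph):
    """Serialize for server-side output in the initial HTML."""
    # Escaping "</" as "<\/" (valid JSON) keeps a stray "</script>" in a value from closing the tag.
    body = json.dumps(graph, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + body + "\n</script>"
```

Cross-node references use `{"@id": ...}` objects rather than repeated data, so changing a registry @id surfaces immediately in `id_mismatches` instead of silently forking the graph.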

Skill 3: EAV Content Audit and Rewrite

Save this as .claude/skills/eav-rewriter.md:

# /eav-rewriter

Audit and rewrite page content using EAV structure. Reads CLAUDE.md for entity context.

Usage: /eav-rewriter [URL or paste content below]

## Step 1: Retrieve Content

If a URL is provided, use WebFetch MCP to retrieve the live page.

Extract only the main content body — exclude navigation, footer, sidebar, and schema blocks.

## Step 2: Entity Salience Pre-Analysis

Before rewriting, analyze the existing content:

- What is the primary entity? Does it match the primary organization entity in CLAUDE.md?

- What salience level does the primary entity likely hold? (Estimate: high/medium/low)

- How many competing entities are present that dilute salience?

- Are attribute statements explicit (EAV triples) or implied?

- Are predicates strong (is, provides, serves, processes) or weak (helps with, offers solutions, works to)?

## Step 3: Rewrite Rules

Apply all of these to the rewritten output:

1. Primary entity name appears in the first sentence. Not "we" or "our company."

2. Every H2 is formatted as a direct question the target reader would type into Google.

3. The first 40 words after each H2 answer the question directly and stand alone without surrounding context.

4. Every attribute claim is an explicit EAV triple: [Entity] [strong predicate] [specific value].

Convert: "We help homebuyers with financing" to "[Business] originates FHA and VA loans for [specific buyer type] in [specific geography]."

5. Remove all filler sentences that state no attribute. Every sentence carries a data point.

6. Use the entity name explicitly at the start of each new section rather than a pronoun.

7. Surface rare and unique attributes from CLAUDE.md that competitors would not have.

## Step 4: Gap Report

After the rewritten content, output:

- Attributes from CLAUDE.md that should appear on this page but are currently absent

- External entities from the CLAUDE.md semantic neighborhood that should be mentioned but are missing

- Whether the current H1 and meta description reflect the primary entity clearly

Run it with: /eav-rewriter https://yourdomain.com/services/fha-loans/

Because this skill uses WebFetch MCP to pull the live page, you never copy and paste content. Point it at a URL and it retrieves, analyzes, and rewrites from the live HTML. Run it across an entire service page library by looping through a URL list in a single session.
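Several of the Step 3 rewrite rules are mechanical enough to lint before and after a rewrite. A rough sketch, assuming the page has already been split into `(h2, body)` pairs; the weak-predicate list is only the three examples named in the skill, the 40-word check on the first sentence is a proxy for rule 3 (it cannot judge whether the answer is direct), and "Desert Home Lending" is a hypothetical business name.

```python
import re

# Only the weak predicates named in the skill; extend for your own niche.
WEAK_PREDICATES = ("helps with", "offers solutions", "works to")


def eav_lint(sections, entity_name, max_answer_words=40):
    """sections: list of (h2_text, body_text). Returns issues for rules 2, 3, 4, and 6."""
    issues = []
    for h2, body in sections:
        # Split off the first sentence at the first ., !, or ? followed by whitespace.
        first = re.split(r"(?<=[.!?])\s+", body.strip(), maxsplit=1)[0]
        if not h2.strip().endswith("?"):
            issues.append(f"{h2}: heading is not a question")
        if entity_name.lower() not in first.lower():
            issues.append(f"{h2}: entity name missing from first sentence")
        if len(first.split()) > max_answer_words:
            issues.append(f"{h2}: first sentence exceeds {max_answer_words} words")
        for phrase in WEAK_PREDICATES:
            if phrase in body.lower():
                issues.append(f"{h2}: weak predicate '{phrase}'")
    return issues
```

For example, `eav_lint([("Who does Desert Home Lending serve?", "Desert Home Lending originates FHA and VA loans for first-time buyers in Maricopa County.")], "Desert Home Lending")` returns an empty list, while a section headed "Our approach" that says "Our team helps with financing." is flagged on three rules.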

Skill 4: Entity Citation Monitor via Ahrefs Brand Radar

This is the workflow that separates an ongoing entity SEO program from a one-time implementation. Save this as .claude/skills/entity-citation-check.md:

# /entity-citation-check

Weekly entity citation monitoring. Reads CLAUDE.md for entity and competitor context.

## Step 1: Brand Radar Pull

Use the ahrefs brand-radar-mentions-overview MCP tool to retrieve AI mention data for the primary domain in CLAUDE.md. Pull the last 30 days.

## Step 2: Competitor Citation Comparison

Use ahrefs brand-radar-mentions-overview for each competitor listed in CLAUDE.md.

Compare mention counts and platforms between the client and each competitor.

## Step 3: Citation Gap Analysis

For any competitor with significantly more AI citations:

- Use ahrefs brand-radar-cited-pages MCP to see which specific pages of theirs are being cited

- Use WebFetch to retrieve those pages and identify what entity signals they have that the client lacks (schema types, sameAs depth, EAV content structure, Wikidata presence)

## Step 4: Weekly Report

Output a brief report:

- Client AI mentions this period vs. last period (trend)

- Client vs. top competitor citation comparison

- Top cited page for client (if available)

- Top cited page for competitor

- One specific action item based on the gap identified this week

Flag if any new sameAs targets should be added to CLAUDE.md based on sources that appear in competitor citations.

Set this to run automatically with the /loop command:

/loop 7d /entity-citation-check

Every seven days, Claude Code pulls fresh Brand Radar data from Ahrefs, compares your citation counts against competitors, fetches the competitor pages that are winning citations, and produces a gap report with one specific action item. No manual data pull, no dashboard login, no spreadsheet. The agent runs the full analysis and surfaces only what changed.

This is the part of entity SEO that most practitioners skip because it requires ongoing effort with no clear trigger. A scheduled Claude Code skill removes that friction entirely. You get a weekly report without doing anything to generate it.
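The arithmetic behind the weekly report is simple enough to verify yourself. A minimal sketch, assuming you have already pulled mention counts for two equal periods from Brand Radar into plain integers (the MCP response shape is not shown here), and using a hypothetical 1.5x ratio to stand in for "significantly more AI citations" in Step 3.

```python
def citation_report(client, competitors, gap_ratio=1.5):
    """client: {"current": n, "previous": n} mention counts for two equal periods.
    competitors: {name: current mention count}. gap_ratio is a hypothetical
    cut-off for 'significantly more AI citations'."""
    previous = client["previous"]
    # Percent change; None when there is no previous baseline to compare against.
    trend_pct = (client["current"] - previous) * 100 / previous if previous else None
    threshold = gap_ratio * max(client["current"], 1)
    investigate = sorted(
        (name for name, count in competitors.items() if count >= threshold),
        key=lambda name: competitors[name],
        reverse=True,
    )
    top = max(competitors, key=competitors.get) if competitors else None
    return {"trend_pct": trend_pct, "top_competitor": top, "investigate": investigate}
```

With 12 mentions this period against 10 last period, and competitors at 30, 14, and 18, the trend is +20% and the two competitors at or above 18 mentions are queued for the Step 3 page comparison.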

The Ghost Citation Problem (And Why Entity Structure Fixes It)

There’s a pattern I’ve started tracking across AI citation monitoring that I call ghost citations: AI systems that use your content to construct an answer but credit a competitor or an aggregator site instead of you.

This happens because AI citation systems don’t just ask “where did I find this information?” They ask “what is the most authoritative, clearly identified entity associated with this information?” When your content is factually useful but your entity is weakly defined, the system finds the information in your content but attributes it to a more clearly structured entity in the same semantic neighborhood.

A concrete example: you publish a guide about FHA loan requirements in Arizona. The information is accurate and well-written. But your organization schema is incomplete, your About page doesn’t clearly establish the entity, and your sameAs chain only has a LinkedIn URL. Meanwhile, a competing lender has a fully built entity graph, a Wikidata entry, complete service schema, and an entity home with clear attribute coverage. When Claude or Perplexity answers a query about Arizona FHA loans, both sites’ content may be in the training data or retrieval index. The competitor gets cited because the system can confidently resolve who they are. You get used but not credited.

Entity structure is how you claim credit for content you’ve already written.

The specific trust signals AI systems look for before citing a source: verifiable author or organization entity (named, schema-identified, with external corroboration), technical accuracy (factual statements that can be cross-referenced), formal or authoritative tone, and explicit source citations within the content itself. Claude’s Constitutional AI framework creates a particularly strong bias toward technically precise, clearly attributed sources. If your content reads like it was written by an identified expert with cited evidence, Claude is more likely to cite it than to use it silently.

Implementation Roadmap: Three Phases Over 12 Weeks

Entity authority does not build overnight. The lag between implementing entity signals and seeing measurable AI citation impact is real: retrieval-based systems can respond within weeks of a recrawl, but citations that depend on LLM training data typically take 6 to 12 months, because they wait on both crawler reprocessing and the cycle time for training data updates. Starting the foundation work now produces results that take competitors months or years to replicate.

Phase 1, Weeks 1 to 4: Entity Foundation

Run /entity-audit on your site. Fix the entity home page first. Rebuild your About page as a proper entity home with full EAV coverage of all primary attributes. Implement the complete @graph JSON-LD on the homepage and About page. Create or claim your Wikidata entry for the organization and founder if they don’t exist. Add all available sameAs references to your schema. Verify that no structured data is JavaScript-injected; everything should be server-side in the initial HTML response.

Phase 2, Weeks 5 to 8: Content Architecture

Run /eav-rewriter on your top 10 traffic pages. Prioritize any page that appears in your entity audit with low entity clarity scores. Restructure each service page with proper H2 question headings and 40-word extractive answers under each one. Add Service schema to each service page with @id and provider references back to Organization. Audit your internal linking structure to ensure entity relationships are mirrored in your link graph: every service page linking to the entity home, every article linking to its parent service topic, all cross-links using explicit descriptive anchor text that references entity attributes.
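The linking rules above can be checked against a crawl export. A sketch under assumptions: `links` maps each page to `(href, anchor_text)` pairs from your crawler, URLs are normalized the same way everywhere, and the `GENERIC_ANCHORS` set is a hypothetical starting list of non-descriptive anchors.

```python
# Hypothetical starter list of anchors that say nothing about the target entity.
GENERIC_ANCHORS = {"click here", "here", "read more", "learn more"}


def link_gaps(links, entity_home, service_pages, article_parent):
    """links: {page: [(href, anchor_text), ...]}; article_parent: {article: parent_service}."""
    def targets(page):
        return {href for href, _ in links.get(page, [])}

    gaps = []
    for page in service_pages:
        if entity_home not in targets(page):
            gaps.append(f"{page} does not link to the entity home")
    for article, parent in article_parent.items():
        if parent not in targets(article):
            gaps.append(f"{article} does not link to its parent service {parent}")
    for page, page_links in links.items():
        for href, anchor in page_links:
            if anchor.strip().lower() in GENERIC_ANCHORS:
                gaps.append(f"{page} -> {href} uses generic anchor text '{anchor}'")
    return gaps
```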

Phase 3, Weeks 9 to 12: Authority Building

Begin systematic external entity corroboration. Submit your organization to relevant industry directories that are already in your semantic neighborhood. Pursue citation coverage from publications that frequently appear in AI answers within your topic domain. Track AI citation rates using Ahrefs Brand Radar or a manual prompting protocol across Claude, ChatGPT, and Perplexity for your target queries. Refresh any content last updated more than 30 days ago and request reindexing in Google Search Console. Content updated within the last 30 days receives 3.2x higher citation rates in AI-generated answers than older content.
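To build the refresh list, you can read `lastmod` values from your XML sitemap. A minimal sketch, assuming a standard sitemaps.org urlset and that your CMS updates `lastmod` only when content actually changes; pages without a `lastmod` are treated as needing review. Reindexing requests themselves still go through URL Inspection in Search Console.

```python
import xml.etree.ElementTree as ET
from datetime import date, timedelta

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def stale_urls(sitemap_xml, today=None, max_age_days=30):
    """Return sitemap URLs whose lastmod is older than max_age_days, or missing."""
    today = today or date.today()
    cutoff = today - timedelta(days=max_age_days)
    stale = []
    for url in ET.fromstring(sitemap_xml).findall("sm:url", SITEMAP_NS):
        loc = url.findtext("sm:loc", default="", namespaces=SITEMAP_NS).strip()
        lastmod = url.findtext("sm:lastmod", default="", namespaces=SITEMAP_NS).strip()
        # lastmod uses W3C datetime; the first 10 characters are the YYYY-MM-DD date.
        if not lastmod or date.fromisoformat(lastmod[:10]) < cutoff:
            stale.append(loc)
    return stale
```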

The technical work in Phases 1 and 2 is largely a one-time investment that compounds indefinitely. Phase 3 is ongoing but builds on a foundation that most competitors will never fully establish.

For a deeper look at how internal linking structure supports this entity graph, the AI internal linking guide covers the Ahrefs MCP workflows in detail. For connecting this entity work to your broader AI citation strategy, the LLM visibility guide covers cross-platform monitoring. The topical authority mapping guide covers the parallel architecture — how your entity’s semantic neighborhood gets built through systematic topical coverage using Koray’s five-component framework and three Claude Code skills that run on live Ahrefs and GSC data. Once your entity map is in place, the next step is measuring which entity relationships your content is missing compared to what ranks above you — that is what the entity co-occurrence gap analysis workflow covers.

Frequently Asked Questions

What is the difference between keyword SEO and entity SEO?

Keyword SEO optimizes content to match character sequences in search queries. Entity SEO optimizes content and structured data to match the real-world things those queries refer to. Google’s ranking systems now use both: keyword signals for initial retrieval, entity signals for authority and relevance scoring. The distinction matters in practice because entity optimization requires explicit declarative statements, complete structured data, and external corroboration — not just keyword placement. A page optimized for keywords can rank for a query. A page optimized for entity authority gets cited in AI answers for that topic category across multiple platforms.

How do I check whether my content has good entity salience?

Use Google’s Natural Language API demo tool, which is free and requires no account setup. Paste your page’s full text content (without HTML) into the demo and open the Entities tab. The output shows every entity Google’s NLP parser identifies, its type classification, and its salience score from 0 to 1. Your primary entity should hold the highest salience score on the page, ideally above 0.4. If your business name scores below 0.1 on your own About page or service pages, that’s a direct signal of weak entity structure. Compare your salience distribution against a top-ranking competitor in your space to see the gap you need to close.
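The same check can be scripted with the Cloud Natural Language API's `analyze_entities` method, which returns the salience scores the demo displays. A sketch, assuming the `google-cloud-language` package and configured Google Cloud credentials for the fetch step; the 0.4 and 0.1 thresholds are the rules of thumb from this answer, and matching on the exact entity name is a simplification.

```python
def fetch_salience(text):
    """Entity name -> salience from the Cloud Natural Language API.
    Requires the google-cloud-language package and configured credentials."""
    from google.cloud import language_v1  # lazy import: the checker below runs without it

    client = language_v1.LanguageServiceClient()
    document = language_v1.Document(content=text, type_=language_v1.Document.Type.PLAIN_TEXT)
    response = client.analyze_entities(request={"document": document})
    scores = {}
    for entity in response.entities:
        # The same name can be returned more than once; keep the highest salience.
        scores[entity.name] = max(scores.get(entity.name, 0.0), entity.salience)
    return scores


def salience_verdict(scores, primary, target=0.4, floor=0.1):
    """'strong' if the primary entity ranks first and meets target; 'weak' below floor."""
    score = scores.get(primary, 0.0)
    if score < floor:
        return "weak"
    top = max(scores, key=scores.get)
    if top == primary and score >= target:
        return "strong"
    return "needs work"
```

Usage: `salience_verdict(fetch_salience(page_text), "Desert Home Lending")`, where the business name is a hypothetical example.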

Do I need a Wikipedia page to build entity authority?

Wikipedia helps but is not required, and pursuing a Wikipedia page before meeting notability requirements is counterproductive. The more accessible path is Wikidata. Wikidata’s notability bar is much lower than Wikipedia’s: an item qualifies if it describes a clearly identifiable entity that can be documented with serious, publicly available references. Because Google’s Knowledge Graph draws on Wikidata, a well-documented Wikidata entry with properly referenced statements provides much of the same Knowledge Graph benefit as a Wikipedia article. Focus on Wikidata first; pursue Wikipedia only when you have verifiable third-party coverage that meets its guidelines.
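Before creating a Wikidata item, check whether one already exists for your organization or founder. The sketch below queries the public `wbsearchentities` action of the Wikidata API and returns candidate Q-numbers; the User-Agent string is a placeholder you should replace with your own contact details, as Wikimedia's API etiquette asks.

```python
import json
from urllib.parse import urlencode
from urllib.request import Request, urlopen

WIKIDATA_API = "https://www.wikidata.org/w/api.php"


def search_url(name, language="en"):
    """Build a wbsearchentities query for items whose label or alias matches name."""
    params = {"action": "wbsearchentities", "search": name, "language": language,
              "type": "item", "format": "json"}
    return f"{WIKIDATA_API}?{urlencode(params)}"


def candidates(response):
    """Parse a wbsearchentities JSON response into (Q-number, label, description) tuples."""
    return [(hit["id"], hit.get("label", ""), hit.get("description", ""))
            for hit in response.get("search", [])]


def search_wikidata(name):
    # Placeholder User-Agent: replace with your tool name and a real contact address.
    request = Request(search_url(name), headers={"User-Agent": "entity-audit-sketch/0.1 (you@example.com)"})
    with urlopen(request, timeout=10) as resp:
        return candidates(json.load(resp))
```

An empty result from `search_wikidata("Your Business Name")` suggests no item exists yet; a match still needs a manual check that the description really refers to your entity.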

How long does it take for entity schema changes to affect AI citation rates?

Schema changes become visible to AI systems once they crawl your updated pages, which typically takes days to weeks for major systems. The impact on AI citation rates depends on two separate cycles: the crawl cycle (fast) and the model training or retrieval index update cycle (slow). For retrieval-based systems like Perplexity that run live web searches, improvements can show up within days to weeks of your pages being recrawled. For training-data-based citation (what Claude “knows” at a base level), the lag is 6 to 12 months. This is why the work must start now. The competitive window for establishing entity authority in most local and B2B niches is open, but it closes as more practitioners implement these systems.

What is the most common mistake in entity schema implementation?

Using JavaScript injection to add schema markup. AI crawlers including ClaudeBot, GPTBot, and PerplexityBot cannot execute JavaScript. Any structured data added via tag manager, JavaScript injection, or client-side rendering is invisible to these systems. The application/ld+json script block must be in the HTML delivered by the server on the initial page load. The second most common mistake is setting @id values and then changing them. The @id is a permanent entity identifier. Changing it severs every cross-page entity connection built around the original value. Treat @id as permanent from the moment you set it.

Can entity SEO work for local service businesses, or is it mainly for large brands?

Entity SEO is particularly high-impact for local service businesses precisely because the competition for entity authority in most local markets is nearly zero. Large national brands have entity recognition by default due to their scale. Local businesses have to build it deliberately, but the bar for achieving Knowledge Graph inclusion in a local context is far lower than for national brand recognition. A well-documented Wikidata entry, complete Organization schema with local address and service area markup, and consistent NAP (name, address, phone) corroboration across directories can establish meaningful entity authority for a local mortgage broker or fence company within a few months. The techniques in this guide apply directly to local businesses, and the client examples throughout are drawn from exactly this context.
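NAP consistency is easy to check once listings are normalized. A minimal sketch, assuming North American 10-digit phone numbers and a small, hypothetical abbreviation map; real address normalization needs a fuller list, so treat mismatches as items to review rather than confirmed errors.

```python
import re

# Hypothetical starter map; extend it for the abbreviations your listings actually use.
ABBREVIATIONS = {"street": "st", "avenue": "ave", "road": "rd", "suite": "ste", "boulevard": "blvd"}


def _clean(text):
    """Lowercase, turn punctuation into spaces, and collapse common street abbreviations."""
    words = re.sub(r"[^\w\s]", " ", text.lower()).split()
    return " ".join(ABBREVIATIONS.get(word, word) for word in words)


def normalize_nap(name, address, phone):
    # Keep the last 10 digits so "+1 (602) 555-0100" equals "602-555-0100".
    return _clean(name), _clean(address), re.sub(r"\D", "", phone)[-10:]


def nap_mismatches(canonical, listings):
    """Return the listing sources whose normalized NAP differs from the canonical one."""
    target = normalize_nap(*canonical)
    return [source for source, nap in listings.items() if normalize_nap(*nap) != target]
```

Here "123 Main Street, Suite 200" and "123 Main St. Ste 200" normalize to the same value, while "Desert Home Lending LLC" (a hypothetical listing) is flagged against "Desert Home Lending".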

How does entity SEO interact with topical authority building?

They are two parts of the same system. Topical authority (covering a topic domain completely with interlinked content) builds the semantic neighborhood your entity lives in. Entity SEO (building the machine-readable graph that identifies and connects your entities) builds the machine’s confidence that your entity belongs in that neighborhood and deserves to be cited for queries within it. Topical authority without entity structure gives you ranking potential without citation authority. Entity structure without topical coverage gives you AI credibility without the content surface that gets retrieved. Both are required. The order of operations: establish entity foundation first, then build topical coverage with EAV-structured content that consistently references the established entity graph.