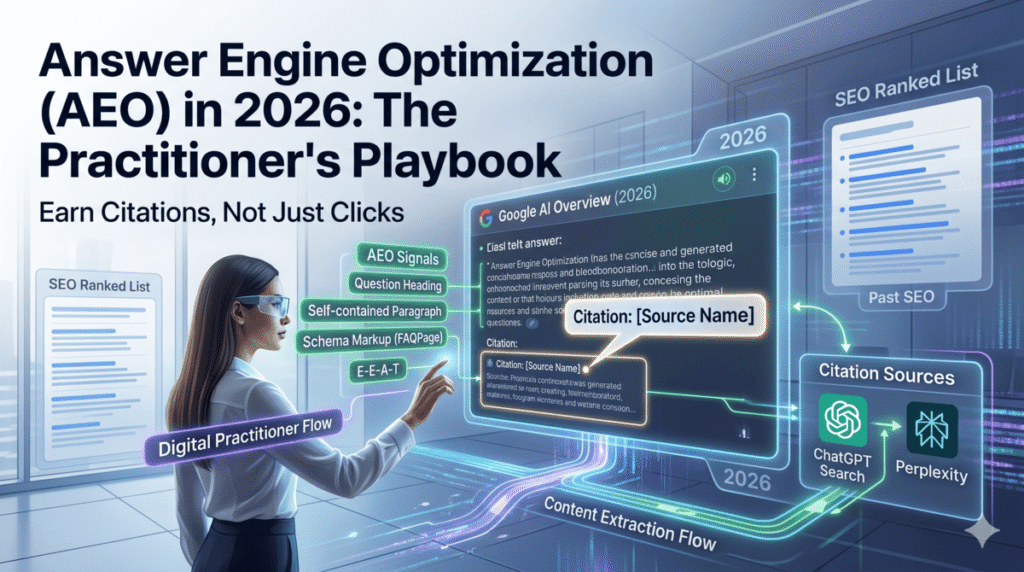

Answer engine optimization (AEO) is the practice of structuring your content so that AI systems can extract, cite, and surface it directly inside generated answers. Unlike traditional SEO, which earns you a position in a ranked list and hopes someone clicks, AEO earns you a citation inside the answer itself. In 2026, Google AI Overviews appear on 50 to 60 percent of U.S. searches. ChatGPT processes 2.5 billion prompts per day. Zero-click searches account for 60 percent of all Google queries. If your content isn’t structured for extraction, you lose ground even when you rank. This guide covers the exact citation signals AI systems look for, how those signals differ across Google, ChatGPT, and Perplexity, and the Claude-assisted workflow I use to audit and reformat pages for AEO readiness in under 30 minutes.

What does answer engine optimization actually mean in 2026?

AEO is not a replacement for SEO. It’s a layer on top of it. You still need to rank. You still need backlinks, technical health, and topical authority. But ranking alone no longer guarantees visibility, because users often get their answer before they ever see your listing.

Here’s the distinction that matters: SEO earns you a position in a list. AEO earns you a citation inside the answer. Those are different goals, and they require different content structures.

GEO (generative engine optimization) is often used interchangeably with AEO, but there’s a useful distinction. GEO is the broader strategy of getting cited across all generative AI platforms. AEO tends to refer specifically to optimizing for answer-driven queries, particularly inside Google AI Overviews and similar structured response formats. The techniques overlap substantially. If you want the broader breakdown of GEO strategy, the GEO audit guide I published earlier this month covers that in full. This post focuses specifically on the content-level signals that determine whether you get cited, and the workflow to fix the ones holding your pages back.

Why does AEO matter more right now than at any point before?

The data on this is hard to argue with. When a Google AI Overview appears for a query, organic CTR for uncited sites drops to 0.52 percent, a 65 percent year-over-year decline. But sites cited within the AI Overview get a 35 percent CTR boost compared to uncited competitors sitting at the same ranking position. Being cited inside an AI Overview is now more valuable than holding the number one organic position without a citation.

That changes the math completely. You’re not optimizing just to rank first. You’re optimizing to be selected by an AI system that reads your page the way a researcher reads a source: scanning for clear, attributed, extractable claims it can use directly.

There’s another stat that should change how you think about strategy. Google AI Overview citations from top-10 organic results dropped from 76 percent to 38 percent between 2024 and 2026. That leaves 62 percent of AI Overview citations, well over half, coming from pages ranking outside the top 10. AEO success and traditional SEO success are increasingly decoupled. A page at position eight with strong AEO signals has a real shot at an AI Overview citation ahead of the page sitting at position one that never restructured for extractability.

The window to establish citation authority is open right now. By late 2027, Gartner projects that 25 percent of organic search traffic will shift to AI chatbots and voice assistants. The brands that get their AEO fundamentals in place in 2026 will compound that advantage in ways that late movers can’t match.

What signals do AI systems use to decide what to cite?

Research across 15,847 AI Overview results found that content scoring above 8.5 out of 10 for semantic completeness is 4.2 times more likely to be cited than content scoring below 6.0. Pages with proper schema markup are cited 3 times more often than pages without it. And 65 percent of pages cited by Google AI Mode include structured data markup.

Five citation signals account for most of that gap. Here’s how each one actually works.

Self-contained answer structure

AI systems extract at the paragraph level, not the page level. They pull a specific block of text that answers the query and either cite it or use it to construct the answer. If your opening section requires the reader to know context from a previous paragraph to understand it, an AI system can’t use it in isolation. Every key claim needs to stand on its own without resolving references to “above” or “the following section.”

Question-format headings

AI Overviews trigger frequently on question-format queries. When your H2s and H3s mirror the phrasing of those queries, you signal to the AI that this specific section directly addresses this specific question. “How do you optimize for Google AI Overviews?” performs better as a heading than “Optimization strategies,” even when the content underneath is identical. The match between the query and the heading is part of the citation selection logic.
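A rough way to check this across a page is to flag headings that don’t read as questions. The sketch below is a heuristic of my own, not Google’s selection logic: it treats a heading as question-format if it ends with a question mark and opens with a common question word. The function name and the opener list are assumptions you should adjust for your own content.

```python
# Heuristic only: the opener list and function name are illustrative assumptions.
QUESTION_OPENERS = {
    "how", "what", "why", "when", "where", "which", "who",
    "does", "do", "is", "are", "can", "should", "will",
}


def is_question_heading(heading: str) -> bool:
    """Return True if the heading looks like a full question a searcher might type."""
    text = heading.strip()
    if not text.endswith("?"):
        return False
    words = text.split()
    first_word = words[0].lower() if words else ""
    return first_word in QUESTION_OPENERS


headings = [
    "How do you optimize for Google AI Overviews?",
    "Optimization strategies",
]
# Collect the headings that would need rewriting as questions.
non_question = [h for h in headings if not is_question_heading(h)]
print(non_question)
```

Anything the check flags is a candidate for rewriting, but a human still needs to confirm the question matches how searchers actually phrase the query.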

Information gain

The March 2026 Core Update made this explicit. Google now measures how much genuinely new information a page contributes compared to what already ranks. If your content synthesizes the same claims already in the top 10, it provides no information gain and scores lower on this signal. AI systems prefer citing sources that add something to the conversation: original data, firsthand case studies, specific numbers from real work, or analysis that changes how you think about a topic. Generic posts don’t get cited. Specific, experience-backed posts do.

Schema markup coverage

FAQPage, HowTo, Article, and SpeakableSpecification are the four schema types with the clearest relationship to AI Overview citation. SpeakableSpecification is the most underused of the four. It explicitly tells AI systems which sections of your page are designed for voice or AI extraction. Implementing it on your primary answer blocks is a direct, low-competition signal that most sites haven’t added yet.

E-E-A-T authority signals

Named authorship with credentials, external citations to primary sources, and first-person experience language all indicate to AI systems that a real practitioner with actual knowledge produced this content. “When I audited a 400-page site for information gain last quarter, the pages with the lowest scores all had the same structural problem” carries more authority than “many SEOs have found that content quality matters.” The specificity is the signal.

How do citation patterns differ across Google, ChatGPT, and Perplexity?

Most AEO guides treat all AI systems as if they use the same citation logic. They don’t. Each platform has a distinct signal set, and optimizing for one without considering the others leaves significant citation surface unclaimed. Here’s what the data shows.

Google AI Overviews

Google still skews toward established domains with organic ranking signals, but the correlation weakened significantly in 2026. You need structured data, clear E-E-A-T signals, and answer-first formatting. Content freshness matters, but the window is longer here than on the other platforms: content updated within the last six months can still rank, and content updated within the last 90 days gets a recency boost. The AI Overview content for any given query changes approximately 70 percent of the time when you check it on different days, which means citations rotate. Maintaining fresh content keeps you in the rotation longer.

ChatGPT Search

ChatGPT cites Wikipedia at a rate of 47.9 percent for factual queries, followed by editorial sites and established news sources. The freshness window here is sharper than on Google: 50 percent of content cited in ChatGPT answers is less than 13 weeks old. If you haven’t updated a post in six months and you’re targeting ChatGPT citations, you’re working against a recency filter that authority signals alone can’t overcome. ChatGPT also responds well to named authorship and institutional credibility markers. A post bylined to a named practitioner with a clear area of expertise tends to outperform unsigned content even when the content itself is equivalent.

Perplexity

Perplexity’s citation pattern is the most distinctive of the three: 46.7 percent of its citations come from Reddit. That reflects what Perplexity is optimizing for: direct, conversational, experience-based content from real people. For brand content to compete, it needs to match that register: direct answers, specific details, first-person framing, and zero marketing language. Perplexity also has a strong 90-day freshness preference. Content published more than three months ago appears far less frequently in its citations than in Google AI Overviews. If Perplexity is a meaningful traffic source for your audience, publishing velocity and content refresh cadence matter more than domain authority.
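To turn those freshness windows into a refresh checklist, here’s a minimal sketch that reports which platforms a page has aged out of. The day counts come from the figures above. Treating them as hard thresholds is my simplification, since none of the platforms publish a rule like this, and the dictionary keys and function name are hypothetical.

```python
from datetime import date

# Windows in days, taken from the figures quoted above. Treating them as
# simple thresholds is an assumption; the platforms publish no such rule.
FRESHNESS_WINDOW_DAYS = {
    "google_ai_overviews": 90,  # recency boost window
    "chatgpt_search": 13 * 7,   # 13 weeks
    "perplexity": 90,
}


def stale_platforms(last_updated: date, today: date) -> list[str]:
    """List the platforms whose freshness window the page has fallen out of."""
    age_days = (today - last_updated).days
    return [
        name
        for name, window in FRESHNESS_WINDOW_DAYS.items()
        if age_days > window
    ]


# A page last updated 91 days before the check date.
print(stale_platforms(date(2026, 1, 1), date(2026, 4, 2)))
```

Passing `today` in explicitly, rather than calling `date.today()` inside the function, keeps the check repeatable when you run it over an exported list of pages.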

How do you run an AEO audit with Claude Code in under 30 minutes?

I’ve been running this audit for client pages since February and it consistently surfaces the same categories of problems: buried answers, self-referential paragraphs, and missing schema on pages that otherwise rank well. Here’s the exact three-step workflow.

Step 1: Calculate your AEO Readiness Score

Before rewriting anything, you need a baseline. The AEO Readiness Score is a 10-point framework built on five criteria drawn from the citation signals above. Give each criterion 0 to 2 points based on how well the page satisfies it, then total the score.

- Self-contained answer in the first 200 words: 0 to 2 points

- Question-format H2s and H3s: 0 to 2 points

- Extractable paragraph structure, meaning each paragraph stands alone: 0 to 2 points

- Schema markup including FAQPage, HowTo, Article, or SpeakableSpecification: 0 to 2 points

- Information gain, including original data, firsthand experience, or analysis not in the top 10: 0 to 2 points

Score interpretation: 8 to 10 means the page is AEO-ready, so submit it for an indexing refresh in Google Search Console and move on. A score of 5 to 7 means citation-eligible with targeted fixes, and you should focus on the lowest-scoring criterion first. A score of 0 to 4 means a rebuild is needed before chasing AI citations on that page.
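If you track scores in a spreadsheet or script, the arithmetic above can be sketched as follows. The criterion keys and the function name are hypothetical labels for the five criteria, and the tier boundaries are the ones from the score interpretation.

```python
# Hypothetical keys for the five criteria in the framework above.
CRITERIA = (
    "self_contained_answer",
    "question_headings",
    "extractable_paragraphs",
    "schema_markup",
    "information_gain",
)


def aeo_readiness(scores: dict[str, int]) -> tuple[int, str]:
    """Total the five 0-to-2 criterion scores and map the total to a tier."""
    for name in CRITERIA:
        if scores.get(name) not in (0, 1, 2):
            raise ValueError(f"{name} must be scored 0, 1, or 2")
    total = sum(scores[name] for name in CRITERIA)
    if total >= 8:
        tier = "AEO-ready"
    elif total >= 5:
        tier = "citation-eligible with targeted fixes"
    else:
        tier = "rebuild needed"
    return total, tier


page_scores = {
    "self_contained_answer": 2,
    "question_headings": 1,
    "extractable_paragraphs": 1,
    "schema_markup": 0,
    "information_gain": 2,
}
total, tier = aeo_readiness(page_scores)
# The lowest-scoring criterion is the one to fix first.
weakest = min(CRITERIA, key=page_scores.get)
print(total, tier, weakest)
```

In this example the page totals 6, lands in the targeted-fixes tier, and schema markup is the first thing to address.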

Use this Claude prompt to run the scoring on any page:

You are an AEO auditor. I’m going to paste content from a page below. Score it from 0 to 10 using this exact framework, giving 0 to 2 points per criterion:

1. Self-contained answer in first 200 words: does the opening immediately answer the primary query without requiring the reader to scroll for context?

2. Question-format headings: are H2s and H3s written as full questions a searcher would actually type?

3. Extractable paragraph structure: can any paragraph stand alone as a complete, citable answer without referencing content above or below it?

4. Schema coverage: does the page have FAQPage, HowTo, Article, or SpeakableSpecification schema implemented?

5. Information gain: does the page include original data, firsthand experience, or analysis not found in standard top-10 results for this topic?

After scoring, identify the three highest-impact changes to improve the score. Then rewrite the opening 200 words using answer-first structure. Here is the page content: [paste content]

Step 2: Run the Paragraph Extraction Test

This is the most useful single diagnostic I’ve found for AEO. For each paragraph in the target section, ask one question: “Could this paragraph appear in a Google AI Overview without any surrounding context and still make complete sense?”

A paragraph fails the test if it uses pronouns or references like “it,” “they,” “this approach,” “as mentioned above,” or “the following section” without defining what those refer to. AI systems can’t resolve those references when they extract a paragraph in isolation. They’ll either skip the paragraph or produce an inaccurate answer by guessing the wrong referent.

Paragraphs that pass are self-contained, name the topic explicitly, and contain at least one verifiable detail. Use this Claude prompt to run the test across your content:

Read each paragraph below and test whether it passes the Paragraph Extraction Test: could this paragraph appear as a standalone answer in a Google AI Overview without additional context? For each paragraph that fails, rewrite it so it passes. A paragraph passes when it: (1) names the topic explicitly, (2) contains no unresolved pronouns or references to other sections, and (3) includes a verifiable claim or specific detail. Here is the content: [paste]
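Before sending a long page through that prompt, a quick local pre-filter can flag the most obvious failures. This is a rough sketch under stated assumptions: it only looks for the cross-reference phrases named in the test and for paragraphs that open with a bare pronoun. It can’t tell whether a pronoun is resolved later in the same paragraph, so treat its output as candidates for review, not verdicts. The pattern lists and function name are illustrative.

```python
import re

# Phrases the test names as failures, plus a check for paragraphs that open
# with a bare pronoun. The pattern lists are illustrative, not exhaustive.
CROSS_REFERENCES = re.compile(
    r"\b(as mentioned above|the following section|this approach)\b",
    re.IGNORECASE,
)
OPENING_PRONOUN = re.compile(r"^(it|they|this|these|those)\b", re.IGNORECASE)


def extraction_problems(paragraph: str) -> list[str]:
    """Return reasons a paragraph might fail the Paragraph Extraction Test."""
    problems = []
    text = paragraph.strip()
    match = CROSS_REFERENCES.search(text)
    if match:
        problems.append(f"cross-reference: {match.group(0)!r}")
    if OPENING_PRONOUN.match(text):
        problems.append("opens with an unresolved pronoun")
    return problems


paragraphs = [
    "As mentioned above, schema helps.",
    "They skip the paragraph entirely.",
    "FAQPage schema marks up question-and-answer pairs on a page.",
]
for paragraph in paragraphs:
    print(extraction_problems(paragraph))
```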

Step 3: Apply the Answer-First Inversion method

Most content buries the answer. Writers set up context, explain background, and work toward a conclusion. That’s how essays work. AI systems don’t read for narrative arc. They scan for the fastest path to a complete, accurate answer and pull it.

Answer-First Inversion flips the structure. Move the direct answer to the first sentence of every section. Put the topic keyword in the first 10 words. Push all supporting context after the answer. Here’s what the difference looks like in practice.

Before: “There are several factors that determine whether Google includes your content in an AI Overview. The algorithm takes into account a range of signals related to content quality and structure. Among these, structured data tends to be particularly important for many site types.”

After: “Google selects AI Overview citations based on five primary signals: structured data markup, semantic completeness, E-E-A-T authority, content freshness, and answer-first formatting. Structured data is the highest-impact fix for most pages because pages with schema markup are cited three times more often than those without it.”

The second version answers the question in the first sentence and gives a specific, actionable detail in the second. An AI system can extract either sentence and produce an accurate answer. Apply this to every H2 section in your highest-priority pages first. Use this Claude prompt:

Rewrite the following section using Answer-First Inversion: (1) move the direct answer to the first sentence, (2) include the topic keyword in the first 10 words, (3) keep all supporting detail but place it after the answer, (4) if there is a specific stat or number, lead with it. Make the rewritten section fully self-contained so it can be extracted without surrounding context. Original section: [paste]
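The keyword-placement rule in Answer-First Inversion is easy to verify mechanically after a rewrite. Here’s a minimal sketch, assuming a simple word tokenizer and an exact phrase match. Both are my choices, and the function names are hypothetical. The two test strings are the opening sentences of the before and after examples above.

```python
import re


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[\w'-]+", text.lower())


def keyword_in_first_words(section: str, keyword: str, limit: int = 10) -> bool:
    """Check whether the keyword phrase appears within the first `limit` words."""
    words = tokenize(section)[:limit]
    target = tokenize(keyword)
    n = len(target)
    if n == 0:
        return False
    # Slide a window of the keyword's length across the opening words.
    return any(words[i:i + n] == target for i in range(len(words) - n + 1))


before = (
    "There are several factors that determine whether Google includes "
    "your content in an AI Overview."
)
after = (
    "Google selects AI Overview citations based on five primary signals: "
    "structured data markup, semantic completeness, E-E-A-T authority, "
    "content freshness, and answer-first formatting."
)
print(keyword_in_first_words(before, "AI Overview"))
print(keyword_in_first_words(after, "AI Overview"))
```

The before version pushes the keyword past the tenth word, so it fails the check. The after version passes.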

Which schema types matter most for AI citation in 2026?

Schema markup gives AI systems a structured, machine-readable map of your content. Pages with proper schema are cited 3 times more often in AI Overviews, and 65 percent of pages cited in Google AI Mode already have it. Here are the four types to prioritize, ranked by implementation speed relative to impact.

FAQPage schema is the highest-volume opportunity for most content sites. Every page targeting informational queries should have at least five FAQ schema questions. These get extracted directly into AI Overviews for question-format queries and give you a secondary citation surface below the organic listing. Using a Claude skill to generate FAQ schema from existing content takes about three minutes per page and compresses what used to be a two-hour manual task.
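For reference, this is the general shape of FAQPage JSON-LD as defined on schema.org, built here with a small Python helper so you can generate it from question-and-answer pairs you already have. The helper name is hypothetical, and this is a sketch of the markup structure, not a guarantee of how any AI system consumes it.

```python
import json


def faq_page_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


faq_markup = faq_page_jsonld([
    (
        "Does ranking in the top 10 guarantee an AI Overview citation?",
        "No. AEO signals can earn citations for pages outside the top 10.",
    ),
])
print(faq_markup)
```

The output goes inside a `<script type="application/ld+json">` tag, and the questions and answers in the markup should match what is visibly on the page.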

HowTo schema applies to any post with a step-by-step process. AI systems pull HowTo schema directly into structured answer panels for procedural queries like “how to audit for AEO” or “how to set up schema markup on a WordPress site.” If your content has numbered steps, HowTo schema should already be there.
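As an illustration of the structure, here’s a sketch that builds HowTo JSON-LD from an ordered list of steps, using the schema.org `HowTo` and `HowToStep` types. The helper name is hypothetical, the step names mirror the three-step audit in this post, and the step descriptions are short summaries written for the example.

```python
import json


def howto_jsonld(name: str, steps: list[tuple[str, str]]) -> str:
    """Build HowTo JSON-LD from an ordered list of (step name, step text) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "HowTo",
        "name": name,
        "step": [
            {
                "@type": "HowToStep",
                "position": position,
                "name": step_name,
                "text": text,
            }
            for position, (step_name, text) in enumerate(steps, start=1)
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


howto_markup = howto_jsonld(
    "How to run an AEO audit",
    [
        ("Calculate your AEO Readiness Score", "Score the page 0 to 2 on each of five criteria."),
        ("Run the Paragraph Extraction Test", "Check that each paragraph stands alone."),
        ("Apply the Answer-First Inversion method", "Move the direct answer to the first sentence."),
    ],
)
print(howto_markup)
```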

Article schema with the author, datePublished, and dateModified fields filled in sends freshness and authorship signals that AI systems actively weight. Fill in the organization and author name fields completely. Leaving them empty is a missed signal that costs you nothing to fix.
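A minimal sketch of that Article markup follows, with the author, organization, and date fields populated. The helper name is hypothetical and the values in the example call are placeholders, not real bylines or dates. The date-order check is my own addition, to catch a `dateModified` that precedes `datePublished`.

```python
import json
from datetime import date


def article_jsonld(
    headline: str,
    author_name: str,
    organization: str,
    published: date,
    modified: date,
) -> str:
    """Build Article JSON-LD with authorship and freshness fields filled in."""
    if modified < published:
        raise ValueError("dateModified cannot be earlier than datePublished")
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "publisher": {"@type": "Organization", "name": organization},
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


# Placeholder values for illustration only.
article_markup = article_jsonld(
    headline="Answer engine optimization guide",
    author_name="Jane Doe",
    organization="Example Co",
    published=date(2026, 1, 15),
    modified=date(2026, 3, 2),
)
print(article_markup)
```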

SpeakableSpecification schema is underused and undervalued. It explicitly marks sections of your content as designed for voice and AI extraction. Adding it to your primary answer blocks is a direct signal to AI crawlers. Most competitors haven’t implemented it, which makes it one of the few low-competition technical wins available right now.
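On schema.org, SpeakableSpecification is attached through the `speakable` property and points at page sections with CSS selectors (or XPath). Here’s a sketch of that shape. The helper name, the URL, and the selectors are all hypothetical, so swap in the selectors that wrap your own primary answer blocks.

```python
import json


def speakable_jsonld(page_name: str, page_url: str, css_selectors: list[str]) -> str:
    """Build WebPage JSON-LD that marks the selected sections as speakable."""
    if not css_selectors:
        raise ValueError("provide at least one CSS selector")
    data = {
        "@context": "https://schema.org",
        "@type": "WebPage",
        "name": page_name,
        "url": page_url,
        "speakable": {
            "@type": "SpeakableSpecification",
            "cssSelector": css_selectors,
        },
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


# Hypothetical URL and selectors for illustration.
speakable_markup = speakable_jsonld(
    "Answer engine optimization guide",
    "https://example.com/aeo-guide",
    [".answer-summary", "#faq"],
)
print(speakable_markup)
```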

You can generate complete schema JSON-LD for all four types with Claude. Paste your page content, prompt Claude to output the full schema block with all required properties filled in, drop the output into your page’s head, and validate it with Google’s Rich Results Test. The full Claude SEO tool stack breakdown covers schema generation in more detail if you want to build this into a repeatable workflow.

How do you measure AEO performance over time?

AEO tracking is still underdeveloped compared to traditional SEO, but three metrics give you a reliable signal that you can act on.

The most direct measure is citation frequency: how often does your content appear in AI Overview results for your target queries? You can check this manually by sampling 20 to 30 target queries each week, but that doesn’t scale. A better approach is a Claude-based monitoring script that pulls your target queries, checks for AI Overview presence, and logs citation appearances over time. I’ll cover that setup in full in an upcoming post on AI share-of-voice tracking.
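Until then, even a manual weekly sample can be turned into a trend line. The sketch below assumes you log each checked query as a record with `week`, `overview_present`, and `cited` fields. That record shape is my assumption, and so is the definition of the rate as citations divided by the number of sampled queries where an AI Overview appeared.

```python
from collections import defaultdict


def weekly_citation_rate(samples: list[dict]) -> dict[str, float]:
    """Per week, the share of sampled AI Overviews that cite your page."""
    shown: dict[str, int] = defaultdict(int)
    cited: dict[str, int] = defaultdict(int)
    for sample in samples:
        # Queries with no AI Overview are excluded from the denominator.
        if sample["overview_present"]:
            shown[sample["week"]] += 1
            cited[sample["week"]] += int(sample["cited"])
    return {week: cited[week] / shown[week] for week in sorted(shown)}


# Hypothetical log entries for illustration.
log = [
    {"week": "2026-W10", "overview_present": True, "cited": True},
    {"week": "2026-W10", "overview_present": True, "cited": False},
    {"week": "2026-W10", "overview_present": False, "cited": False},
    {"week": "2026-W11", "overview_present": True, "cited": True},
]
rates = weekly_citation_rate(log)
print(rates)
```

Weeks where no sampled query showed an AI Overview are left out of the result, which avoids dividing by zero.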

The second metric is AI referral traffic in GA4. AI-referred sessions jumped 527 percent year-over-year through mid-2025. In GA4, look at your referral traffic segment and filter for domains including perplexity.ai, chatgpt.com, and claude.ai. These sessions are already in your data. Most analytics setups just haven’t segmented them out.
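If you export referral data and want to segment it outside the GA4 interface, the same filter can be sketched in a few lines. The domain list is the three named above. The function name is hypothetical, and the code assumes referrers are full URLs with a scheme, so a bare domain string won’t match.

```python
from urllib.parse import urlparse

# The three referrer domains named above; extend the tuple as needed.
AI_REFERRER_DOMAINS = ("perplexity.ai", "chatgpt.com", "claude.ai")


def is_ai_referral(referrer_url: str) -> bool:
    """Return True if the referrer's host is an AI domain or one of its subdomains."""
    host = (urlparse(referrer_url).hostname or "").lower()
    return any(
        host == domain or host.endswith("." + domain)
        for domain in AI_REFERRER_DOMAINS
    )


referrers = [
    "https://www.perplexity.ai/search?q=aeo",
    "https://chatgpt.com/",
    "https://www.google.com/",
]
ai_sessions = [r for r in referrers if is_ai_referral(r)]
print(ai_sessions)
```

Matching on the exact host or a dot-prefixed suffix avoids counting lookalike domains that merely end in the same characters.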

Third, watch your featured snippet rate in Google Search Console. Pages earning featured snippets draw from the same content signals that earn AI Overview citations. A rising featured snippet rate is a leading indicator of AEO improvement, especially for question-format queries.

The benchmark to track against: AI Overview content changes approximately 70 percent of the time for any given query. That rotation means consistent citation is hard to lock in. Pages that maintain fresh content, updated schema, and answer-first structure tend to stay in the citation pool longer than pages that treat AEO as a one-time fix. Build a quarterly content refresh into your workflow and your citation rate will compound over time.

Where should you start if you have a large site to audit?

If you’re managing 100 or more pages, you can’t AEO-optimize everything at once. Prioritize in this order and you’ll see the fastest ROI.

Start by pulling your top-traffic informational pages from Google Search Console. Filter for pages where AI Overviews already appear when you search the target query. These pages are losing the most CTR right now, and an AEO fix on them has the highest impact on traffic.

Next, run the AEO Readiness Score on each of those pages using the Claude prompt above. Sort them by score. Pages scoring 5 to 7 are your priority: they’re citation-eligible but not optimized, meaning a targeted fix can move them into the citation pool without a full rewrite.
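Once the scores are in, the triage itself is a filter and a sort. Here’s a minimal sketch using the 5-to-7 band from the score interpretation. Ordering the queue with the highest scores first, on the theory that those pages are closest to the 8-point threshold, is my assumption, and the page paths are hypothetical.

```python
def priority_queue(page_scores: dict[str, int]) -> list[tuple[str, int]]:
    """Return pages scoring 5 to 7, highest score first, ties broken by URL."""
    eligible = [
        (url, score)
        for url, score in page_scores.items()
        if 5 <= score <= 7
    ]
    return sorted(eligible, key=lambda item: (-item[1], item[0]))


# Hypothetical pages and scores for illustration.
scores = {
    "/guide-a": 9,
    "/guide-b": 6,
    "/guide-c": 3,
    "/guide-d": 7,
    "/guide-e": 5,
}
queue = priority_queue(scores)
print(queue)
```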

Add FAQ schema to every page in your audit before you tackle content changes. It’s the highest-impact, lowest-effort fix on the list. It creates a structured signal even on pages where the prose content isn’t fully AEO-ready yet, and it takes minutes per page when you’re using Claude to generate the JSON-LD.

If you want to see what a full site-level audit looks like running inside Claude with live Search Console data, the Claude for SEO complete guide walks through the broader setup, including how to connect your tools and run batch audits across a full domain in a single session. The LLM visibility guide is also worth reading alongside this one if you’re building a broader AI citation strategy.

Frequently asked questions about answer engine optimization

What is answer engine optimization (AEO)?

Answer engine optimization is the practice of structuring content so that AI systems like Google AI Overviews, ChatGPT Search, and Perplexity can extract, cite, and surface it directly inside generated answers. AEO focuses on content extractability and citation signals rather than click-through from a ranked list of results.

How is AEO different from traditional SEO?

Traditional SEO earns a page a position in a ranked list and depends on users clicking through to visit the site. AEO earns the content itself a citation inside the AI-generated answer. Both are necessary in 2026: organic ranking is still a prerequisite for most AI Overview citations, but ranking without AEO signals means losing visibility whenever an AI Overview appears above the listing.

Does ranking in the top 10 guarantee an AI Overview citation?

No. Google AI Overview citations from top-10 organic results dropped from 76 percent to 38 percent between 2024 and 2026. Over half of citations now come from pages ranking outside the top 10. AEO signals, including structured data, answer-first formatting, and information gain, can earn citations for pages that don’t hold strong organic positions.

What is the AEO Readiness Score and how do you calculate it?

The AEO Readiness Score is a 10-point framework for measuring how well a page is structured for AI citation. It scores five criteria 0 to 2 points each: self-contained answer in the opening 200 words, question-format headings, extractable paragraph structure, schema markup coverage, and information gain. Pages scoring 8 to 10 are AEO-ready. Pages scoring 5 to 7 need targeted fixes. Pages scoring below 5 need a rebuild before AEO optimization makes sense.

Which schema types matter most for AEO in 2026?

The four schema types with the strongest relationship to AI Overview citation are FAQPage, HowTo, Article, and SpeakableSpecification. Pages with proper schema markup are cited three times more often in AI Overviews than pages without it. FAQPage schema is the fastest to implement and has the broadest impact across informational content. SpeakableSpecification is underused by most sites and represents one of the few remaining low-competition technical wins.

How do AEO citation signals differ between Google, ChatGPT, and Perplexity?

Google AI Overviews prioritize structured data, E-E-A-T signals, and answer-first formatting, with a moderate freshness preference of 90 days. ChatGPT Search favors editorial authority and has a sharper freshness preference: 50 percent of its citations come from content less than 13 weeks old. Perplexity cites Reddit at a 46.7 percent rate and strongly prefers content published within 90 days, making freshness and publishing velocity more important than domain authority for that platform.

How long does it take to see results from AEO optimization?

AI Overview content changes approximately 70 percent of the time for any given query, so citation status can shift within two to three weeks of implementing fixes. Most pages I’ve worked on see changes in citation frequency within that timeframe after adding FAQ schema and rewriting opening sections with answer-first structure. Full compounding of topical authority signals takes three to six months for most sites.