AI search visibility is the rate at which your brand, content, or products appear in AI-generated responses from ChatGPT, Perplexity, Google AI Overviews, Claude, and Gemini. Unlike a search ranking, it is not a fixed position — it is a citation rate across a set of prompts relevant to your category. The two strongest predictors of AI citation are organic search rankings (88% of URLs cited by ChatGPT come directly from Bing search results, and 67% of Google page-one brands appear in ChatGPT) and off-site brand authority (sites with 32,000 or more referring domains are 3.5 times more cited by ChatGPT than sites with fewer than 200). If you want a free starting point before buying any tool: Claude can audit your AI visibility in about 20 minutes using the prompt workflow in this post.

I have been tracking AI visibility across client sites since early 2026, when AI-referred sessions started showing up meaningfully in GA4 for the first time. The pattern is consistent: brands with strong organic rankings and active off-site presence in Reddit threads, YouTube videos, and review platforms get cited regularly. Brands with the same site quality but no off-site presence almost never appear in AI answers. This post covers what the research shows, how each AI platform works differently, and how to run a free visibility audit using Claude before deciding whether a paid tool makes sense. If you are newer to this area, my generative engine optimization guide covers the foundational layer first.

What Is AI Search Visibility and Why Does It Differ From a Search Ranking?

A search ranking is binary at the position level: your page either ranks #1 or it does not. AI search visibility is probabilistic. The same prompt to ChatGPT can return your brand on Tuesday and a competitor on Thursday. LLMs are non-deterministic — they generate responses by sampling from a probability distribution, not retrieving from a fixed index. This means “do I appear in AI search?” is the wrong question. The right question is: “What percentage of relevant prompts about my category include my brand?”

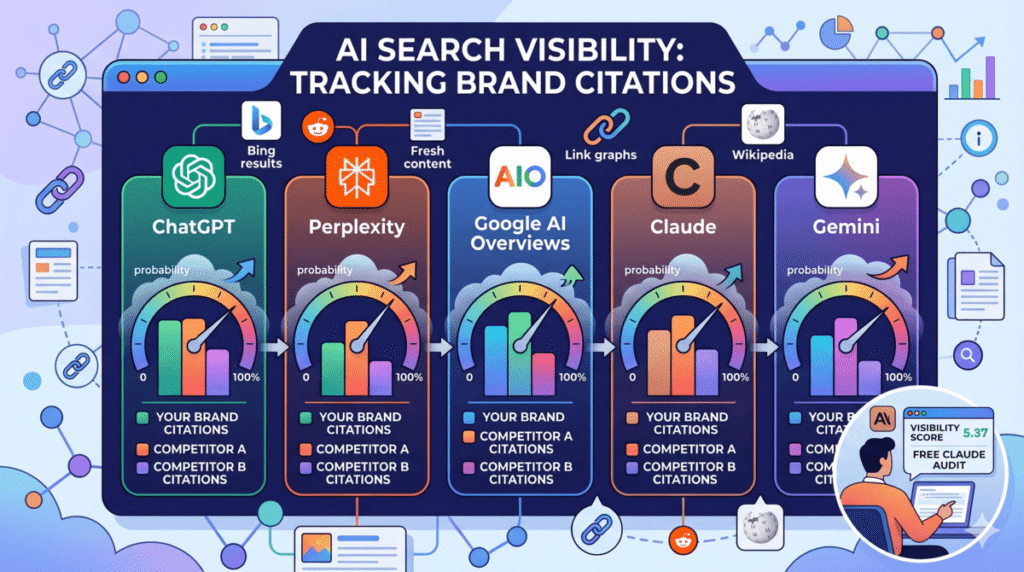

This distinction matters for how you measure and report. Traditional SEO gives you a ranking position. AI visibility gives you a share-of-voice metric — how often you appear across a sample of prompts compared to competitors. Tools like Semrush’s AI Visibility Toolkit, Profound, and SE Ranking’s AI tracker all approach this the same way: they run hundreds of representative prompts on a recurring schedule and calculate your citation frequency and share of voice against a defined competitor set.
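To make the metric concrete, here is a minimal sketch of how citation rate and share of voice could be computed from a log of prompt runs. The log structure, the field name `brands_mentioned`, and the brand names are illustrative assumptions on my part, not the format any of the tools above actually use.

```python
from collections import Counter

def citation_rate(runs, brand):
    """Fraction of prompt runs whose response mentions `brand`."""
    if not runs:
        return 0.0
    hits = sum(1 for run in runs if brand in run["brands_mentioned"])
    return hits / len(runs)

def share_of_voice(runs, brands):
    """Each tracked brand's share of all tracked-brand mentions across the runs."""
    counts = Counter()
    for run in runs:
        for name in run["brands_mentioned"]:
            if name in brands:
                counts[name] += 1
    total = sum(counts.values())
    return {name: (counts[name] / total if total else 0.0) for name in brands}

# Hypothetical log: one entry per prompt run on one platform.
runs = [
    {"prompt": "best crm for startups", "platform": "chatgpt", "brands_mentioned": {"Acme", "Rival"}},
    {"prompt": "best crm for startups", "platform": "perplexity", "brands_mentioned": {"Rival"}},
    {"prompt": "crm with email sync", "platform": "chatgpt", "brands_mentioned": {"Acme"}},
    {"prompt": "crm with email sync", "platform": "claude", "brands_mentioned": set()},
]

print(citation_rate(runs, "Acme"))              # 0.5
print(share_of_voice(runs, ["Acme", "Rival"]))  # {'Acme': 0.5, 'Rival': 0.5}
```

Citation rate answers "how often do I appear?", while share of voice answers "how do I compare to the competitor set?". Because responses vary from run to run, both numbers only become meaningful over repeated runs of the same prompt set.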

The other structural difference is that AI search visibility operates across five distinct platforms with different source preferences. ChatGPT, Perplexity, Claude, Google AI Overviews, and Gemini do not all cite from the same pool of content. Getting strategy right requires understanding which signals each platform weights, which I cover below.

What Does the Research Actually Show About LLM Citation Patterns?

The research picture is clearer now than it was a year ago, because Ahrefs and SE Ranking have both published large-scale citation studies. Here is what the data actually says.

- Organic ranking is the baseline, not the goal. Ahrefs analyzed 16 million URLs cited by six major AI assistants and found that 88% of URLs cited by ChatGPT come directly from Bing search results. This single finding reframes the entire AI visibility conversation. If your page does not rank on Bing page one, ChatGPT almost never cites it. This means Google and Bing SEO is not a separate track from AI visibility strategy — it is the prerequisite.

- Referring domains are a harder threshold than most people expect. Sites with more than 32,000 referring domains are 3.5 times more cited by ChatGPT than sites with fewer than 200 referring domains. This does not mean small sites cannot appear in AI answers. It means the citation probability scales sharply with off-site authority at the domain level, not just the page level. Link acquisition is directly connected to AI citation potential.

- Content position inside the page matters. 44.2% of LLM citations reference content from the first 30% of an article. Only 24.7% cite from the final third. This has a specific implication: the best answer on your page needs to appear early, not after a 600-word introduction about market trends. The bottom-line-up-front structure that AEO practitioners already use is also the right structure for LLM visibility.

- AI search visitors convert at dramatically higher rates. Ahrefs documented that AI search visitors convert at a 23x higher rate than organic search visitors on their own site. This is the commercial argument for AI visibility investment that most discussions skip. The volume is lower than organic, but the intent is compressed and the conversion rate reflects it. Even modest improvements in AI citation rates can produce meaningful revenue impact.

- Content freshness has a hard drop-off. LLMrefs analyzed 17 million citations and found that citation rates decline sharply after 90 days for most categories. Perplexity, which uses real-time web search, is especially sensitive to content age. A quarterly content refresh schedule is not arbitrary — it maps directly to the freshness window AI platforms favor.
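The 90-day freshness window is easy to operationalize. The sketch below flags pages whose last update falls outside that window; the page list, URLs, and the `last_updated` field are hypothetical and would come from your own CMS or sitemap export.

```python
from datetime import date

FRESHNESS_WINDOW_DAYS = 90  # window taken from the freshness finding above

def stale_pages(pages, today, window_days=FRESHNESS_WINDOW_DAYS):
    """Return (url, age_in_days) for pages updated more than `window_days` ago, oldest first."""
    stale = []
    for page in pages:
        age = (today - page["last_updated"]).days
        if age > window_days:
            stale.append((page["url"], age))
    return sorted(stale, key=lambda item: item[1], reverse=True)

# Hypothetical inventory of pages and their last-updated dates.
pages = [
    {"url": "/guide-a", "last_updated": date(2025, 1, 10)},
    {"url": "/guide-b", "last_updated": date(2025, 5, 1)},
    {"url": "/guide-c", "last_updated": date(2025, 3, 1)},
]

print(stale_pages(pages, today=date(2025, 6, 15)))
# [('/guide-a', 156), ('/guide-c', 106)]
```

The output is a refresh queue ordered by age, which is one reasonable way to turn a quarterly refresh schedule into a concrete task list.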

How Does AI Visibility Work Differently Across ChatGPT, Perplexity, Claude, and Google AI Overviews?

The biggest strategic mistake I see is treating all AI platforms as interchangeable. They use completely different source selection mechanisms. Optimizing as if they all work the same way produces generic content that performs inconsistently across all of them.

- ChatGPT relies heavily on Bing’s index for real-time responses (88% of citations from Bing search results per Ahrefs). For topics where ChatGPT uses its training data rather than live search, the data suggests it heavily weights Wikipedia, editorial publications with large referring domain counts, and research content. If you want consistent ChatGPT citations, Bing ranking and domain authority are your primary levers.

- Perplexity is a real-time web search engine at its core. It favors content published within the last 90 days, cites Reddit threads for community-driven topics (46.7% of citations in social/review categories), and surfaces sources quickly after publication. Perplexity is the most SEO-adjacent platform: strong content on a well-indexed domain that answers questions directly tends to appear quickly. Update velocity matters here more than anywhere else.

- Claude (in its base form without web search enabled) draws from its training data, which has a knowledge cutoff. When web search is active, Claude retrieves and synthesizes from live sources. Claude’s training data composition skews toward Wikipedia, academic sources, documentation, and long-form editorial content. Brands that appear on Wikipedia, in industry publications, and on platforms Claude’s crawlers indexed heavily benefit disproportionately. My earlier post on how to get mentioned in Claude covers the entity-building angle in depth.

- Google AI Overviews draws exclusively from Google’s search index. The citation patterns mirror organic rankings closely: 76% of AIO citations come from pages in the organic top 10. Fan-out query coverage and answer extractability drive citations beyond the ranking baseline. This is the platform where traditional SEO technical quality and on-page optimization matter most directly. My complete Google AI Overviews workflow covers this platform specifically.

- Gemini integrates Google Search directly and behaves similarly to AI Overviews for most search-intent queries. It prioritizes authoritative sources on Google’s index and shows stronger citation overlap with Google rankings than any other platform.

The practical implication of these differences: Bing and Google SEO lift your ChatGPT, Google AI Overviews, and Gemini citations. Reddit and fresh content lift Perplexity citations. Wikipedia presence and editorial citations lift Claude citations. These are different work streams, not one universal optimization.

Step 1: Run a Free AI Visibility Audit with Claude

Before spending $99 to $499 per month on a dedicated AI visibility tool, run this free audit. It takes 20 minutes and gives you a clear picture of your current citation status across the major platforms.

Open Claude and use this prompt. Run it once for each of the five platforms (ChatGPT, Perplexity, Google AI Overviews, Claude, Gemini):

I want to audit my brand's AI search visibility. My brand is [BRAND NAME]. My category is [describe what you do in 1-2 sentences]. My target audience searches for things like [3-5 example queries they would use].

Please act as if you are [platform name] responding to each of these queries, and tell me:

1. Would you mention [BRAND NAME] in your response?

2. If yes, how would you describe them and in what context?

3. If no, which brands or sources would you cite instead?

Run this for each query:

- [query 1 — category-level]

- [query 2 — comparison/best-of]

- [query 3 — problem-solution]

- [query 4 — how-to/tutorial]

- [query 5 — specific feature/use case]

After answering each query, give me an overall AI visibility score from 0-10 and list the three biggest gaps preventing more consistent citations.

Claude cannot perfectly simulate what ChatGPT or Perplexity would say, but the exercise is genuinely useful for two reasons. First, it surfaces which query types you are strong or weak on. Second, it forces you to articulate your brand’s positioning in a way that maps to how AI systems describe categories. Gaps in Claude’s answers almost always reflect gaps in how clearly your brand is positioned in the content you have published.

Run the full five-platform audit, then compare results. Consistent gaps across all platforms indicate a foundational content or off-page authority problem. Gaps on specific platforms indicate platform-specific fixes. A brand that appears clearly in Claude’s training-data responses but never in Perplexity likely needs fresher content and more Reddit presence. A brand invisible in ChatGPT but visible in Perplexity probably has good content but weak referring domain authority.
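The comparison step can be sketched in a few lines. This example assumes you have recorded the audit as a simple platform-to-query map of true/false results; that structure and the query labels are my own illustration, not something Claude returns in this form.

```python
def classify_gaps(audit):
    """Split queries into foundational gaps (absent on every platform)
    and platform-specific gaps (absent on some platforms only)."""
    platforms = list(audit)
    queries = sorted({q for results in audit.values() for q in results})
    foundational = []
    platform_specific = {}
    for query in queries:
        missing = [p for p in platforms if not audit[p].get(query, False)]
        if len(missing) == len(platforms):
            foundational.append(query)
        elif missing:
            platform_specific[query] = missing
    return foundational, platform_specific

# Hypothetical audit results: True means the brand was mentioned.
audit = {
    "chatgpt":    {"category": True, "comparison": False, "how-to": False},
    "perplexity": {"category": True, "comparison": True,  "how-to": False},
    "claude":     {"category": True, "comparison": False, "how-to": False},
}

foundational, platform_specific = classify_gaps(audit)
print(foundational)       # ['how-to']
print(platform_specific)  # {'comparison': ['chatgpt', 'claude']}
```

Queries in the first list point to a content or authority problem; queries in the second point to fixes for the named platforms only.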

Step 2: Identify Which Pages Are Being Cited and Which Are Not

Once you have the brand-level audit, narrow down to the page level. This is where you find the specific content that is driving citations and the specific pages that should be cited but are not.

Use this second Claude prompt with your target pages loaded:

I'm going to give you a list of pages from my site. For each page:

1. Tell me whether an AI search engine would likely cite this page for its target topic.

2. Explain why or why not — specifically: is the best answer in the first third of the content? Does it cover the sub-questions someone would have on this topic? Are there clear E-E-A-T signals?

3. If a page is unlikely to be cited, give me the single highest-impact change that would improve its citation probability.

Pages:

[Paste the URL + a 3-sentence description of what the page covers for each]

The patterns that come back are predictable once you run this on 10 to 20 pages. Pages performing well in AI citations tend to have: their main answer in the first two paragraphs, numbered steps or definition sections that are easy to extract, and at least one specific data point or firsthand experience that distinguishes them from generic content covering the same topic.

Pages that should be cited but are not tend to have: the best content buried after a long introduction, topic coverage that is wide but shallow (covering 20 sub-questions at two sentences each rather than eight sub-questions with real depth), or no clear signal of who wrote the content and why they are credible on the topic.
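A buried answer is the easiest of these problems to check mechanically. The sketch below measures how far into a page a key phrase first appears, by word count, and compares it to a 30% cutoff. The function names and the cutoff default are my own assumptions, and the matching is a naive exact word match that does not strip punctuation, so treat it as a rough screen rather than a measurement.

```python
def answer_position(text, answer_phrase):
    """Return how far into `text` (0.0 to 1.0, by word count) the phrase
    first appears, or None if it never appears."""
    words = text.lower().split()
    target = answer_phrase.lower().split()
    if not words or not target:
        return None
    for i in range(len(words) - len(target) + 1):
        if words[i:i + len(target)] == target:
            return i / len(words)
    return None

def is_front_loaded(text, answer_phrase, cutoff=0.30):
    """True when the phrase first appears within the first `cutoff` share of the text."""
    position = answer_position(text, answer_phrase)
    return position is not None and position <= cutoff

# Two hypothetical 100-word pages with the same key phrase in different places.
early = " ".join(["intro"] * 10 + ["citation", "rate"] + ["body"] * 88)
buried = " ".join(["intro"] * 60 + ["citation", "rate"] + ["body"] * 38)

print(answer_position(early, "citation rate"))   # 0.1
print(is_front_loaded(early, "citation rate"))   # True
print(is_front_loaded(buried, "citation rate"))  # False
```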

Step 3: Build the Content and Off-Page Signals That Drive AI Citations

The research points to three categories of work that consistently move AI citation rates. None of them require building a new content strategy from scratch — they are adjustments to what you are likely already doing.

- Content positioning: front-load the answer. The 44% first-third citation rate is not a coincidence. AI systems extracting content for synthesis pull from the densest information near the top of the document. Restructure any page that performs below its potential by moving the direct answer, the definition, or the core framework to the first two paragraphs. If you have a 3,000-word post where the summary is at the bottom, flip it. The Dan Petrovic grounding study (7,000+ queries) found that the 540-word mark is where AI grounding plateaus — content past that threshold does not meaningfully increase citation rates for most topics.

- Sub-query coverage: answer the follow-up questions. 67% of Google page-one brands appear in ChatGPT, compared with 77% in Perplexity. That 10-point gap is explained partly by content coverage depth. Use Claude to generate the full set of sub-questions someone researching your topic would ask, then audit which of those your page actually answers. This is the same fan-out query methodology I detailed in the answer engine optimization post. The gap between “this page ranks well” and “this page gets cited in AI answers” is almost always incomplete sub-query coverage.

- Off-page presence: appear where AI systems look. Building off-site citations is different for AI visibility than for traditional SEO. The platforms that AI systems reference most heavily include: Reddit threads with high vote counts in your category, YouTube videos that mention your brand or methodology by name, third-party roundup articles on high-authority publications (“best [category] tools”), Wikipedia entries for your brand or category, and review platform profiles on G2 or Capterra for software. A brand mentioned 30 or more times across these platforms starts to register as an entity in LLM training data — a threshold researchers at Profound call the entity recognition floor. Getting to 30 cross-platform mentions is a concrete milestone to track.
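If you want to track the 30-mention milestone, a running tally is enough. This is a minimal sketch; the source categories and counts are made up for illustration, and how you count a "mention" is up to you.

```python
ENTITY_MENTION_TARGET = 30  # the cross-platform mention milestone described above

def mention_progress(mentions_by_source, target=ENTITY_MENTION_TARGET):
    """Summarize progress toward the mention target across off-site sources."""
    total = sum(mentions_by_source.values())
    return {
        "total": total,
        "remaining": max(target - total, 0),
        "reached": total >= target,
    }

# Hypothetical counts gathered by hand from each off-site source type.
mentions = {"reddit": 9, "youtube": 4, "roundups": 6, "review_sites": 3}

print(mention_progress(mentions))
# {'total': 22, 'remaining': 8, 'reached': False}
```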

How to Track AI Search Visibility Without Spending $400 a Month

Dedicated AI visibility tools are genuinely useful at scale, but most of them are priced for enterprise budgets. Here is the free and low-cost stack that gives you 80% of the signal.

- Manual prompt testing (free). Set up a recurring 30-minute session once a week. Run the same 10 to 15 prompts across ChatGPT, Perplexity, and Claude in incognito mode, logged out where the platform allows it, so personalization does not skew the responses. Log whether your brand appears, what context it appears in, and which competitors appear when you do not. A spreadsheet with prompt, date, platform, result, and competitor columns is enough. Over 60 days you will see patterns clearly.

- Google Search Console for AI Overview signal (free). In the Google Search Console page report, pages where impressions are rising but CTR is dropping or flat are likely appearing in AI Overviews. Google’s AI generates a summary that satisfies the query before the user clicks, so impressions register but clicks do not. Sort the report by impressions descending and look for pages with impression growth and CTR decline over the last 90 days. That pattern is your proxy for AI Overview visibility.

- Bing Webmaster Tools (free). Bing recently added an AI Performance report that is currently in beta (pictured above). It provides insight into the number of citations and grounding queries. It does not provide traffic or click data from Copilot/Bing AI. Bing still gets bonus points here for being the only large AI/LLM platform providing performance data to users.

- GA4 referral traffic segmentation (free). AI platforms send referral traffic with identifiable patterns. In GA4, create a segment for sessions from these sources: chatgpt.com (and the older chat.openai.com), perplexity.ai, claude.ai, gemini.google.com, copilot.microsoft.com. This tells you which pages are generating actual clicks from AI platforms, not just citations that satisfy queries without clicks. The conversion rate on these sessions is worth tracking separately: the 23x rate Ahrefs documented on their own site should motivate treating AI referral traffic as a priority segment.

- SE Ranking AI tracker ($29-$49/month entry tier). If you want systematic tracking beyond manual testing, SE Ranking’s AI Search Toolkit is the most affordable dedicated tool with ChatGPT, Google AI Overviews, and Perplexity coverage. It tracks brand mentions and citations across platforms, shows historical trends, and flags competitive shifts. The entry tier is practical for individual consultants and small agencies.

- Semrush AI Visibility Toolkit ($99+/month). For agency-scale reporting, Semrush covers the most platforms (ChatGPT, Google AI, Gemini, Claude, Grok, Perplexity, DeepSeek) and provides share-of-voice metrics against a defined competitor set. The 130-million-prompt database makes the share-of-voice numbers statistically robust. This is the tool to use if you are reporting AI visibility to clients as a service line.
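The Search Console pattern from the list above (impressions up, CTR flat or down) can be screened for once you have two periods of page-level data. This sketch assumes you have already merged the two exports into rows with the field names shown; those names and the 10% growth threshold are my assumptions, not GSC column names.

```python
def likely_ai_overview_pages(rows, min_impression_growth=0.10):
    """Flag pages whose impressions grew while CTR stayed flat or fell
    between a previous and a current period."""
    flagged = []
    for row in rows:
        if row["impressions_prev"] == 0 or row["impressions_curr"] == 0:
            continue  # CTR is undefined without impressions
        ctr_prev = row["clicks_prev"] / row["impressions_prev"]
        ctr_curr = row["clicks_curr"] / row["impressions_curr"]
        growth = row["impressions_curr"] / row["impressions_prev"] - 1
        if growth >= min_impression_growth and ctr_curr <= ctr_prev:
            flagged.append({
                "page": row["page"],
                "impression_growth": round(growth, 3),
                "ctr_prev": round(ctr_prev, 4),
                "ctr_curr": round(ctr_curr, 4),
            })
    return sorted(flagged, key=lambda r: r["impression_growth"], reverse=True)

# Hypothetical page-level data for two consecutive 90-day periods.
rows = [
    {"page": "/a", "impressions_prev": 1000, "impressions_curr": 1500, "clicks_prev": 50, "clicks_curr": 45},
    {"page": "/b", "impressions_prev": 1000, "impressions_curr": 1100, "clicks_prev": 40, "clicks_curr": 60},
    {"page": "/c", "impressions_prev": 2000, "impressions_curr": 1800, "clicks_prev": 80, "clicks_curr": 60},
]

for hit in likely_ai_overview_pages(rows):
    print(hit["page"], hit["impression_growth"], hit["ctr_prev"], hit["ctr_curr"])
# /a 0.5 0.05 0.03
```

This is a proxy, not proof: ranking changes, SERP features, and seasonality can produce the same pattern, so spot-check flagged pages by searching their top queries manually.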

How to Report AI Visibility to Clients and Leadership

The reporting question is where most practitioners get stuck. Client dashboards built around rankings and organic traffic do not have a natural slot for “AI citation rate.” Here is the framing that works.

The metric to lead with is AI share of voice: for a defined set of 20 to 30 prompts relevant to the client’s category, what percentage include the client’s brand? Report this number weekly or monthly, track it over time, and set a benchmark against two to three named competitors. This makes AI visibility concrete and comparable rather than abstract.

Supporting metrics include: AI referral sessions in GA4 (volume and conversion rate), GSC impressions-to-CTR ratio for target pages (the declining CTR pattern), and specific prompts where the brand appears or is absent. The absent prompts are the most useful reporting element — they directly translate to content gaps and off-page opportunities that have a clear action attached.
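For the AI referral metric, here is a rough sketch of grouping sessions by AI platform and computing a conversion rate for each. It assumes session rows exported from GA4 with a `source` hostname and a `converted` flag; both field names are hypothetical. The hostnames are the referral sources named earlier in this post, with chatgpt.com included because ChatGPT now serves from that domain.

```python
# Referrer hostnames mapped to a platform label.
AI_REFERRERS = {
    "chat.openai.com": "ChatGPT",
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}

def ai_platform(source):
    """Return the AI platform label for a referrer hostname, or None."""
    host = source.lower().strip()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRERS.get(host)

def ai_conversion_rates(sessions):
    """Conversion rate per AI platform, ignoring non-AI sessions."""
    totals = {}
    for session in sessions:
        platform = ai_platform(session["source"])
        if platform is None:
            continue
        stats = totals.setdefault(platform, {"sessions": 0, "conversions": 0})
        stats["sessions"] += 1
        if session["converted"]:
            stats["conversions"] += 1
    return {p: s["conversions"] / s["sessions"] for p, s in totals.items()}

# Hypothetical session export.
sessions = [
    {"source": "chatgpt.com", "converted": True},
    {"source": "chat.openai.com", "converted": False},
    {"source": "perplexity.ai", "converted": True},
    {"source": "www.perplexity.ai", "converted": False},
    {"source": "google", "converted": False},
]

print(ai_conversion_rates(sessions))
# {'ChatGPT': 0.5, 'Perplexity': 0.5}
```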

The conversation to have with clients and leadership is about conversion quality, not volume. If AI search visitors convert at 23x the rate of organic visitors, a small increase in AI citation rate has an outsized revenue impact. Frame AI visibility work in terms of the prompt set you are targeting, the citation rate today versus three months ago, and the specific content investments that drove the change. That structure maps directly to how other channel-level reporting works and avoids the abstraction that makes AI visibility feel unmeasurable.

Frequently Asked Questions About AI Search Visibility

Is AI search visibility the same as generative engine optimization (GEO)?

They describe different aspects of the same practice. GEO is the process of optimizing content so AI systems cite it — the work you do. AI search visibility is the measurement of how well that work is performing — the outcome you track. GEO is the strategy; AI search visibility is the KPI. Most practitioners use GEO to describe what they do and AI search visibility to describe the metric they report.

How often do LLMs like ChatGPT update their training data?

This depends on the platform and the query type. When ChatGPT uses its live web search feature (which it does for most informational queries), it pulls current results from Bing — those results reflect content published days or weeks ago. ChatGPT’s underlying model weights update on a training cycle measured in months, not weeks. Perplexity is closer to real-time: it indexes and retrieves fresh content within days of publication. Google AI Overviews and Gemini reflect Google’s index, which crawls fresh content within hours to days for most domains. Claude with web search enabled retrieves current sources; without web search, it relies on training data with a knowledge cutoff. The practical implication: content freshness affects Perplexity and Google AI platforms more immediately than ChatGPT’s model-level responses.

Does a high domain rating guarantee AI visibility?

No, but low domain authority makes consistent AI citations very difficult. The 32K referring domain threshold for ChatGPT is a population-level correlation, not a guarantee. High-DR domains appear in AI answers consistently because they tend to rank well on Google and Bing, publish content with strong topical depth, and appear frequently in the editorial sources AI training data favors. Low-DR domains can appear in AI answers on specific topics where their coverage is substantially deeper than larger competitors — but their base citation rate is lower. Authority building is necessary but not sufficient: a high-DR domain with shallow content on a topic still gets cited less than a mid-DR domain with genuinely thorough coverage.

Can AI-generated content rank in AI search results?

Yes, and there is direct evidence for it. SE Ranking documented their own AI-assisted articles appearing as cited sources in AI Overviews. The relevant factors are whether the content covers the topic’s sub-questions thoroughly, whether it demonstrates genuine expertise (firsthand data, specific examples, named authors), and whether it passes the basic quality signals the platform requires. Generic AI content that restates what already exists at the top of search results performs poorly. AI-assisted content that adds original data, specific workflows, or firsthand context performs well. The origin of the content matters less than its differentiation from what already exists.

How long does it take to improve AI search visibility?

Perplexity shows the fastest response to content changes, sometimes within days of publishing or updating a page. Google AI Overviews typically take two to four weeks to reflect content changes for pages that already rank well organically. ChatGPT’s citation patterns for live-search queries follow Bing’s index, so ranking changes propagate within two to four weeks as well. ChatGPT’s training data updates take months. Claude’s training data updates are on a similar multi-month cycle. The realistic expectation for a content and off-page campaign targeting AI visibility: measurable changes in Perplexity and Google AI Overviews within 30 to 60 days, with ChatGPT and Claude training data updates showing impact over a three to six month horizon. Search Engine Land documents that comprehensive AI visibility campaigns take six to twelve months to build consistent share of voice across all platforms.

What is the difference between a brand mention and a citation in AI search?

A citation is when an AI system links to or explicitly attributes a claim to a specific URL or source. A mention is when the AI references your brand name in its response without a direct link or attribution. Both matter, but they signal different things. Citations drive direct referral traffic and indicate that the AI system is using your content as a primary source. Mentions build brand association in the AI’s response pattern: they contribute to the 30-touchpoint entity recognition threshold and influence how the AI describes your brand in future responses. Citations are what you measure in GA4 referral traffic. Mentions are what tools like Ahrefs Brand Radar and Peec AI track as the broader presence metric. A brand with high mentions but low citations has good name recognition in AI responses but is not providing the authoritative content that earns source attribution.