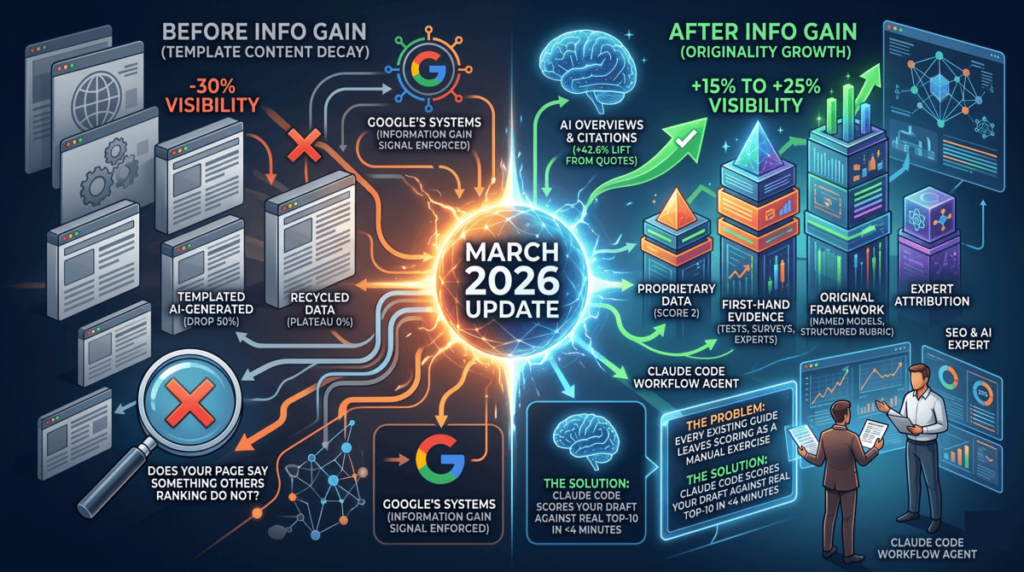

Information gain is Google’s measure of how much new, original knowledge a piece of content adds to the existing web. Not freshness. Not length. Not keyword density. The question Google’s systems ask is whether your page says something that the other pages ranking for this query do not. The March 2026 core update confirmed this signal is now actively enforced. Early community analysis following the update found that sites with original research and first-hand evidence gained 15 to 25% visibility, while templated content dropped 30 to 50% in affected verticals. The problem with every existing guide on information gain is that they explain the rubric but leave the actual scoring as a manual exercise. This post shows a Claude Code workflow that scores any draft against the real top-10 pages currently ranking for your target keyword, identifies the exact gaps, and outputs a gap report with specific rewrite instructions, all before you publish a single word.

What Is Information Gain in SEO, and Why Did March 2026 Change Everything?

Information gain as a concept predates 2026. Google’s language modeling patents from as early as 2020 describe a system for measuring the marginal contribution of a document to the existing corpus, essentially a scoring mechanism for originality. The idea is that when Google indexes a new page, it compares that page against everything already indexed for the same topic and asks how much new information the page introduces.

For years, this signal operated quietly alongside E-E-A-T, topical authority, and freshness. What the March 2026 core update did was raise its weight significantly, particularly in verticals saturated by AI-generated content. Google’s system needed a way to distinguish between a thousand pages restating the same facts and the one page that actually ran the test, interviewed the expert, or collected the data. Information gain became that mechanism.

The downstream effects reach further than traditional rankings. Ahrefs research from March 2026 found that 38% of AI Overview citations come from top-10 organic results, meaning the same pages that rank for information gain also get cited in AI answers. A Princeton research team’s GEO study (KDD 2024), cited in Searchbloom’s information gain analysis, found that content containing direct quotations received a 42.6% citation lift in AI-generated responses, and content with original statistics received a 33% lift. Information gain and AI citation probability are measuring the same underlying quality signal from different angles.

Applying Koray Tugberk Gubur’s topical authority framework to this question: once a site achieves breadth of coverage, whether each individual piece is genuinely additive becomes the differentiating factor. A topical cluster built on derivative content eventually plateaus, because the individual posts can’t pull citations, backlinks, or AI mentions if they’re saying the same things as the pages already ranking. See how topical authority mapping connects to this in the topical authority mapping with Claude Code post.

Why Reading About the Rubric Is Not the Same as Running It

Most information gain content lands in the same place: explain the five-dimension scoring rubric, tell the reader to add more original data, and leave the actual measurement as a manual task. The implicit assumption is that you’ll read your draft, compare it mentally against some vague sense of what’s ranking, and decide whether it passes.

That approach has two problems. First, it’s not reproducible. The same writer, scoring the same post on different days, will get different results because the scoring is based on memory and impression rather than actual comparison against the live SERP. Second, it’s slow. Fetching and reading 10 competitor pages manually, scoring each one, and then identifying the specific gaps in your draft is a 45-minute process at minimum, which means it rarely gets done.

The Claude Code workflow in the next section cuts that 45 minutes to under 4. More importantly, it makes the scoring objective: the rubric runs against actual page content, not against a mental model of what’s out there.

The Five-Dimension Information Gain Rubric

This is the rubric the Claude Code workflow uses to score both competitor pages and your draft. Each dimension reflects a type of content that other pages cannot easily replicate because it requires direct access, original thinking, or timeliness that generic content cannot provide.

- Proprietary data (0-2): Does the page cite data only this author or organization could have collected? Survey results, platform analytics, internal test data, or client outcomes that appear nowhere else. Score 2 for original primary research, 1 for data from sources not commonly cited by competitors, 0 for stats reused from the same widely-referenced studies.

- First-hand evidence (0-2): Does the page document something the author actually did, built, ran, or observed? Not “you can use Claude to score content” but “I ran this workflow on 12 posts and here’s what the gap reports showed.” Score 2 for documented practitioner results, 1 for clear practitioner perspective without specific outcomes, 0 for advice written from a third-person distance.

- Original framework (0-2): Does the page introduce a named model, structure, or approach that is not found in the competing results? A rubric with a name, a workflow with discrete steps, a decision tree with labeled outcomes. Score 2 for a named, citable framework, 1 for a novel structure without a name, 0 for reorganization of existing frameworks from other sources.

- Expert attribution (0-2): Does the page cite named authorities making non-obvious claims, not just links as decorations? The relevant standard is whether the citation adds a claim the reader would not have found by searching the keyword themselves. Score 2 for named expert citations with non-obvious claims, 1 for named citations with commonly known claims, 0 for no named attribution or vague “experts say” construction.

- Freshness hook (0-1): Does the page contain a 2026-specific angle, data point, or development that a pre-2025 post could not have included? Score 1 for a genuine freshness element tied to recent events or data, 0 for evergreen content with a date dropped in the title.

Maximum score: 9. Publish threshold: 7 or above.

Here’s what that looks like in practice. The entity SEO and knowledge graph post on this blog scores as follows: proprietary data 1 (uses Google NLP API outputs from an actual run, not original research data), first-hand evidence 2 (documents a workflow built and tested across real client projects), original framework 2 (the CLAUDE.md memory persistence combined with parallel subagent extraction is not described anywhere else), expert attribution 2 (Jason Barnard and Koray Tugberk cited with specific, non-obvious claims), freshness hook 1 (anchored to the June 2025 Knowledge Graph update). Total: 8 out of 9. Ships.

A generic “what is information gain” post scored against the same rubric: proprietary data 0 (all stats recycled from the same three widely-cited studies), first-hand evidence 0 (written from a distance, no documented examples), original framework 0 (restates the five-dimension rubric already published by multiple sources), expert attribution 1 (cites one source with a claim the reader could find by Googling), freshness hook 1 (has “2026” in the title). Total: 2 out of 9. Does not ship. That’s the gap this workflow finds before the post goes live rather than six weeks later when rankings don’t materialize.
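To make the arithmetic concrete, here is a minimal Python sketch of the rubric as a data structure. It is an illustration only, not the skill's actual implementation: the names `RubricScore`, `MAX_POINTS`, and `PUBLISH_THRESHOLD` are assumptions introduced for this example, while the point caps and the threshold of 7 come from the rubric above.

```python
from dataclasses import dataclass

# Maximum points per dimension, taken from the rubric in this post.
MAX_POINTS = {
    "proprietary_data": 2,
    "first_hand_evidence": 2,
    "original_framework": 2,
    "expert_attribution": 2,
    "freshness_hook": 1,
}
PUBLISH_THRESHOLD = 7  # out of a maximum of 9


@dataclass(frozen=True)
class RubricScore:
    """Hypothetical container for one page's five dimension scores."""

    proprietary_data: int
    first_hand_evidence: int
    original_framework: int
    expert_attribution: int
    freshness_hook: int

    def __post_init__(self) -> None:
        # Reject any score outside the range the rubric allows for its dimension.
        for name, cap in MAX_POINTS.items():
            value = getattr(self, name)
            if not 0 <= value <= cap:
                raise ValueError(f"{name} must be between 0 and {cap}, got {value}")

    @property
    def total(self) -> int:
        return sum(getattr(self, name) for name in MAX_POINTS)

    @property
    def ships(self) -> bool:
        return self.total >= PUBLISH_THRESHOLD


# The two worked examples from this section.
entity_post = RubricScore(1, 2, 2, 2, 1)
generic_post = RubricScore(0, 0, 0, 1, 1)
print(entity_post.total, entity_post.ships)
print(generic_post.total, generic_post.ships)
```

Validating the caps at construction time is a design choice for the sketch: it stops a scorer from quietly awarding a 2 on the freshness hook, which only goes to 1.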

How to Build a Pre-Publish Information Gain Scorer in Claude Code

The full workflow runs in five steps. Once saved as a skill file, it runs in a single command. Here’s what happens under the hood.

Step 1: Read context from CLAUDE.md

When the skill launches, it reads the project’s CLAUDE.md file to pull any pre-existing keyword strategy, content cluster context, or previously run scores for the same query. If you’ve run this workflow before on a post in the same cluster, Claude doesn’t ask you for context you’ve already provided. It uses what’s stored.
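As a rough illustration of what "reading stored context" can mean, the sketch below pulls the lines under a given header out of a markdown file's text. The function name `extract_section` and the sample entry format are hypothetical; the post does not specify how entries in CLAUDE.md are laid out, and in practice Claude reads the file itself, with no parser involved.

```python
def extract_section(markdown_text: str, header: str = "## Information Gain Scores") -> list[str]:
    """Return the non-empty lines under `header`, stopping at the next # or ## header."""
    section: list[str] = []
    inside = False
    for line in markdown_text.splitlines():
        if line.strip() == header:
            inside = True
            continue
        if inside and line.startswith(("# ", "## ")):
            break  # the next top-level or second-level header ends the section
        if inside and line.strip():
            section.append(line.strip())
    return section


# Hypothetical CLAUDE.md content; the entry format is an assumption.
sample = """# Project notes

## Keyword strategy
- cluster: information gain

## Information Gain Scores
- information gain SEO: draft 6/9, baseline 5

## Other
- unrelated note
"""
print(extract_section(sample))
```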

Step 2: Pull the live SERP with Ahrefs MCP

Claude calls mcp__claude_ai_ahrefs__serp-overview with the target keyword to retrieve the current top-10 ranking URLs. This is live data from Ahrefs, not a cached or estimated result. You get the actual pages competing for that keyword today, which matters because the SERP for “information gain SEO” looks different in May 2026 than it did in October 2025.

Step 3: Fetch competitor pages in parallel subagents

Claude launches one subagent per competitor URL, all running simultaneously. Each subagent fetches the page content and scores it against the five-dimension rubric, producing a structured output: dimension scores, supporting evidence for each score, and a one-line summary of the page’s strongest information gain element.

Running 10 subagents in parallel rather than sequentially cuts the fetch-and-score time from roughly 20 minutes to under 2 minutes. The main agent waits for all subagents to complete, then aggregates the results into a competitor baseline: the average score across the top 10, and the highest score on each individual dimension.
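The fan-out and aggregation can be sketched in plain Python, using a thread pool as a stand-in for parallel subagents. This is an analogy under stated assumptions, not how Claude Code runs subagents internally: `score_page` is a hypothetical placeholder that returns canned scores without touching the network, and the example.com URLs are made up.

```python
from concurrent.futures import ThreadPoolExecutor
from statistics import mean

DIMENSIONS = (
    "proprietary_data",
    "first_hand_evidence",
    "original_framework",
    "expert_attribution",
    "freshness_hook",
)

# Canned scores for three made-up competitor URLs (illustrative only).
CANNED = {
    "https://example.com/a": dict(zip(DIMENSIONS, (2, 1, 0, 1, 1))),
    "https://example.com/b": dict(zip(DIMENSIONS, (0, 2, 1, 1, 0))),
    "https://example.com/c": dict(zip(DIMENSIONS, (1, 0, 2, 2, 1))),
}


def score_page(url: str) -> dict:
    """Hypothetical stand-in for one subagent: look up canned scores."""
    return CANNED[url]


def build_baseline(urls: list, scorer=score_page) -> dict:
    """Score every URL concurrently, then aggregate into a competitor baseline."""
    if not urls:
        raise ValueError("need at least one competitor URL")
    # One worker per URL, mirroring one subagent per competitor page.
    with ThreadPoolExecutor(max_workers=len(urls)) as pool:
        results = list(pool.map(scorer, urls))
    totals = [sum(r[d] for d in DIMENSIONS) for r in results]
    return {
        "average_total": mean(totals),
        "best_per_dimension": {d: max(r[d] for r in results) for d in DIMENSIONS},
    }


baseline = build_baseline(list(CANNED))
print(baseline["average_total"])
print(baseline["best_per_dimension"])
```

Keeping the best score per dimension, not just the average, matters for the later gap step: a draft is compared against the strongest competitor on each dimension, which may be a different page each time.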

Step 4: Score your draft against the same rubric

Claude reads your draft directly from the filesystem using the file path you provide. It scores the draft on the same five dimensions using the same rubric the competitor subagents used, producing a draft score alongside the competitor baseline.

Step 5: Generate the gap report

Claude compares your draft score to the competitor baseline dimension by dimension. For every dimension where your draft scores below the top competitor, it generates a specific rewrite instruction, not a vague suggestion. Not “add more original data” but “the top-ranking competitor cites internal A/B test results from 500 posts; your draft has no proprietary data source. Add the client outcome from the Pacific Fence keyword test you ran in April, which is not cited anywhere else.”

The gap report is written to information-gain-report.md in the project directory, and a summary (the draft score, the competitor baseline, and the top two gaps) is appended to CLAUDE.md under a ## Information Gain Scores header. The post does not get published until the draft score hits 7 or above. That’s the gate.
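The comparison and the gate reduce to a few lines of logic, sketched below. The function names and the summary line format are assumptions made for illustration; the rewrite instructions themselves come from the model reading the competitor pages, so the code only identifies where an instruction is needed.

```python
PUBLISH_THRESHOLD = 7  # the gate from this post: 7 or above out of 9


def gap_report(draft: dict, best_per_dimension: dict) -> dict:
    """Compare a draft to the top competitor score on each dimension."""
    total = sum(draft.values())
    gaps = {
        dim: best - draft[dim]
        for dim, best in best_per_dimension.items()
        if draft[dim] < best
    }
    return {"draft_total": total, "gaps": gaps, "publish": total >= PUBLISH_THRESHOLD}


def summary_lines(keyword: str, report: dict) -> list:
    """Build the lines that would go under the scores header (format is assumed)."""
    status = "PASS" if report["publish"] else "HOLD"
    lines = [
        "## Information Gain Scores",
        f"- {keyword}: draft {report['draft_total']}/9 ({status})",
    ]
    for dim, shortfall in report["gaps"].items():
        lines.append(f"  - {dim}: {shortfall} below top competitor")
    return lines


# Illustrative scores, not real measurements.
draft = {
    "proprietary_data": 0,
    "first_hand_evidence": 2,
    "original_framework": 1,
    "expert_attribution": 2,
    "freshness_hook": 1,
}
best = {
    "proprietary_data": 2,
    "first_hand_evidence": 2,
    "original_framework": 2,
    "expert_attribution": 2,
    "freshness_hook": 1,
}
report = gap_report(draft, best)
print("\n".join(summary_lines("information gain SEO", report)))
```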

The full workflow is covered in depth in the context of building reusable SEO skill files in the Claude Code skills for SEO post.

Saving This as a Permanent Skill File

Running this as a one-off prompt defeats half the value. The goal is a standing capability you invoke on every draft, every time, in one line.

Save the skill to ~/.claude/skills/information-gain-scorer/SKILL.md. The SKILL.md file contains the full workflow instructions: the CLAUDE.md read, the Ahrefs MCP call, the subagent structure, the rubric, the gap report output format, and the CLAUDE.md write step. From that point forward, invoking the scorer is a single command:

/information-gain-scorer drafts/post-24-information-gain-seo-claude-code.html "information gain SEO"

For ongoing post-publish monitoring, the skill supports loop scheduling. After publishing, run:

/loop 14d /information-gain-scorer drafts/post-24-information-gain-seo-claude-code.html "information gain SEO"

Every 14 days, Claude re-runs the scorer against the current SERP. If new competitors have published higher-scoring content, the gap report updates with the new gaps. If your post’s score has eroded relative to new entrants, you get a specific update brief. The post doesn’t sit static for six months and then drop. You catch the drift early and address it. This connects to the broader Claude for SEO monitoring approach where loop scheduling replaces manual audit cadences.

What to Do When Your Score Comes Back Below 7

The gap report ranks dimensions by the size of the gap between your draft and the top competitor. Start with the two largest gaps, not all five at once.
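A small sketch of that prioritization, assuming per-dimension scores are held in dictionaries. The function name `top_gaps` is hypothetical, and breaking ties by rubric order is an assumption of this example; the post does not state a tie-break rule.

```python
RUBRIC_ORDER = (
    "proprietary_data",
    "first_hand_evidence",
    "original_framework",
    "expert_attribution",
    "freshness_hook",
)


def top_gaps(draft: dict, top_competitor: dict, limit: int = 2) -> list:
    """Return up to `limit` (dimension, gap) pairs, largest gap first."""
    gaps = [
        (dim, top_competitor[dim] - draft[dim])
        for dim in RUBRIC_ORDER
        if top_competitor[dim] > draft[dim]
    ]
    # sorted() is stable even with reverse=True, so equal-sized gaps
    # keep rubric order (an assumed tie-break).
    return sorted(gaps, key=lambda item: item[1], reverse=True)[:limit]


# Illustrative scores, not real measurements.
draft = dict(zip(RUBRIC_ORDER, (0, 1, 1, 2, 0)))
top_competitor = dict(zip(RUBRIC_ORDER, (2, 2, 2, 2, 1)))
print(top_gaps(draft, top_competitor))
```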

Proprietary data and first-hand evidence are the highest-value gaps to close but also the most work. They require going back to source material: actual test runs, client campaigns, platform exports, or tool outputs you have access to that a competitor cannot replicate. If you don’t have proprietary data for this draft, the fastest substitute is documenting your methodology in enough detail that a reader could reproduce it and would get results matching yours. That specificity functions as first-hand evidence even without proprietary numbers.

Expert attribution and freshness hook are the fastest wins. Expert attribution gaps close by identifying one named authority with a non-obvious claim and building a paragraph around that claim rather than citing it as a footnote. Freshness hook gaps close by anchoring a section to a specific 2026 development: a named update, a verified stat from a recent study, a tool change that post-dates your competitors’ publication dates.

Original framework gaps are the most durable improvement. If your draft doesn’t introduce a named model or structure, name one. The five-dimension rubric in this post is an example: it’s not a novel invention, but naming it, scoring it consistently, and applying it to real examples makes it citable and quotable in a way that an unnamed list of tips is not. See how this plays out in practice in the answer engine optimization post, where the structured rubric is what differentiates the post from generic AEO coverage.

Why This Workflow Requires Claude Code, Not a ChatGPT Prompt

Every element of this workflow breaks if you try to run it in a chat interface:

- Live SERP data. mcp__claude_ai_ahrefs__serp-overview returns what’s ranking today. ChatGPT works only with what you paste in, which means manually fetching and formatting 10 competitor URLs before you even start.

- Parallel subagents. Ten competitor pages analyzed simultaneously in Claude Code. Sequentially in a chat interface, you’re looking at 10 separate prompts and roughly 20 minutes of back-and-forth before you have a complete competitor picture.

- Persistent CLAUDE.md context. The skill reads your keyword cluster strategy, previous scores, and client context from CLAUDE.md before running. It doesn’t ask for information you’ve already provided. A ChatGPT session starts blank every time.

- Permanent skill file. /information-gain-scorer is one command. The equivalent ChatGPT workflow is re-pasting the full prompt, the rubric, and the context every session.

- Direct filesystem read. Claude reads your draft as a file. ChatGPT requires you to copy and paste the full text, plus manage the context window if the draft is long.

- Loop scheduling. /loop 14d re-runs the scorer automatically on a schedule. ChatGPT has no scheduling, no memory across sessions, and no concept of a standing capability. For more on building loop-based monitoring workflows, the dedicated post covers how to structure recurring agents.

Frequently Asked Questions

What is information gain in SEO?

Information gain in SEO is a measure of how much original, unique knowledge a page adds to the existing set of pages already indexed for a given topic. Google’s systems compare a new page against the existing corpus to assess whether it introduces original data, first-hand observations, or conclusions not already present in competing results. Pages that score high on information gain are more likely to rank and more likely to be cited in AI-generated answers.

How does Google measure information gain?

Google does not publish the exact mechanism, but its language model patents describe a system for calculating the marginal contribution of a document to the existing indexed corpus. Practitioners approximate this using cosine similarity of text embeddings, comparing your page’s vector representation against each competitor’s and measuring how different your page is. Searchbloom’s detailed IGS analysis formalizes this as IGS = 1 minus the maximum cosine similarity between your document embedding and each competitor’s embedding.
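The formula quoted above is simple to compute once you have embeddings. The sketch below uses plain Python and tiny hand-written vectors in place of real embeddings; it illustrates the practitioner approximation only and says nothing about how Google computes anything. The function names are assumptions for this example.

```python
import math


def cosine_similarity(a: list, b: list) -> float:
    """Cosine of the angle between two equal-length, non-zero vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)


def information_gain_score(doc: list, competitors: list) -> float:
    """IGS = 1 - max cosine similarity between the doc and any competitor."""
    if not competitors:
        raise ValueError("need at least one competitor embedding")
    return 1.0 - max(cosine_similarity(doc, c) for c in competitors)


# Toy 2-dimensional "embeddings" (real ones have hundreds of dimensions).
doc = [1.0, 0.0]
competitors = [[0.0, 1.0], [1.0, 1.0]]
print(round(information_gain_score(doc, competitors), 4))
```

Because the score takes the maximum similarity, one near-duplicate competitor is enough to push IGS toward 0, no matter how different the other nine pages are.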

Does information gain replace E-E-A-T as a ranking signal?

No. Information gain and E-E-A-T are complementary, not competing signals. E-E-A-T asks whether the author and site are credible sources of information. Information gain asks whether the content itself adds something new. A page can have high E-E-A-T and low information gain if a credible author simply restates what everyone else has already written. The March 2026 update raised the weight of information gain; it did not lower the weight of E-E-A-T.

What is a good information gain score for a blog post?

Using the five-dimension rubric in this post (maximum score of 9), a score of 7 or above is the publish threshold for competitive queries. For lower-competition queries where top-ranking pages score 4 to 5, a score of 6 may be sufficient. The relevant benchmark is always your actual SERP competitors for the target keyword, not an abstract standard.

How is information gain different from topical authority?

Topical authority is about breadth and coverage: whether your site covers a subject area comprehensively enough that Google treats it as an authoritative source. Information gain is about depth within each individual piece: whether a specific post adds something the other posts on the topic don’t. You can have strong topical authority built on derivative content, but it will plateau as individual posts fail to earn citations, backlinks, or AI mentions. The two signals compound when each post in a cluster scores well on information gain. The topical authority mapping post covers how to build the cluster architecture; this post covers how to make each piece within the cluster pull its weight.

Can AI-generated content score well on information gain?

AI-generated content that lacks original inputs will score 0 on proprietary data and first-hand evidence by definition, because it has no access to outcomes the author actually observed. AI-assisted content, where Claude Code is used to structure, analyze, and present information the practitioner has gathered, can score well, because the original inputs (test results, client data, workflows built and run) come from the practitioner, not the model. The distinction is who provides the raw material, not who writes the sentences.

What’s Next

The information gain scorer workflow described here is one layer of a broader semantic content quality system. The next post in this series covers the EAV (Entity-Attribute-Value) framework: how to structure content so Google reads it as a machine-interpretable knowledge entry rather than a blob of text. If the information gain scorer tells you what your content is missing, the EAV framework tells you how to structure what you add so it sticks in Google’s entity model.

To get both workflows as Claude skill files, along with the full CLAUDE.md templates for running them, sign up for the email list below. New workflows drop every Tuesday and Friday.