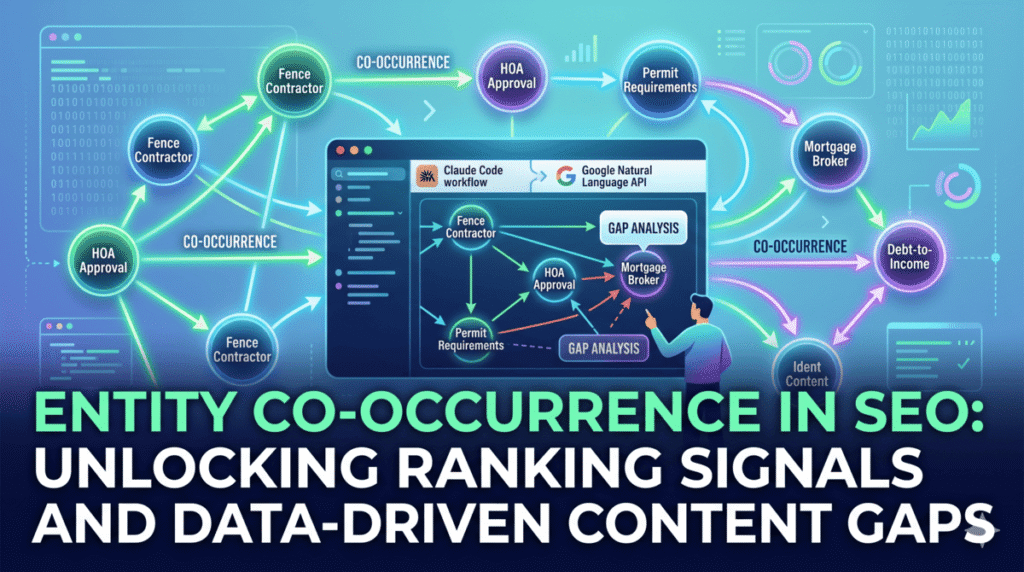

Entity co-occurrence is one of the least talked-about ranking signals in SEO, and one of the most measurable. When Google repeatedly sees two entities appear together across thousands of web pages (“fence contractor” alongside “HOA approval,” or “mortgage broker” alongside “debt-to-income ratio”), it learns that those concepts belong together. Pages that cover a topic without mentioning the entities Google expects to see around it score as semantically thinner than pages that do. That gap is measurable, and it is fixable.

The problem is that most content gap analysis tools work at the keyword level, not the entity level. They tell you which search terms your competitors rank for that you don’t. They do not tell you which concepts Google expects to find on your page based on how top-ranking competitors have taught it to understand the topic. This post covers the Claude Code workflow that closes that gap: crawl your top five competitors, extract every entity from each page using the Google Natural Language API, build a frequency table, and surface the entity relationships your page is missing. The full process runs in about 20 minutes.

What is entity co-occurrence and why does it affect your rankings?

Start with a simple way to think about this. If you read ten articles about buying a house, you would expect to see “mortgage,” “down payment,” “closing costs,” and “home inspection” appear across most of them. An article about buying a house that never mentioned closing costs would feel incomplete. Google works the same way. After processing billions of pages, it has learned which concepts reliably appear together within a topic. When your page covers a subject but is missing the entity relationships Google expects to see, your content looks incomplete compared to the pages that rank above you.

This is entity co-occurrence: the pattern of which named entities appear together within and across documents covering the same topic. Google uses these patterns to build its understanding of how concepts relate to each other in the real world. Koray Tugberk Gubur documented this in his semantic content network research, showing that Google’s Knowledge Graph is partly built from co-occurrence patterns across the web, not just from structured data sources like Wikipedia.

The practical SEO implication: if four out of five top-ranking pages for “fence installation Portland” consistently mention “HOA approval,” “permit requirements,” and “property line” alongside “fence contractor,” Google has learned that these entities belong together. Your fence installation page that covers materials and pricing but never touches permits or HOA rules will score as less complete on the topic than pages that cover the full entity neighborhood.
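To make the idea concrete, here is a minimal Python sketch that counts how many pages contain a given pair of entities. The page contents are invented toy data that mirror the fence example, not real crawl results, and `pair_counts` is a hypothetical helper name.

```python
from collections import Counter
from itertools import combinations

# Toy data (an assumption for illustration): the set of entities found on
# each of five hypothetical top-ranking pages.
pages = [
    {"fence contractor", "HOA approval", "permit requirements", "property line"},
    {"fence contractor", "HOA approval", "permit requirements", "property line"},
    {"fence contractor", "HOA approval", "permit requirements", "property line"},
    {"fence contractor", "HOA approval", "permit requirements", "property line"},
    {"fence contractor", "cedar wood"},
]

def pair_counts(pages):
    """Count, for every unordered entity pair, the number of pages containing both."""
    counts = Counter()
    for entities in pages:
        # sorted() gives each pair a stable order so (a, b) and (b, a) merge.
        for pair in combinations(sorted(entities), 2):
            counts[pair] += 1
    return counts

counts = pair_counts(pages)
for partner in ("HOA approval", "permit requirements", "property line"):
    key = tuple(sorted(("fence contractor", partner)))
    print(f"fence contractor + {partner}: {counts[key]} of {len(pages)} pages")
```

Each pair here shows up on four of the five toy pages, which is the kind of pattern the rest of this workflow tries to surface from real competitor pages.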

This builds directly on the entity salience work from the previous post in this series. Salience tells you how strongly your content signals a single entity. Co-occurrence tells you whether your content covers the right constellation of entities around that primary topic. Both matter for how Google categorizes and ranks your pages.

How is entity co-occurrence gap analysis different from standard content gap analysis?

Standard content gap analysis — the kind built into Ahrefs, Semrush, and similar tools — compares keyword rankings. It tells you that your competitor ranks for “cedar fence installation cost” and you don’t. That is useful for finding keywords to target with new content.

Entity co-occurrence gap analysis works differently. It does not look at what keywords competitors rank for. It looks at what concepts competitors consistently discuss together. The difference matters because Google’s understanding of a topic is built from entities and their relationships, not from keyword lists.

A keyword gap tells you to create new content. An entity co-occurrence gap tells you what your existing content is missing. It is an on-page optimization signal, not a content creation signal. The same page, updated to include the entity relationships Google expects, can move meaningfully in rankings without creating anything new.

That is the practical reason to run this analysis before starting any major content refresh. You are not guessing at what to add. You are reading what Google has already been taught by the pages it ranks above you.

How do you set up the entity co-occurrence workflow in Claude Code?

The workflow uses three tools inside Claude Code: WebFetch to pull competitor pages, the Bash tool to call the Google Natural Language API (the same API covered in the entity salience post), and CLAUDE.md to store results persistently. If you have not set up the Google NLP API key yet, that post walks through the free tier setup.

Start by adding a co-occurrence analysis section to your CLAUDE.md file:

## Co-Occurrence Analysis

### Target Page

- URL: https://yoursite.com/your-target-page/

- Primary entity: [what this page should rank for]

### Competitor Pages

1. https://competitor1.com/their-equivalent-page/

2. https://competitor2.com/their-equivalent-page/

3. https://competitor3.com/their-equivalent-page/

4. https://competitor4.com/their-equivalent-page/

5. https://competitor5.com/their-equivalent-page/

To find your five competitor URLs, search your target keyword on Google and take the top five organic results that are not your own site. You can also use the Ahrefs MCP inside Claude Code to pull them automatically:

Use the Ahrefs site-explorer-organic-keywords MCP tool to find the top 5 ranking URLs

for the keyword "[your target keyword]" that are not from [your domain].

Add them to the Competitor Pages section in CLAUDE.md.

Once CLAUDE.md is set up, the analysis runs from a single instruction block.
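If you want to sanity-check the section before starting a session, a short Python sketch can pull the URLs back out of it. The parsing rules (a `- URL:` line for the target, numbered lines for competitors) are assumptions based on the template above, and `parse_targets` is a hypothetical helper, not part of Claude Code.

```python
import re

# Sample text following the template above; in practice, read CLAUDE.md from disk.
SAMPLE = """\
## Co-Occurrence Analysis

### Target Page
- URL: https://yoursite.com/your-target-page/
- Primary entity: fence installation

### Competitor Pages
1. https://competitor1.com/their-equivalent-page/
2. https://competitor2.com/their-equivalent-page/
"""

def parse_targets(markdown):
    """Return (target_url, competitor_urls) from the Co-Occurrence Analysis section."""
    target = re.search(r"^- URL:\s*(\S+)", markdown, flags=re.MULTILINE)
    competitors = re.findall(r"^\d+\.\s*(https?://\S+)", markdown, flags=re.MULTILINE)
    return (target.group(1) if target else None), competitors

target_url, competitor_urls = parse_targets(SAMPLE)
print(target_url)
print(competitor_urls)
```

A quick check like this catches a missing target URL or a short competitor list before any API calls are made.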

How do you run the co-occurrence analysis inside a Claude Code session?

Open a Claude Code session and paste this instruction. Claude will do the crawling, entity extraction, and gap analysis automatically:

Read CLAUDE.md to get the target page URL and the five competitor URLs listed under Co-Occurrence Analysis.

Run the entity extraction in parallel. Launch a separate subagent for each competitor URL that does the following:

1. Fetch the page content using WebFetch

2. Extract the plain text body, stripping all HTML

3. Call the Google NLP API via Bash to extract all entities:

curl -s -X POST \
  "https://language.googleapis.com/v1/documents:analyzeEntities?key=$GOOGLE_NLP_KEY" \
  -H "Content-Type: application/json" \
  -d '{"document":{"type":"PLAIN_TEXT","content":"PAGE_TEXT_HERE"},"encodingType":"UTF8"}'

4. Parse the response and return: a list of entity names with their type (PERSON, ORGANIZATION, LOCATION, CONSUMER_GOOD, OTHER, etc.) and salience score

While the competitor subagents run, also call the NLP API on the target page using the same method.

After all six pages are processed, build a co-occurrence frequency table:

- List every entity found on 2 or more competitor pages

- Show how many competitor pages each entity appears on (out of 5)

- Show whether each entity appears on the target page

- Sort by: highest competitor frequency first

Identify priority gaps: entities that appear on 3 or more competitor pages but are absent from the target page.

Write the full report to CLAUDE.md under:

## Co-Occurrence Gap Report [today's date] - [target page URL]

That instruction handles the full pipeline. Because the subagents run in parallel, all five competitor pages are crawled and analyzed at the same time instead of one after another, which is what keeps the total time to around 20 minutes even when some competitor pages load slowly.
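One practical wrinkle in step 3: page text pasted straight into the single-quoted `-d` string will break the JSON as soon as it contains a quote or a newline. Below is a minimal Python sketch of building the request body safely and parsing the response. The field names (`entities`, `name`, `type`, `salience`) follow the documented `analyzeEntities` response shape; the helper names and the sample response values are illustrative assumptions, and no network call is made here.

```python
import json

def build_payload(page_text):
    """Serialize the analyzeEntities request body; json.dumps escapes quotes and newlines."""
    return json.dumps({
        "document": {"type": "PLAIN_TEXT", "content": page_text},
        "encodingType": "UTF8",
    })

def parse_entities(response_json):
    """Return (name, type, salience) tuples, highest salience first."""
    data = json.loads(response_json)
    rows = [
        (e["name"], e.get("type", "OTHER"), e.get("salience", 0.0))
        for e in data.get("entities", [])
    ]
    return sorted(rows, key=lambda row: row[2], reverse=True)

# Hand-written sample response (made-up values, for illustration only).
sample_response = json.dumps({
    "entities": [
        {"name": "Portland", "type": "LOCATION", "salience": 0.12},
        {"name": "fence contractor", "type": "OTHER", "salience": 0.41},
    ],
    "language": "en",
})

payload = build_payload('He said "call the HOA first"\nbefore building.')
print(payload)
print(parse_entities(sample_response))
```

One way to use this is to write the payload to a file and pass it to curl with `--data-binary @payload.json` in place of the inline `-d` string.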

What does the co-occurrence gap report look like?

Here is an example output for a fence installation page in Portland. Each row lists the entity, its type, how many of the five competitor pages include it, and whether the target page covers it.

## Co-Occurrence Gap Report 2026-05-16 - /fence-installation-portland/

Entity              | Type          | Competitor pages | On target page
--------------------|---------------|------------------|----------------
fence contractor    | OTHER         | 5 of 5           | YES
Portland            | LOCATION      | 5 of 5           | YES
HOA approval        | OTHER         | 5 of 5           | NO (GAP)
permit requirements | OTHER         | 5 of 5           | NO (GAP)
cedar wood          | CONSUMER_GOOD | 4 of 5           | YES
installation cost   | OTHER         | 4 of 5           | YES
vinyl fence         | CONSUMER_GOOD | 4 of 5           | NO (GAP)
property line       | OTHER         | 3 of 5           | NO (GAP)
wood staining       | OTHER         | 3 of 5           | NO (GAP)
Multnomah County    | LOCATION      | 3 of 5           | NO (GAP)

Priority gaps (3+ competitor pages, missing from target):

1. HOA approval: 5/5 competitors

2. permit requirements: 5/5 competitors

3. vinyl fence: 4/5 competitors

4. property line: 3/5 competitors

5. wood staining: 3/5 competitors

6. Multnomah County: 3/5 competitors

Reading this report, the story is clear. Every top-ranking page for “fence installation Portland” discusses HOA approval and permit requirements alongside fence contracting. The target page covers materials and pricing but skips the regulatory and administrative context that Google has learned belongs in this topic. Those two gaps alone, addressed in a few new paragraphs, close the most significant entity co-occurrence deficits the page has.

Vinyl fence appearing on four competitor pages is a different situation. Adding a section comparing vinyl to cedar might help co-occurrence, but it also introduces a competing primary entity that could dilute the salience of “fence contractor” as the page’s main topic. That is the tension the entity salience analysis helps you navigate. If the page already scores the fence contractor entity strongly, a brief comparison paragraph is probably fine. If salience is borderline, adding a full vinyl fence section could hurt more than it helps.
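The table logic behind a report like the one above is simple enough to sketch in Python. This is a minimal illustration, assuming each page has already been reduced to a list of entity names and that names match by exact string. The page data is a trimmed toy version of the fence example ("gate hardware" is an invented one-page entity added to show the filter), and the function names are hypothetical.

```python
from collections import Counter

def frequency_table(competitor_entities, target_entities, min_pages=2):
    """Rows of (entity, competitor_page_count, on_target), most frequent first."""
    counts = Counter()
    for entities in competitor_entities:
        counts.update(set(entities))  # set(): count presence per page, not mentions
    rows = [
        (entity, n, entity in target_entities)
        for entity, n in counts.items()
        if n >= min_pages
    ]
    return sorted(rows, key=lambda row: (-row[1], row[0]))

def priority_gaps(rows, threshold=3):
    """Entities on at least `threshold` competitor pages and absent from the target."""
    return [entity for entity, n, on_target in rows if n >= threshold and not on_target]

competitors = [
    ["fence contractor", "HOA approval", "vinyl fence", "property line", "HOA approval"],
    ["fence contractor", "HOA approval", "vinyl fence", "property line"],
    ["fence contractor", "HOA approval", "vinyl fence", "property line"],
    ["fence contractor", "HOA approval", "vinyl fence", "gate hardware"],
    ["fence contractor", "HOA approval"],
]
target = {"fence contractor"}

rows = frequency_table(competitors, target)
for entity, n, on_target in rows:
    print(f"{entity:<18} {n} of {len(competitors)}   {'YES' if on_target else 'NO (GAP)'}")
print(priority_gaps(rows))
```

Converting each page to a set before counting means a page that repeats "HOA approval" still counts once, which matches the report's "pages out of 5" framing.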

How do you use the gap report to improve your content without stuffing in every missing entity?

Not every gap in the report needs to be filled. The goal is not to mention every entity that competitors mention — it is to cover the entity relationships that make your content feel complete on the topic. There is a real difference between those two things.

Prioritize gaps where the entity is directly relevant to your page’s purpose and where the omission would actually feel like a hole to a reader. “HOA approval” on a fence installation page is one of the first questions homeowners actually have. Its absence from the page is a real reader problem, not just an entity gap. “Wood staining” is more of a contextual mention, relevant but not core to the primary topic.

Use Claude Code to draft the additions once you have decided which gaps to address:

Read the Co-Occurrence Gap Report in CLAUDE.md for /fence-installation-portland/.

I want to address these priority gaps: HOA approval, permit requirements, property line.

Fetch the current page content from [URL]. Draft two to three new paragraphs that naturally incorporate these entities in context. The additions should read as genuinely useful information for someone planning a fence installation project, not as a list of terms inserted for SEO. Do not add mentions of vinyl fence. That entity will be handled on a separate comparison page.

Present the draft paragraphs for review before suggesting where to place them in the existing content.

This keeps a human in the decision loop on what actually gets added. Claude drafts, you review, you decide what fits. The workflow automates the research and the drafting. The editorial judgment stays with you.

How do you save this as a repeatable skill you can run on any page?

Save the full workflow as a skill file at .claude/skills/entity-co-occurrence-audit/SKILL.md (Claude Code loads each skill from its own directory containing a SKILL.md file). The skill file contains the complete instruction set: read CLAUDE.md targets, run parallel subagents, build the frequency table, write the gap report. Once saved, you run it with:

/entity-co-occurrence-audit

For agencies managing multiple client sites, the skill file works across projects. Each client site has its own CLAUDE.md with its own co-occurrence analysis targets. The same skill command runs the analysis in any project context.

To schedule monthly co-occurrence monitoring on your own site:

/loop 30d /entity-co-occurrence-audit

Monthly monitoring catches two things: new entity gaps that open up as competitor content evolves, and confirmation that gaps you addressed in previous months improved. The CLAUDE.md report history gives you a dated record of how your pages’ entity coverage has changed over time, which is useful context for explaining ranking movements to clients.
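Comparing two dated reports comes down to a set difference. The Python sketch below assumes you have already copied the priority-gap lists out of two reports; the month names, the "fence height limit" entity, and the `compare_gap_reports` helper are all hypothetical.

```python
def compare_gap_reports(previous_gaps, current_gaps):
    """Split gaps into closed, newly opened, and still open between two audit runs."""
    previous, current = set(previous_gaps), set(current_gaps)
    return {
        "closed": sorted(previous - current),
        "new": sorted(current - previous),
        "still_open": sorted(previous & current),
    }

# Hypothetical priority-gap lists from two monthly reports (illustrative values).
april = ["HOA approval", "permit requirements", "property line"]
may = ["property line", "fence height limit"]

changes = compare_gap_reports(april, may)
for status, entities in changes.items():
    print(f"{status}: {entities}")
```

A gap can also drop off the list because competitors stopped mentioning the entity, so a "closed" entry is worth confirming against the target page itself.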

What are the most common mistakes when running entity co-occurrence analysis?

The first mistake is using weak competitor pages as your benchmarks. If you search your target keyword and the top results include thin pages, directory listings, or forum threads, those are not useful co-occurrence references. Look for competitors whose pages are genuinely strong — well-written, comprehensive, and clearly performing well on the query. The entity patterns from weak pages will not reflect what Google has learned to expect on the topic.

The second mistake is treating co-occurrence gaps as a keyword stuffing list. Adding “HOA approval” once in a natural sentence is very different from repeating it five times across the page. Co-occurrence is about whether an entity is present and meaningfully discussed, not about mention count. One well-integrated paragraph that explains HOA requirements for fence installation is more effective than six forced mentions of the phrase.

The third mistake is running the analysis and then ignoring entity type when prioritizing gaps. An entity typed as OTHER with no Wikipedia metadata is a concept Google may not have a clean Knowledge Graph entry for. Those entities are still worth addressing, but they carry less co-occurrence weight than entities typed as LOCATION, PERSON, or ORGANIZATION that Google can resolve to a specific Knowledge Graph node. When two gaps are otherwise equal, prioritize the one with a cleaner entity type classification.
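One way to apply that tie-breaker is a sort key that ranks by competitor frequency first and then prefers entities Google can resolve. This Python sketch assumes each gap is a dict carrying the `type` and `metadata` fields from the NLP API response (where `mid` and `wikipedia_url` appear for resolved entities). The `competitor_pages` field, the sample records, and the set of "cleaner" types are illustrative assumptions.

```python
# Entity types the paragraph above treats as "cleaner"; this set is an assumption
# for illustration, not an official ranking of Google NLP entity types.
RESOLVABLE_TYPES = {"LOCATION", "PERSON", "ORGANIZATION"}

def gap_sort_key(gap):
    """Sort by competitor frequency first, then prefer resolvable entities."""
    metadata = gap.get("metadata", {})
    has_kg_metadata = bool(metadata.get("mid") or metadata.get("wikipedia_url"))
    resolvable = gap["type"] in RESOLVABLE_TYPES or has_kg_metadata
    # False sorts before True, so resolvable entities win ties on frequency.
    return (-gap["competitor_pages"], not resolvable, gap["name"])

# Sample gap records (made-up values mirroring the example report).
gaps = [
    {"name": "wood staining", "type": "OTHER", "competitor_pages": 3, "metadata": {}},
    {"name": "Multnomah County", "type": "LOCATION", "competitor_pages": 3,
     "metadata": {"wikipedia_url": "https://en.wikipedia.org/wiki/Multnomah_County,_Oregon"}},
    {"name": "HOA approval", "type": "OTHER", "competitor_pages": 5, "metadata": {}},
]

ranked = sorted(gaps, key=gap_sort_key)
for gap in ranked:
    print(gap["name"], gap["type"], gap["competitor_pages"])
```

Frequency still dominates here: "HOA approval" stays on top despite its OTHER type, and the entity type only decides between the two gaps tied at three pages.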

The fourth mistake is running the analysis once and treating it as permanent. Competitor content evolves. New pages enter the top five. Entity co-occurrence patterns shift as Google updates its understanding of a topic. The /loop command exists because this is not a one-time audit. The sites that maintain entity coverage over time are the ones that hold rankings when algorithm updates shift what Google expects to see in a topic space.

For the broader semantic SEO picture, co-occurrence analysis sits between entity mapping and content gap analysis on the workflow hierarchy. You map which entities your site should be associated with, you score entity salience to see how your current content signals those associations, and you run co-occurrence analysis to find the entity relationships your content is missing relative to what already ranks. Each layer builds on the one before it. The full framework for using Claude Code across every layer of this process is covered in the Claude for SEO guide.

Frequently Asked Questions

What is entity co-occurrence in SEO?

Entity co-occurrence is the pattern of named entities — people, places, organizations, concepts, and things — that appear together across documents covering the same topic. Google uses co-occurrence patterns to learn which concepts belong together within a subject area. When your page covers a topic but is missing entities that consistently appear alongside that topic on competing pages, your content may score as less complete than pages that cover the full entity neighborhood.

How is entity co-occurrence different from keyword proximity?

Keyword proximity is about how close two specific search terms appear to each other within a document. Entity co-occurrence is about which named entities appear together across many documents covering the same topic. The distinction matters because Google classifies entities based on their semantic meaning, not just their string form. Two different phrasings of the same entity — “HOA approval” and “homeowners association approval” — both contribute to the co-occurrence relationship between that concept and a topic, even though they are different keyword strings.
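In your own frequency table, exact string matching would count those two phrasings as separate entities. A small normalization step before counting avoids that. The Python sketch below uses a hand-maintained alias map, which is an assumption for illustration; the map entries and the `canonical` helper are hypothetical.

```python
# Hand-maintained alias map (an assumption for illustration); keys are lowercase
# variants, values are the canonical label used in the frequency table.
ALIASES = {
    "homeowners association approval": "hoa approval",
    "hoa approvals": "hoa approval",
}

def canonical(name):
    """Lowercase, collapse whitespace, then map known variants to one label."""
    cleaned = " ".join(name.lower().split())
    return ALIASES.get(cleaned, cleaned)

page_entities = ["HOA approval", "Homeowners  Association Approval", "Property Line"]
normalized = sorted({canonical(name) for name in page_entities})
print(normalized)
```

Running each page's entity list through a step like this before building the table keeps one concept from being split across several rows.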

How do you find the right competitor pages to use in the analysis?

Search your target keyword on Google and use the top five organic results that are not your own domain. Exclude results from directories, forums, and aggregator sites that are not genuine editorial content. If several results are thin or low-quality pages that rank for reasons unrelated to content quality, it is acceptable to go further down the results to find five pages that genuinely reflect what strong content looks like for the topic.

Can you run co-occurrence analysis without the Google NLP API?

Yes, but with reduced accuracy. The Google NLP API classifies entities by type and resolves them to Knowledge Graph entries, which gives you more signal about which entities Google actually recognizes versus which are just noun phrases. An alternative is to run entity extraction using an open-source NLP library like spaCy via Claude Code’s Bash tool, which does not require an API key. The trade-off is that spaCy’s entity classification will not reflect Google’s specific Knowledge Graph understanding of the entities on your page.

How many competitor pages should you include in the analysis?

Five is a reliable baseline. It is large enough to identify genuine co-occurrence patterns rather than idiosyncratic choices by a single author, and small enough that the analysis runs quickly. For highly competitive queries where the top ten results are all strong, extending to seven or eight pages gives you a more robust frequency table, particularly for identifying entities that appear on the majority of pages versus those that appear on only one or two.

Should you address every entity gap the report identifies?

No. Prioritize gaps where the missing entity is directly relevant to your page’s purpose and where its absence would actually leave a reader without information they need. Entities that appear on competitor pages because of stylistic choices or tangential content are lower priority. The goal is to cover the entity relationships that make your content feel complete on the topic, not to match every single entity mention across competitor pages.

How long does the co-occurrence analysis take with Claude Code?

With parallel subagents processing all five competitor pages simultaneously, the crawl and entity extraction typically complete in eight to twelve minutes, depending on page load times and content length. Building the frequency table and gap report adds another few minutes. The total active time, including reviewing the output and deciding which gaps to address, is usually under 20 minutes for a single page analysis.